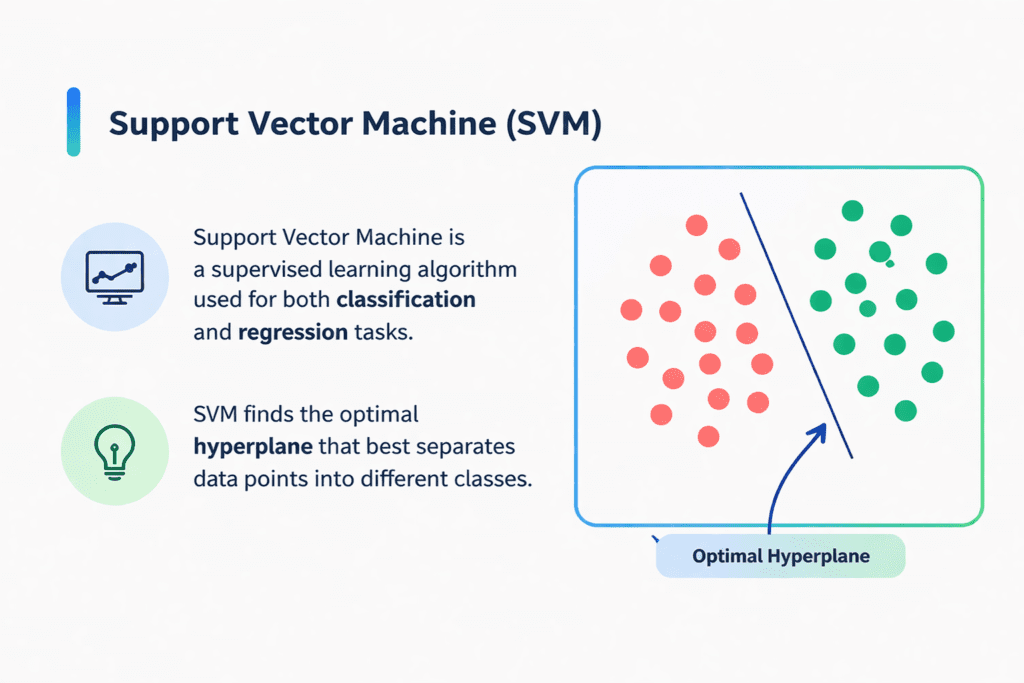

Support Vector Machine (SVM) is a powerful supervised machine learning algorithm used for both classification and regression problems. Its primary objective is to identify the most optimal boundary, known as a hyperplane, that separates different classes in a dataset.

SVM is widely used in binary classification tasks, such as:

- Spam detection (Spam vs. Not Spam)

- Image classification (Cat vs. Dog)

The key idea behind SVM is to maximize the margin, which is the distance between the separating boundary and the nearest data points from each class. A larger margin generally leads to better generalization, meaning the model performs well on unseen data.

Core Concepts of SVM #

1. Hyperplane #

A hyperplane is a decision boundary that divides the feature space into different classes.

In a linear case, it can be expressed as:

wx + b = 0

Where:

- w represents the weight vector

- b is the bias term

2. Support Vectors #

Support vectors are the data points that lie closest to the hyperplane. These points are critical because they directly influence the position and orientation of the decision boundary.

3. Margin #

The margin is the distance between the hyperplane and the nearest data points (support vectors).

SVM aims to maximize this margin, which improves the model’s robustness and reduces overfitting.

4. Kernel Trick #

Not all data can be separated using a straight line. SVM uses kernel functions to transform data into higher-dimensional space where it becomes easier to separate.

Common kernel types include:

- Linear Kernel

- Polynomial Kernel

- Radial Basis Function (RBF) Kernel

- Sigmoid Kernel

5. Hard Margin vs. Soft Margin #

- Hard Margin SVM

Assumes data is perfectly separable with no errors. It creates a boundary with zero misclassification but is sensitive to noise. - Soft Margin SVM

Allows some misclassification by introducing flexibility. It is more practical for real-world datasets that contain noise or overlapping classes.

6. Regularization Parameter (C) #

The parameter C controls the trade-off between:

- Maximizing the margin

- Minimizing classification errors

- A large C → fewer misclassifications but smaller margin (risk of overfitting)

- A small C → larger margin but more tolerance for errors (better generalization)

7. Hinge Loss Function #

SVM uses hinge loss to measure errors. It penalizes:

- Incorrect classifications

- Points that lie within the margin

This helps the model focus on difficult or borderline cases.

8. Dual Optimization Problem #

Instead of solving the problem directly, SVM often uses a dual formulation involving Lagrange multipliers.

This approach:

- Makes computation more efficient

- Enables the use of kernel functions

How Does the Support Vector Machine (SVM) Algorithm Work? #

The fundamental idea behind the Support Vector Machine (SVM) algorithm is to determine a decision boundary (hyperplane) that separates data points belonging to different classes in the most optimal way.

Instead of just finding any boundary, SVM focuses on selecting the one that creates the maximum possible margin between the classes. The margin is defined as the distance between the hyperplane and the closest data points from each class, which are known as support vectors.

A larger margin generally leads to better model performance because it improves the model’s ability to generalize to new, unseen data.

Choosing the Best Hyperplane #

In many cases, multiple hyperplanes can separate two classes. However, SVM selects the optimal hyperplane—the one that maximizes the margin between the classes.

When the data is perfectly separable, this optimal boundary is called a hard margin hyperplane. It ensures:

- No misclassification of data points

- Maximum separation between classes

For example, if several possible lines (such as L1, L2, and L3) can divide the dataset, SVM will choose the one with the largest distance from the nearest points of both classes (e.g., L2).

How Does SVM Classify the Data? #

Support Vector Machine (SVM) classifies data by finding a decision boundary (hyperplane) that separates different classes while maximizing the margin between them.

In real-world datasets, it is common to encounter outliers—data points that do not follow the general pattern. For example, imagine a blue point appearing inside a group of red points. This point can be considered an outlier.

One of the strengths of SVM is its ability to handle such outliers effectively. Instead of letting a single unusual point distort the decision boundary, SVM focuses on the overall structure of the data and selects a hyperplane that still maximizes the margin.

Handling Outliers with Soft Margin #

To deal with imperfect data, SVM uses the concept of a soft margin. This approach allows:

- Some data points to fall inside the margin

- A few misclassifications if necessary

This flexibility improves the model’s ability to generalize to new data, rather than overfitting to every single training point.

Objective of SVM Optimization #

SVM does not only try to maximize the margin—it also balances this goal with minimizing classification errors.

This trade-off can be expressed as an objective:

Objective Function=margin1+λ∑penalty

Where:

- Margin term → Encourages a wider separation between classes

- Penalty term → Adds cost for misclassified or margin-violating points

- λ (lambda) → Controls the balance between these two objectives

Hinge Loss (Penalty Function) #

SVM typically uses hinge loss to measure errors:

- If a data point is correctly classified and lies outside the margin →

Loss = 0 (no penalty) - If a data point is inside the margin or misclassified →

The loss increases as the point moves further away from the correct side

This means SVM pays more attention to difficult or incorrectly classified points, helping improve the decision boundary.

Linear vs Non-Linear Classification #

So far, we have considered linearly separable data, where a straight line (or hyperplane) can divide the classes—for example, separating red and blue points using a simple boundary.

However, not all datasets are this simple.

When the data cannot be separated using a straight line:

- SVM uses the kernel trick

- It maps the data into a higher-dimensional space

- In that space, the data becomes separable using a hyperplane

What If Data Is Not Linearly Separable? #

In many practical situations, data cannot be separated using a straight line or a simple hyperplane. Such datasets are called non-linearly separable, where classes overlap or form complex patterns that a linear model cannot divide effectively.

To address this challenge, Support Vector Machine (SVM) uses a powerful method known as the kernel trick.

Understanding the Kernel Trick #

The kernel trick allows SVM to transform data from its original feature space into a higher-dimensional space, where it becomes easier to separate the classes using a linear boundary.

Instead of explicitly calculating the coordinates in this higher dimension (which would be computationally expensive), SVM uses a kernel function to compute the relationships between data points directly. This makes the process efficient while still achieving complex transformations.

In simple terms:

- Data is not separable in its original form

- A kernel function is applied

- Data is implicitly mapped to a higher dimension

- A linear hyperplane is used to separate it in that new space

What Is a Kernel Function? #

A kernel is a mathematical function that measures similarity between data points in a transformed space without actually computing their new coordinates.

This approach allows SVM to:

- Handle complex, non-linear relationships

- Build flexible decision boundaries

- Work efficiently even with high-dimensional data

Types of Common Kernels #

1. Linear Kernel #

- Used when the dataset is already linearly separable

- Fast and simple

- Suitable for large datasets with many features

2. Polynomial Kernel #

- Transforms data into a higher-dimensional polynomial feature space

- Can model curved relationships between variables

- Controlled by degree (higher degree → more complex boundary)

3. Radial Basis Function (RBF) Kernel #

- One of the most widely used kernels

- Measures similarity based on the distance between points

- Creates smooth and flexible decision boundaries

In this case, a new feature can be interpreted as a function of distance (for example, distance from a central point), helping to separate data that was previously mixed.

Conceptual Example #

Imagine data points arranged in a circular pattern:

- In 2D → cannot be separated with a straight line

- After transformation → mapped into a higher dimension (e.g., 3D)

- Now → a flat hyperplane can easily divide the classes

This is how SVM converts a non-linear problem into a linear one in a transformed space.

Mathematical Formulation of Support Vector Machine (SVM) #

Let’s consider a binary classification problem where each data point belongs to one of two classes, labeled +1 and −1.

We are given a training dataset consisting of:

- Feature vectors x

- Corresponding labels y∈{+1,−1}

Equation of the Hyperplane #

In a linear SVM, the decision boundary (hyperplane) is defined as:

wTx+b=0

Where:

- w → weight vector (perpendicular to the hyperplane)

- b → bias term (controls the position of the hyperplane)

Distance from a Point to the Hyperplane #

The perpendicular distance of a data point xi from the hyperplane is:

di=∥w∥wTxi+b

Where ∥w∥ is the magnitude (Euclidean norm) of the weight vector.

Classification Rule #

Once the hyperplane is defined, classification is performed as:

y^={+1−1if wTx+b≥0if wTx+b<0

This means:

- If the result is positive → class +1

- If negative → class −1

Optimization Objective (Hard Margin SVM) #

For perfectly separable data, SVM aims to find a hyperplane that:

- Maximizes the margin

- Correctly classifies all data points

This leads to the following optimization problem:

minw,b21∥w∥2

Subject to:

yi(wTxi+b)≥1∀i=1,2,…,m

Where:

- xi → input features

- yi → class labels

- m → total number of samples

This constraint ensures that all points lie outside or on the margin boundary.

Soft Margin SVM (Handling Non-Separable Data) #

In real-world data, perfect separation is rare. To handle noise and overlap, SVM introduces slack variables ζi, allowing some violations.

The updated optimization becomes:

minw,b21∥w∥2+C∑i=1mζi

Subject to:

yi(wTxi+b)≥1−ζi,ζi≥0

Where:

- ζi → measures how much a point violates the margin

- C → regularization parameter controlling the trade-off

Insight:

- Large C → fewer errors, smaller margin (risk of overfitting)

- Small C → larger margin, more tolerance (better generalization)

Dual Formulation of SVM #

Instead of solving the problem directly, SVM often uses a dual optimization form, which introduces Lagrange multipliers αi.

The dual objective is:

maxα∑i=1mαi−21∑i=1m∑j=1mαiαjyiyjK(xi,xj)

Where:

- αi → Lagrange multipliers

- K(xi,xj) → kernel function

Only points with αi>0 become support vectors.

Decision Function Using Kernel #

After solving the dual problem, predictions are made using:

f(x)=∑i=1mαiyiK(xi,x)+b

This equation allows SVM to:

- Perform linear classification (with linear kernel)

- Handle non-linear data (using kernels like RBF or polynomial)

Bias Term Calculation #

The bias b is computed using any support vector:

b=wTxi−yi

Types of Support Vector Machine (SVM) #

Support Vector Machines can be broadly categorized based on the type of decision boundary they use to separate data. The two main types are:

1. Linear SVM #

A Linear SVM is used when the dataset can be separated using a straight line (in 2D) or a flat hyperplane (in higher dimensions).

In this case:

- The classes are clearly distinguishable

- A single boundary can divide the data without much overlap

- The algorithm finds the hyperplane that maximizes the margin between the two classes

Key Characteristics:

- Simple and fast to train

- Works well with high-dimensional data

- Less computationally expensive compared to non-linear SVM

Example:

Separating emails into spam and non-spam when features are clearly distinct.

2. Non-Linear SVM #

A Non-Linear SVM is used when the data cannot be separated by a straight line or flat hyperplane. In such cases, the relationship between features is more complex.

To handle this, SVM uses kernel functions to transform the data into a higher-dimensional space where a linear separation becomes possible.

How it works:

- Original data is mapped into a new feature space

- In that space, a linear hyperplane is found

- When mapped back, this appears as a non-linear decision boundary in the original space

Key Characteristics:

- Can handle complex and overlapping data

- Uses kernels like:

- Radial Basis Function (RBF)

- Polynomial

- Sigmoid

- More flexible but computationally heavier

Example:

Classifying data arranged in circular or spiral patterns where linear separation is not possible.

| Feature | Linear SVM | Non-Linear SVM |

|---|---|---|

| Boundary Type | Straight line / hyperplane | Curved / complex boundary |

| Data Requirement | Linearly separable data | Non-linear data |

| Complexity | Low | Higher |

| Speed | Faster | Slower |

| Kernel Usage | Not required | Required |