“Machine learning is not one single thing. It is an entire toolbox. Some tools need a teacher. Some tools learn by playing games. Some tools figure out patterns on their own. The secret to success is knowing which tool to pick for your problem.”

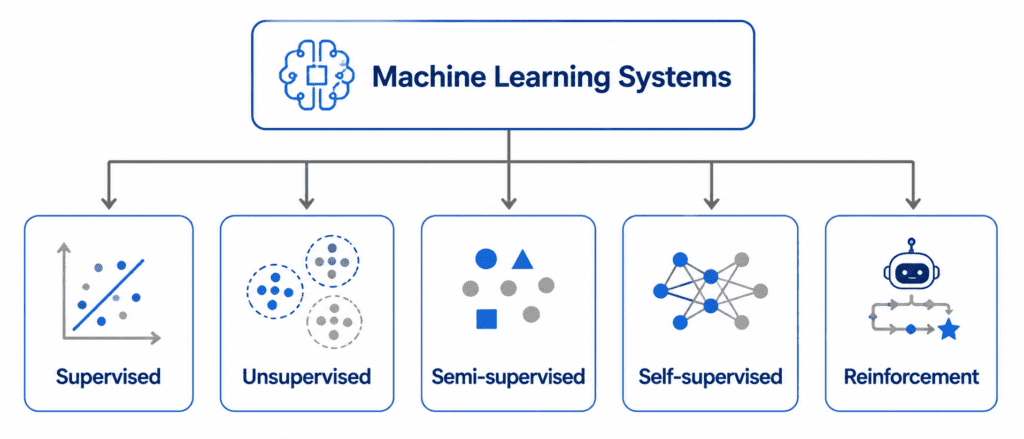

Types of Machine Learning Systems #

How We Classify ML Systems #

Machine learning systems can be classified along three main criteria. Think of these as three different questions you ask about your system:

| Question | Category |
|---|---|
| How much human help does it get? | Training Supervision |
| Can it learn from new data on the fly? | Batch vs Online Learning |
| How does it make predictions? | Instance-based vs Model-based Learning |

Let us explore each question in detail.

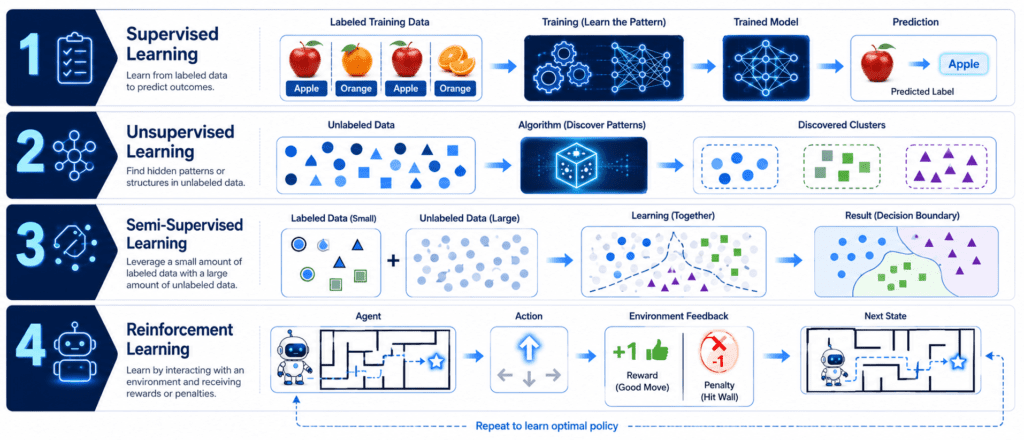

Training Supervision (How Much Human Help?) #

This is the most common way to classify ML systems. It asks: “Does the model have a teacher, or does it figure things out alone?”

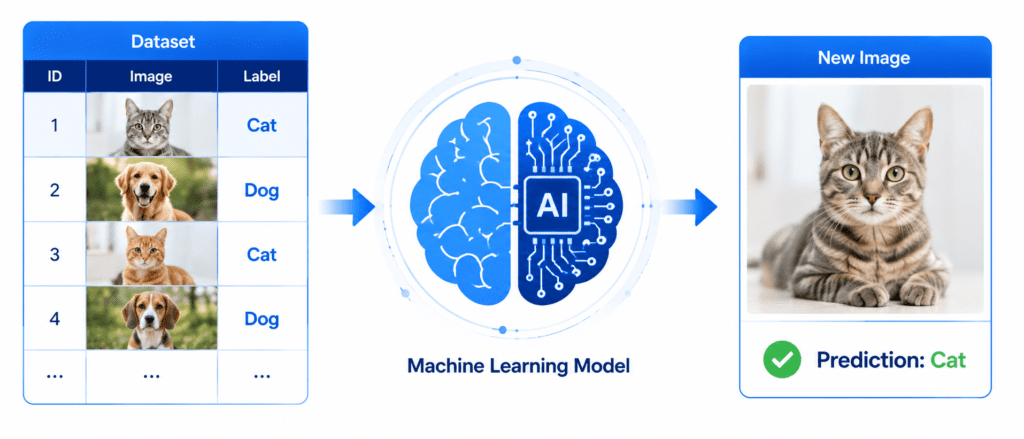

1. Supervised Learning #

The Core Idea: The model learns from labeled data. Labeled means the correct answer is provided for every training example.

How It Works Step by Step:

- You collect a dataset where each example has both input features AND the correct output

- You feed this labeled dataset to the model

- The model looks for patterns that connect inputs to outputs

- The model creates a mathematical mapping function

- When you give the model new, unseen data, it applies that mapping to predict the output

Real-World Example You See Every Day:

Your email spam filter. When you mark an email as “Spam,” you are giving the model a label. The model sees thousands of emails already labeled “Spam” or “Not Spam.” It learns that emails containing words like “lottery,” “million dollars,” or “click here” are usually spam. When a new email arrives, the model predicts “Spam” or “Not Spam” based on what it learned from the labeled examples.
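The spam filter idea can be sketched in a few lines of plain Python. This is a toy illustration, not how a production filter works: the four emails are invented, and the names `train_emails`, `train`, and `predict` are hypothetical. The "model" here simply counts which words show up more often under each label.

```python
from collections import Counter

# Toy labeled dataset: (email text, label). Invented for illustration.
train_emails = [
    ("win the lottery now click here", "Spam"),
    ("claim your million dollars click here", "Spam"),
    ("meeting notes attached for tomorrow", "Not Spam"),
    ("lunch tomorrow with the project team", "Not Spam"),
]

def train(examples):
    """Count how often each word appears under each label."""
    counts = {"Spam": Counter(), "Not Spam": Counter()}
    for text, label in examples:
        counts[label].update(text.split())
    return counts

def predict(counts, text):
    """Label an email by whether its words were seen more often in spam."""
    score = 0
    for word in text.split():
        score += counts["Spam"][word] - counts["Not Spam"][word]
    return "Spam" if score > 0 else "Not Spam"

model = train(train_emails)
print(predict(model, "click here to win a million"))  # Spam
print(predict(model, "notes from the team meeting"))  # Not Spam
```

The labels in `train_emails` play the role of the teacher: without them, `train` would have nothing to count against.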

Another Real Example:

House price prediction. You give the model data about houses: size in square feet, number of bedrooms, location, age of the house. You also provide the actual price each house sold for. The model learns that bigger houses with more bedrooms in good locations tend to be more expensive. Later, when you give it a new house with only the features (no price), it predicts a price based on those learned patterns.
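Here is a minimal sketch of the house price idea, assuming a single feature (size) and four invented sales. It fits a straight line with ordinary least squares; real models use many more features, and the names `sizes`, `prices`, and `fit_line` are hypothetical.

```python
# Toy data: house size in square feet and sale price in dollars (invented).
sizes = [1000, 1500, 2000, 2500]
prices = [200_000, 300_000, 400_000, 500_000]

def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

slope, intercept = fit_line(sizes, prices)

# Predict the price of an unseen 1,800 sq ft house (features only, no label).
predicted = slope * 1800 + intercept
print(round(predicted))  # 360000
```

The fitted `slope` and `intercept` are the "mathematical mapping function" from the steps above: once learned, they turn any new size into a predicted price.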

Two Main Types of Supervised Learning Tasks:

| Task Type | What It Predicts | Example |
|---|---|---|
| Classification | A category or class | “Is this email spam? Yes/No” |
| Regression | A number or value | “What is the house price? $450,000” |

Simple Memory Trick: Supervised = Teacher is super-vising (watching over) the learning process.

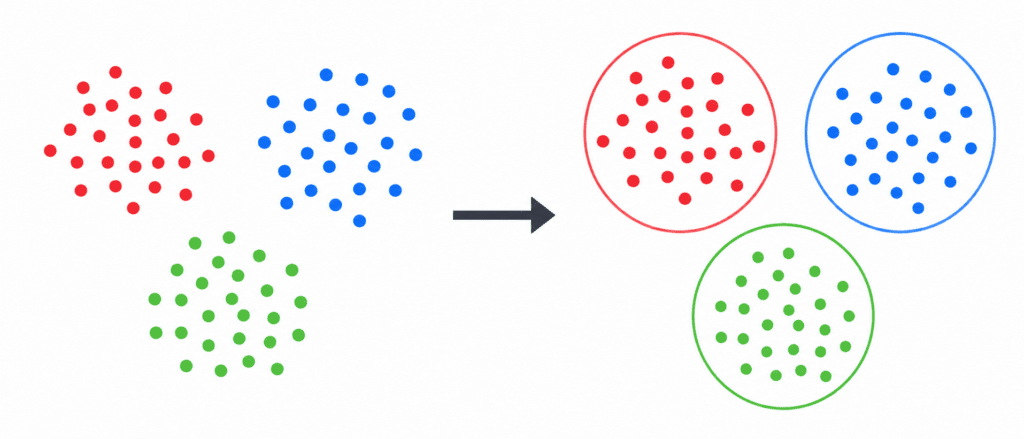

2. Unsupervised Learning #

The Core Idea: The model learns from unlabeled data. No correct answers are provided. The model must find patterns, structures, or groups entirely on its own.

How It Works Step by Step:

- You collect a dataset that has only input features (no output labels)

- You feed this unlabeled data to the model

- The model starts looking for hidden structures

- It might group similar items together (clustering)

- It might find unusual items that don’t fit (anomaly detection)

- It might simplify complex data (dimensionality reduction)

- The model never knows if it is “right” because there is no teacher to check
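The clustering step can be sketched with a bare-bones k-means loop. This is a simplified illustration under stated assumptions: the six points are invented (think of them as two made-up behavior scores per user), the starting centroids are just the first `k` points, and the function name `kmeans` is hypothetical.

```python
# Unlabeled data: each point is (feature_1, feature_2). Invented values.
points = [(1, 1), (9, 9), (1, 2), (2, 1), (8, 9), (9, 8)]

def kmeans(points, k, iterations=10):
    """Group points into k clusters by repeatedly re-centering each group."""
    centroids = points[:k]  # naive initialization: the first k points
    clusters = []
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            distances = [(p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2 for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            (sum(p[0] for p in cl) / len(cl), sum(p[1] for p in cl) / len(cl))
            if cl else centroids[i]
            for i, cl in enumerate(clusters)
        ]
    return centroids, clusters

centroids, clusters = kmeans(points, k=2)
print(clusters[0])  # [(1, 1), (1, 2), (2, 1)]
print(clusters[1])  # [(9, 9), (8, 9), (9, 8)]
```

Notice that no label appears anywhere. The algorithm only knows which points sit close together; what each group means is left for a human to interpret.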

Real-World Example You See Every Day:

Customer segmentation on Amazon or Netflix. These companies do NOT have labels telling them “This user belongs to Group A.” Instead, their unsupervised models analyze millions of user behaviors. The model notices patterns. It might discover:

- Group 1: Users who watch romantic comedies at night

- Group 2: Users who buy baby products on weekends

- Group 3: Users who search for action movies on Friday nights

The company can then use these automatically discovered groups to recommend products or shows. No human labeled any user. The model found these patterns on its own.

Another Real Example:

News grouping on Google News. Every day, millions of news articles are published. An unsupervised model reads all these articles and groups them by topic automatically. Articles about sports go together. Articles about politics go together. Articles about technology go together. The model does this without any human telling it which article belongs to which category.

Common Unsupervised Learning Tasks:

| Task | What It Does | Real Example |
|---|---|---|
| Clustering | Groups similar items together | Customer segmentation |
| Anomaly Detection | Finds unusual patterns | Credit card fraud detection |
| Dimensionality Reduction | Simplifies complex data | Image compression |
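To make anomaly detection concrete, here is a small sketch that flags transaction amounts far from the average. It assumes invented amounts and a cutoff of 2 standard deviations, which is a common rule of thumb rather than a fixed standard. Real fraud systems use much richer signals.

```python
import statistics

# Unlabeled transaction amounts in dollars (invented). Nothing is marked as fraud.
amounts = [25, 30, 22, 28, 31, 27, 950, 26]

mean = statistics.mean(amounts)
stdev = statistics.pstdev(amounts)  # population standard deviation

# Flag any amount more than 2 standard deviations away from the mean.
anomalies = [a for a in amounts if abs(a - mean) / stdev > 2]
print(anomalies)  # [950]
```

One caveat of this simple approach: a large outlier also inflates the mean and standard deviation, so methods based on the median are often more robust.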

Simple Memory Trick: Unsupervised = No supervisor watching. The model has no teacher and must find structure on its own.

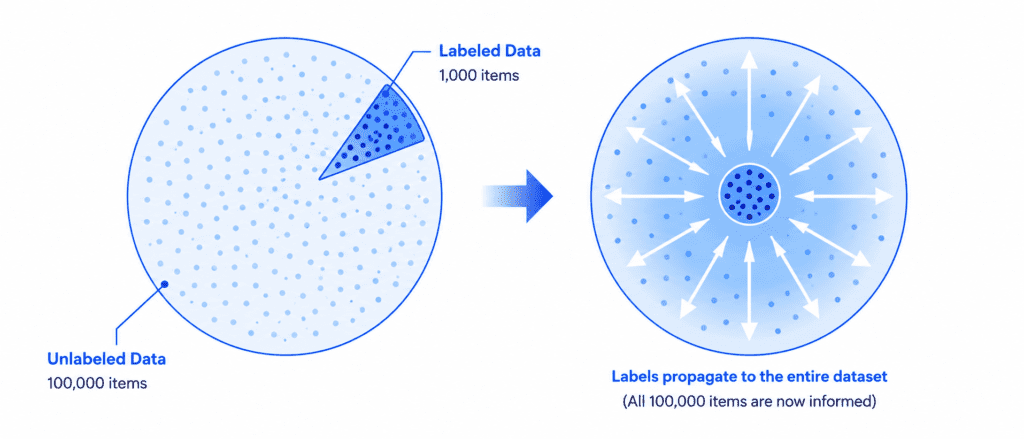

3. Semi-supervised Learning #

The Core Idea: A mix of supervised and unsupervised learning. You have a small amount of labeled data and a large amount of unlabeled data.

Why This Exists:

Labeling data is expensive and time-consuming. If you need 1 million labeled images to train a model, you might pay a team of human labelers thousands of dollars. But if you can train a model using only 10,000 labeled images plus 990,000 unlabeled images, you save massive amounts of money and time.

How It Works Step by Step:

- First, the model uses the small labeled dataset to understand the basics

- Then, the model applies unsupervised learning on the large unlabeled dataset

- It creates clusters or groups within the unlabeled data

- It uses the labeled examples to “name” those clusters

- The result: Almost all data becomes effectively labeled with very little human effort
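The steps above can be sketched as "cluster first, then let the few labels name the clusters." This is one simple way to do semi-supervised learning, not the only one. The data is invented (one number per example), and the names `cluster_1d`, `nearest`, and `pseudo_labels` are hypothetical.

```python
# Eight unlabeled examples, each summarized by a single invented feature value.
unlabeled = [1.0, 1.2, 0.9, 5.0, 5.2, 4.8, 1.1, 5.1]

# Only two of them come with a human-provided label.
labeled = [(1.0, "cat"), (5.0, "dog")]

def nearest(value, centers):
    """Index of the center closest to value."""
    return min(range(len(centers)), key=lambda i: abs(value - centers[i]))

def cluster_1d(values, centers, iterations=10):
    """Tiny 1-D k-means: returns the final cluster centers."""
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for v in values:
            groups[nearest(v, centers)].append(v)
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers

# Unsupervised step: find the groups without looking at any label.
centers = cluster_1d(unlabeled, centers=[0.0, 6.0])

# Use the few labeled examples to "name" each cluster.
cluster_names = {nearest(value, centers): name for value, name in labeled}

# Propagate each cluster's name to every example inside it.
pseudo_labels = {v: cluster_names[nearest(v, centers)] for v in unlabeled}
print(pseudo_labels[1.2], pseudo_labels[4.8])  # cat dog
```

Two human labels ended up covering all eight examples. The propagated labels are only as good as the clusters, which is why real systems usually keep a confidence check in the loop.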

Real-World Example You See Every Day:

Google Photos face tagging. Here is what happens behind the scenes:

- Step 1 (Unsupervised): Google Photos scans all your photos and finds all faces. It groups similar faces together automatically. It does not know who these people are yet.

- Step 2 (Semi-supervised): You click on one group and type the label “Sarah.”

- Step 3: The algorithm immediately applies the label “Sarah” to every single photo in that group, possibly hundreds of photos.

- Result: You typed one label. The model applied it to hundreds of photos for you.

Another Real Example:

Medical image analysis. Hospitals have millions of X-ray and MRI scans (unlabeled) but only a few thousand that have been reviewed by doctors (labeled). Semi-supervised learning allows the model to learn from the massive pool of unlabeled scans while using the doctor-reviewed scans as guidance.

The Big Advantage:

| Approach | Labels Needed | Cost | Accuracy |
|---|---|---|---|
| Pure Supervised | 1,000,000 | Very High | Very High |
| Semi-supervised | 10,000 | Low | Almost as High |

4. Self-supervised Learning #

The Core Idea: The model creates its own labels from the unlabeled data itself. You do not provide labels. The data generates the labels automatically.

How It Works Step by Step:

- You take unlabeled data (like text, images, or videos)

- You hide or “mask” a portion of that data

- You train the model to predict the hidden portion

- The hidden portion becomes the “label” automatically

- The model learns deep patterns about the data structure

- Later, you can fine-tune this model for your specific task

Real-World Example:

Large Language Models like ChatGPT, Gemini, and Claude. These models are first trained using self-supervised learning on massive amounts of text from the internet. Here is how it works:

- You take a sentence: “The capital of France is Paris”

- You mask a word: “The capital of France is ____”

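- You train the model to predict the missing word “Paris” (GPT-style models always predict the next word, while BERT-style models predict words masked anywhere in the sentence)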

- The model reads billions of sentences and learns grammar, facts, reasoning, and context

- All of this happens without any human providing labels
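Here is a tiny sketch of how raw text turns into training pairs with no human labeling. It assumes a three-sentence invented corpus and a deliberately simple "model" that counts which word follows each two-word context. Real language models use neural networks instead, and the names `make_pairs` and `predict_next` are hypothetical.

```python
from collections import Counter, defaultdict

# Raw, unlabeled text (invented). No human annotated anything here.
corpus = [
    "the capital of france is paris",
    "the capital of italy is rome",
    "paris is the capital of france",
]

def make_pairs(sentence):
    """Hide each next word in turn: returns (visible context, hidden word) pairs."""
    words = sentence.split()
    return [(tuple(words[:i]), words[i]) for i in range(1, len(words))]

# The labels come from the data itself.
pairs = [pair for sentence in corpus for pair in make_pairs(sentence)]

# Toy model: count which word follows each context of up to two words.
model = defaultdict(Counter)
for context, target in pairs:
    model[context[-2:]][target] += 1

def predict_next(text):
    """Return the most frequent next word for the last two words, or None if unseen."""
    context = tuple(text.split()[-2:])
    if not model[context]:
        return None
    return model[context].most_common(1)[0][0]

print(predict_next("the capital of france is"))  # paris
print(predict_next("the capital of italy is"))   # rome
```

Every pair in `pairs` was generated automatically by hiding part of a sentence, which is exactly what makes the approach scale to huge text collections.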

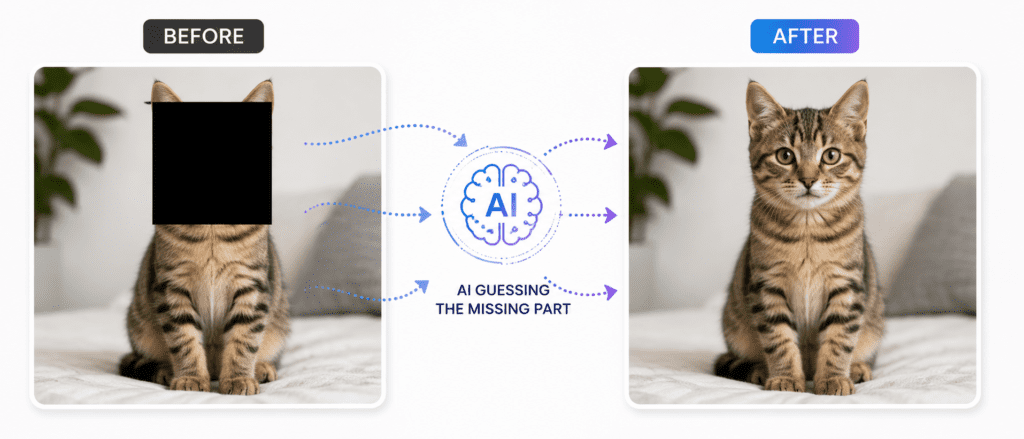

Another Real Example:

Image restoration. You take a photo of a cat. You cover 50% of the photo with a gray square. You train the model to restore the original photo. The model learns what cats look like, what backgrounds look like, and how to fill in missing information. After this self-supervised training, the model can be fine-tuned for tasks like object detection or image segmentation.
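The masking step for images can be sketched the same way. This example assumes a tiny 4x4 grid of numbers standing in for pixel values, and the function name `mask_patch` is hypothetical. It only shows how the training pair is built, not how a model is trained on it.

```python
def mask_patch(image, top, left, size, fill=0):
    """Return (masked copy, hidden patch). The hidden patch is the training target."""
    masked = [row[:] for row in image]  # copy so the original stays intact
    target = [row[left:left + size] for row in image[top:top + size]]
    for r in range(top, top + size):
        for c in range(left, left + size):
            masked[r][c] = fill
    return masked, target

# A 4x4 "image" of invented pixel values.
image = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]

masked, target = mask_patch(image, top=1, left=1, size=2)
print(masked)  # [[1, 2, 3, 4], [5, 0, 0, 8], [9, 0, 0, 12], [13, 14, 15, 16]]
print(target)  # [[6, 7], [10, 11]]
```

The model would receive `masked` as input and be scored on how well it reconstructs `target`. No human had to describe what is in the picture.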

Why This Is Powerful:

| Traditional Supervised | Self-supervised |
|---|---|
| Needs humans to label data | Data creates its own labels |
| Labeling is expensive and slow | Labels are free and generated automatically (training still costs compute) |
| Limited by the labeling budget | Can use internet-scale data |

Simple Memory Trick: Self-supervised = The model gives itself homework and checks its own answers.

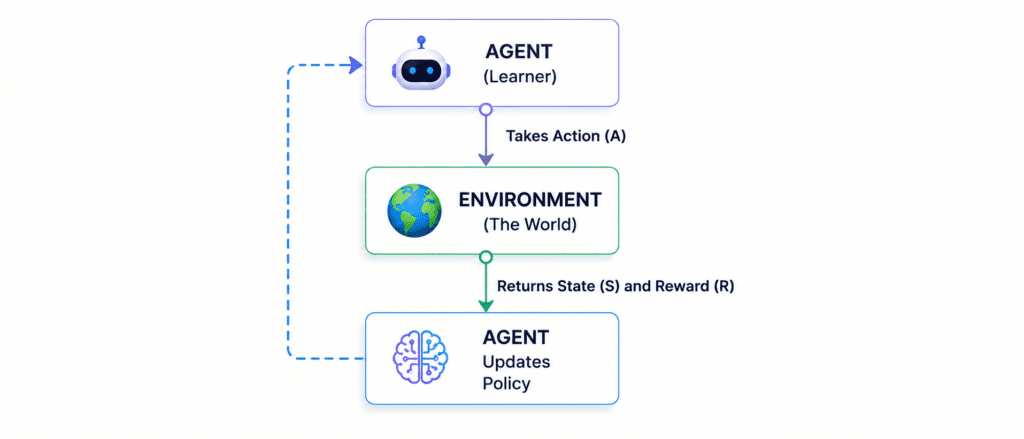

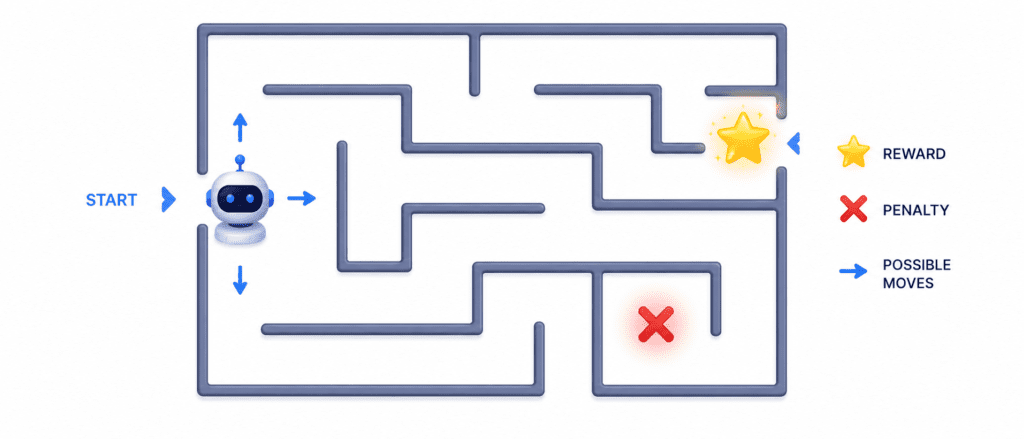

5. Reinforcement Learning #

The Core Idea: An Agent learns by interacting with an Environment. It takes actions. Good actions receive Rewards. Bad actions receive penalties. The agent learns through trial and error.

How It Works Step by Step:

- The agent observes the current state of the environment

- The agent takes an action based on its current policy (strategy)

- The environment changes based on that action

- The agent receives a reward (positive or negative)

- The agent updates its policy to get more rewards in the future

- Repeat this process millions of times

- Eventually, the agent learns the optimal strategy
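The loop above can be sketched with tabular Q-learning, one common reinforcement learning algorithm chosen here purely for illustration. The environment is an invented five-cell corridor where reaching the right end pays +1. For simplicity the agent explores by acting randomly while it learns, and the names `step` and `train` are hypothetical.

```python
import random

N_STATES = 5                 # states 0..4; state 4 is the goal
ACTIONS = ["left", "right"]

def step(state, action):
    """Environment: move along the corridor; reaching the goal pays +1."""
    next_state = max(0, state - 1) if action == "left" else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done

def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Learn a table of action values (Q-values) by trial and error."""
    rng = random.Random(seed)
    q = [{a: 0.0 for a in ACTIONS} for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)  # explore by acting randomly
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[next_state].values())
            # Nudge the estimate toward reward + discounted future value.
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = next_state
    return q

q_table = train()

# The learned policy: in each non-goal state, pick the action with the highest value.
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)
```

After training, the policy should settle on moving right in every state, even though nobody told the agent where the goal is. It only ever saw rewards.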

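Visual Flow of Reinforcement Learning:

Agent observes State → Agent takes Action → Environment changes → Agent receives Reward → Agent updates Policy → repeat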

Real-World Example You Have Heard About:

AlphaGo vs Lee Sedol (2016). AlphaGo is an AI agent. The environment is the Go board. The actions are placing stones on the board. The reward is winning the game. AlphaGo first learned from records of human expert games, then played a very large number of games against itself. It lost many times. It learned from each loss. In March 2016, it beat Lee Sedol, one of the world’s top players, 4 games to 1. Its successor, AlphaGo Zero, went further and learned entirely from self-play, with no human game data at all.

Another Real Example:

Self-driving cars. The agent is the car’s AI. The environment is the road. Actions include accelerating, braking, and steering. Rewards include:

- Staying in the lane: +1 point

- Avoiding collision: +10 points

- Following traffic rules: +0.5 points

- Crashing: -100 points (big penalty)

Through millions of simulated driving hours, the car learns to drive safely.
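The reward scheme above can be written as a small function. This is only a sketch using the point values from the list. The function name `step_reward` and the choice that a crash overrides every other reward are assumptions made for illustration.

```python
def step_reward(in_lane, collided, followed_rules):
    """Reward for one time step of driving, using the point values listed above."""
    if collided:
        return -100.0  # assumption: a crash overrides all other rewards
    reward = 10.0      # avoided a collision
    if in_lane:
        reward += 1.0
    if followed_rules:
        reward += 0.5
    return reward

print(step_reward(in_lane=True, collided=False, followed_rules=True))    # 11.5
print(step_reward(in_lane=False, collided=False, followed_rules=False))  # 10.0
print(step_reward(in_lane=True, collided=True, followed_rules=True))     # -100.0
```

Designing this function is where the human influence sits in reinforcement learning: the agent optimizes whatever the rewards encourage, so poorly chosen values can teach the wrong behavior.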

Key Terms to Remember:

| Term | Meaning |
|---|---|
| Agent | The learner or decision maker |
| Environment | The world the agent interacts with |
| Action | A move the agent can make |
| State | Current situation of the environment |
| Reward | Feedback signal (positive or negative) |
| Policy | The agent’s strategy for choosing actions |

Simple Memory Trick: Reinforcement = The model is reinforced when it does something good, like training a dog with treats.

Quick Reference Table: All 5 Supervision Types #

| Type | Labeled Data? | Human Help | Best For | Real Example |
|---|---|---|---|---|
| Supervised | ✅ Yes | High | Classification, Regression | Spam Filter |
| Unsupervised | ❌ No | None | Finding hidden patterns | Customer Groups |
| Semi-supervised | ⚠️ A Little | Medium | When labels are expensive | Google Photos |
| Self-supervised | 🏷️ Self-made | None (during pretraining) | Language Models, Images | ChatGPT |
| Reinforcement | 🎮 Rewards only | None | Game playing, Robotics | AlphaGo, Self-driving cars |