“Just because you have a hammer does not mean everything is a nail. Machine learning is a powerful tool. But using it for the wrong problem is like using a rocket launcher to open a soda bottle. It is expensive, complicated, and often destructive.”

The Golden Question #

Before you start any ML project, ask yourself one question:

“Can a simple rule or existing solution solve this problem?”

If the answer is yes, do not use ML. Save your time, money, and sanity.

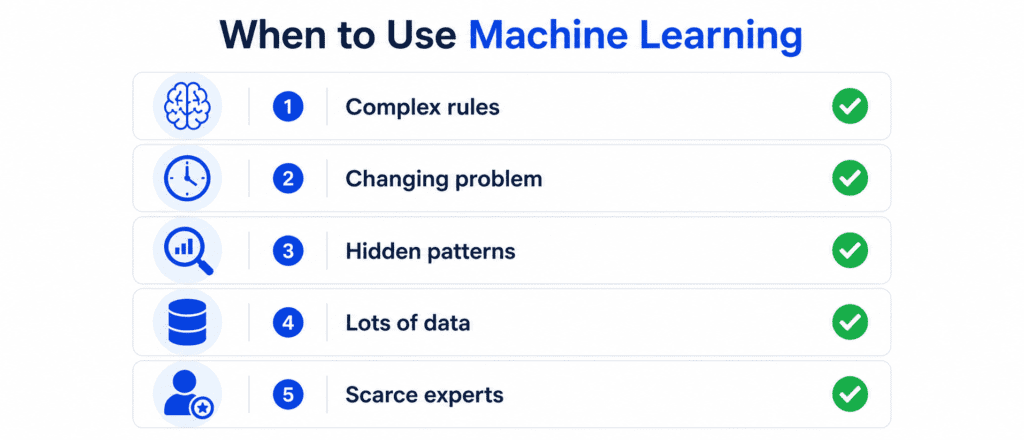

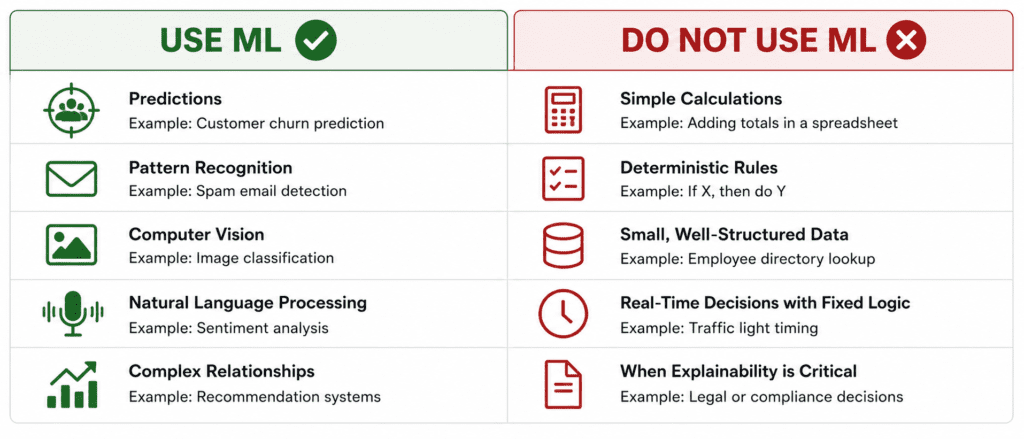

Part 1: When to Use Machine Learning #

Machine learning shines in specific situations. Here are the signs that ML is the right choice.

1. The Problem is Too Complex for Traditional Rules #

The Sign: You cannot write down a clear set of step-by-step rules that works for all cases.

Example: Recognizing a cat in a photo. Try to write rules: “If it has whiskers and pointy ears, it is a cat.” But dogs have whiskers and pointy ears too. “If it says meow, it is a cat.” But cats do not always meow in photos. You cannot write enough rules. ML learns the patterns from examples.

Another Example: Speech recognition. How do you write rules to convert sound waves to text? Different accents. Different speeds. Background noise. It is impossible. ML solves this.

2. The Problem Changes Over Time #

The Sign: The environment evolves. What worked last year may not work today.

Example: Fraud detection. Fraudsters change their tactics every week. A rule-based system (“if transaction > $10,000, flag as fraud”) becomes useless as soon as fraudsters start doing $9,999 transactions. ML models can adapt and learn new patterns.
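
To make the weakness of a fixed rule concrete, here is a minimal sketch. The function name and the transaction amounts are made up for illustration; only the $10,000 threshold comes from the example above.

```python
def flag_by_fixed_threshold(amount: float, threshold: float = 10_000) -> bool:
    """Static rule from the example: flag only transactions above the threshold."""
    return amount > threshold


# Hypothetical transactions: one large transfer, then several kept just under the limit.
transactions = [12_000, 9_999, 9_999, 9_999]
flags = [flag_by_fixed_threshold(t) for t in transactions]
print(flags)  # [True, False, False, False]
```

The rule catches the first transaction and misses the rest. Someone has to notice the new tactic and rewrite the rule by hand, which is the gap a retrained model is meant to close.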

Another Example: Recommendation systems. User preferences change. What was popular last month is not popular today. ML models update continuously.

3. You Need to Find Hidden Patterns #

The Sign: You have data, but you do not know what patterns exist. You want the machine to discover them for you.

Example: Customer segmentation. You have millions of customer records. You do not know how to group them. An unsupervised ML model can find natural clusters in the data automatically.

Another Example: Anomaly detection in manufacturing. You have sensor data from machines. You do not know what “normal” looks like for all combinations of settings. ML learns the normal pattern and flags anything unusual.
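
The "learn normal, flag unusual" idea can be sketched with a very simple statistical stand-in: estimate a normal range from past readings and flag anything outside it. This is not a full ML model, the sensor values are invented, and the three-standard-deviation cutoff is an assumption chosen for illustration.

```python
from statistics import mean, stdev


def fit_normal_range(readings, k=3.0):
    """Learn a 'normal' band as mean +/- k standard deviations of past readings."""
    mu = mean(readings)
    sigma = stdev(readings)
    return mu - k * sigma, mu + k * sigma


def is_anomaly(value, bounds):
    """Flag a reading that falls outside the learned band."""
    low, high = bounds
    return value < low or value > high


# Hypothetical temperature readings from a machine running normally.
normal_temps = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 70.1, 69.7]
bounds = fit_normal_range(normal_temps)

print(is_anomaly(70.0, bounds))  # False
print(is_anomaly(75.0, bounds))  # True
```

Real anomaly detectors handle many sensors and settings at once, but the workflow is the same: fit on normal data, then score new readings against what was learned.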

4. You Have Lots of Data and Lots of Computing Power #

The Sign: Data is abundant. Labels are available (or cheap to create). You have the budget for computing resources.

Example: Image classification at Google Photos. They have billions of labeled images (users tagging their own photos). They have massive computing infrastructure. ML is the perfect fit.

Another Example: Language translation. There are billions of translated sentences online (parallel corpora). Companies like Google and DeepMind have TPU clusters. ML dominates this task.

5. Human Experts Are Scarce or Expensive #

The Sign: The task requires human judgment, but you cannot afford enough humans.

Example: Medical diagnosis in rural areas. There are not enough doctors. An ML model can provide preliminary screening, flagging high-risk cases for the few available doctors.

Another Example: Legal document review. Reviewing thousands of pages for specific clauses is tedious and expensive. ML can highlight relevant sections for human review.

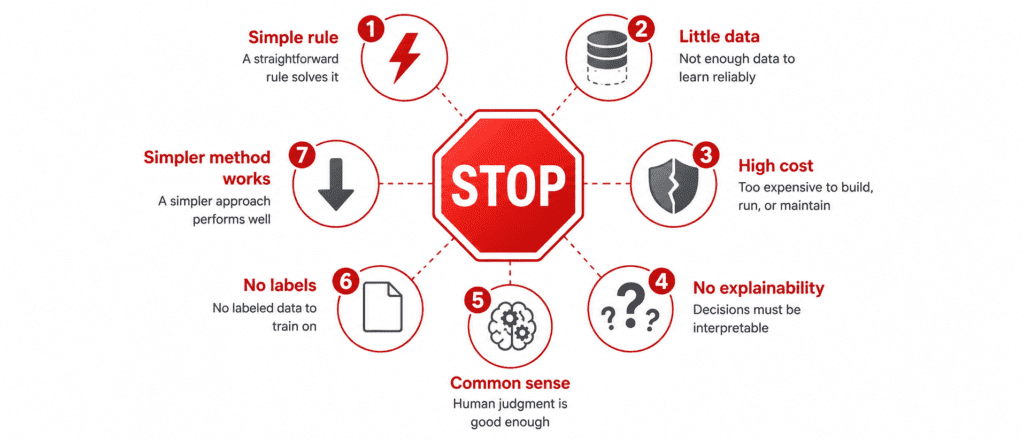

Part 2: When NOT to Use Machine Learning #

This is equally important. Many projects fail because people use ML when they should not.

1. You Can Solve It with a Simple Rule #

The Sign: An if-else statement or a simple calculation works perfectly.

Example: Calculating tax based on income. “If income < $10,000, tax = 0. If income is between $10,000 and $50,000, tax = 10%.” This is a simple rule. Do not train a neural network for this.

Another Example: Validating a form. “If email contains @ and ., it is valid.” Simple rule. No ML needed.

The Cost of Using ML Here: You will spend weeks collecting data, training models, and debugging. The simple rule works instantly, behaves the same way every time, and anyone can understand it.
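
Both examples fit in a few lines of ordinary code. The sketch below covers only the two tax brackets given in the example. Anything above $50,000 is deliberately left undefined rather than guessed, and the function names are illustrative.

```python
def tax_rate(income: float) -> float:
    """Return the tax rate for the two brackets described in the example."""
    if income < 0:
        raise ValueError("income cannot be negative")
    if income < 10_000:
        return 0.0
    if income <= 50_000:
        return 0.10
    # Higher brackets are not specified in the example, so they are not invented here.
    raise ValueError("no bracket defined above $50,000 in this sketch")


def looks_like_email(text: str) -> bool:
    """The simple form rule from the example: contains both '@' and '.'."""
    return "@" in text and "." in text


print(tax_rate(5_000))              # 0.0
print(tax_rate(20_000))             # 0.1
print(looks_like_email("a@b.com"))  # True
```

Every branch is readable, testable, and explainable to a non-engineer, which is hard to say about a trained model.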

2. You Have Very Little Data #

The Sign: You have fewer than a few hundred examples per category.

Example: You want to classify rare diseases. You have only 50 cases of the disease. An ML model is unlikely to learn anything useful from so few examples. It will tend to memorize the 50 cases and fail on new ones.

The Solution: Do not use ML. Consult human experts. Or collect more data first.

General Rule of Thumb:

| Data Size | ML Recommended? |
|---|---|
| < 100 examples | ❌ No (not enough) |
| 100 – 1,000 examples | ⚠️ Maybe (with simple models, careful validation) |
| 1,000 – 100,000 examples | ✅ Yes (for most problems) |
| > 100,000 examples | ✅ Yes (deep learning becomes viable) |

3. The Cost of Failure is Extremely High #

The Sign: A wrong prediction could cause death, massive financial loss, or serious harm.

Example: Self-driving car decisions. If the car makes a mistake, people die. ML models are not perfect. They make errors. In safety-critical applications, you need multiple layers of verification, redundancy, and human oversight.

Another Example: Nuclear reactor control. You cannot have a model occasionally deciding to increase temperature because it “thinks” it is safe. Traditional control systems are deterministic and verifiable. ML is probabilistic.

Important Caveat: ML can still be used as an assistant or warning system. But it should not be the sole decision maker in high-stakes situations.

4. You Need Perfect Explainability (Right Now) #

The Sign: Regulations or stakeholders require you to explain exactly why every decision was made.

Example: Loan approval. Banks in many countries are required to explain why a loan was rejected. “Your income was too low” is acceptable. “A complex neural network with 1 million parameters said no” is not acceptable.

Another Example: Medical diagnosis. A doctor will not trust a model that says “cancer” without explaining why. Simple models (decision trees, linear regression) can provide explanations. Deep learning models are black boxes.

The Exception: Explainable AI (XAI) is an active research field. Techniques like LIME and SHAP can explain predictions. But they are imperfect. If you need perfect explainability today, stick with simple models or no ML.

5. The Problem Requires Common Sense or Physical Reasoning #

The Sign: A five-year-old human can solve it easily, but it requires understanding of the physical world.

Example: “Will this tower of blocks fall if I remove the bottom block?” Humans know the answer instantly. ML models struggle because they do not understand physics, gravity, or common sense.

Another Example: “Is this refrigerator door properly closed?” You can see a small gap. ML models trained on images might miss it because they focus on the overall picture, not the small gap.

Current State: This is changing. Large language models and multimodal models are getting better. But as of 2024-2025, common-sense reasoning is still a challenge for ML.

6. You Have No Labeled Data and Cannot Get It #

The Sign: You have raw data, but no one can tell you what the correct output should be.

Example: Predicting customer churn. You have customer data. But you do not know which customers actually left (churned) because you never tracked it. You cannot train a supervised model.

Your Options:

- Use unsupervised learning (clustering, anomaly detection) if that solves your problem

- Collect the labels (may be expensive but necessary)

- Do not use ML

7. A Simpler Statistical Method Works Better #

The Sign: The relationship in your data is simple and linear.

Example: Predicting ice cream sales based on temperature. A simple linear regression (y = mx + b) works perfectly. You do not need a deep neural network with 5 hidden layers.
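
A line of the form y = mx + b can be fitted with ordinary least squares in a few lines of plain Python. The temperature and sales figures below are invented and perfectly linear, purely to keep the arithmetic easy to follow.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = m*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    m = sxy / sxx
    b = mean_y - m * mean_x
    return m, b


# Hypothetical data: daily temperature (°C) and ice creams sold.
temps_c = [20, 25, 30, 35]
sales = [250, 300, 350, 400]

m, b = fit_line(temps_c, sales)
predicted = m * 28 + b  # forecast for a 28 °C day
print(m, b, predicted)  # 10.0 50.0 330.0
```

The fitted slope and intercept are directly interpretable: each extra degree adds about m sales. A deep network would give no such summary.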

Another Example: A/B testing results. You do not need ML to tell you which version of a website had a higher click-through rate. A simple t-test works.
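
As a sketch of how little is needed here, the code below compares two click-through rates. Because each view is a click or no click, it uses a two-proportion z-test, a close relative of the t-test mentioned above that is commonly used for rates. The click and view counts are invented.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference between two click-through rates.

    Assumes the pooled rate is strictly between 0 and 1.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: version A got 120 clicks, version B got 150, from 1,000 views each.
z, p_value = two_proportion_z_test(120, 1000, 150, 1000)
print(round(z, 2), round(p_value, 3))
```

A small p-value suggests the difference in click-through rate is unlikely to be chance alone. No training data, model, or GPU is involved.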

The Principle: Start simple. Only increase complexity when simple methods fail.

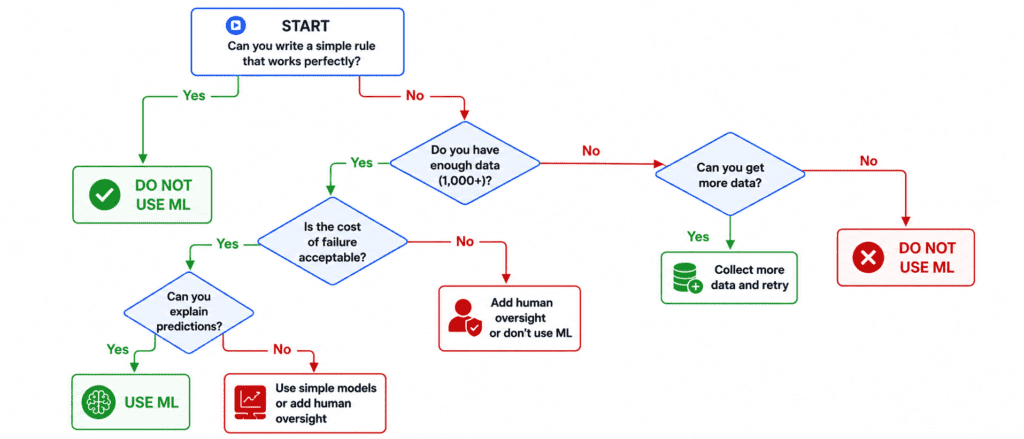

Part 3: Decision Flowchart #

Use this flowchart to decide whether to use ML for your problem.
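
The same decision logic can be written as a short function. This is a rough sketch assembled from the signs discussed above, not a definitive procedure. The field names, the order of the checks, and the wording of the results are all assumptions, and the data thresholds are taken from the rule-of-thumb table.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    """Answers to the questions raised in the sections above (illustrative names)."""
    simple_rule_works: bool
    n_examples: int
    labels_available: bool
    failure_is_catastrophic: bool
    needs_full_explainability: bool


def recommend(p: Problem) -> str:
    """Walk through the checks in order and return a coarse recommendation."""
    if p.simple_rule_works:
        return "no: use the simple rule"
    if p.n_examples < 100:
        return "no: not enough data"
    if not p.labels_available:
        return "maybe: only unsupervised learning, or collect labels first"
    if p.failure_is_catastrophic:
        return "caution: ML as an assistant with human oversight"
    if p.needs_full_explainability:
        return "caution: simple, explainable models only"
    if p.n_examples < 1_000:
        return "maybe: simple models with careful validation"
    return "yes: ML is a reasonable fit"


tax_calculation = Problem(True, 0, False, False, True)
movie_recommendations = Problem(False, 1_000_000, True, False, False)

print(recommend(tax_calculation))        # no: use the simple rule
print(recommend(movie_recommendations))  # yes: ML is a reasonable fit
```

The first check is the Golden Question, so a problem with a workable simple rule never reaches the ML branches at all.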

Part 4: Cost-Benefit Analysis of Using ML #

Before starting any ML project, do this simple calculation.

The Costs of ML #

| Cost Category | What It Includes | Typical Range |
|---|---|---|
| Data Collection | Buying data, scraping, sensors | $0 – $100,000+ |
| Data Labeling | Human annotators, tools | $0.01 – $10 per label |
| Compute Resources | GPUs, cloud instances, electricity | $100 – $10,000+ per month |
| Engineering Time | Data scientists, ML engineers | $50 – $500 per hour |
| Infrastructure | Serving, monitoring, storage | $100 – $5,000 per month |
| Maintenance | Retraining, updates, fixes | 20 – 50% of initial cost per year |

The Benefits of ML #

| Benefit Category | What It Includes | How to Measure |
|---|---|---|
| Increased Revenue | Better recommendations, targeted ads | Dollars earned |
| Cost Reduction | Automation, fewer manual reviews | Dollars saved |
| Time Saved | Faster decisions, less human effort | Hours per week |
| Improved Accuracy | Fewer errors than humans | Error rate reduction |
| Scale | Handle millions of requests | Requests per second |
| New Capabilities | Things humans cannot do | Strategic value |

The Simple Formula #

Only use ML if:

(Expected Benefits) > (Total Costs + Risk of Failure)

Example Calculation:

You want to build a spam filter for a small company with 100 employees.

| Cost Item | Amount |
|---|---|
| Data labeling (10,000 emails) | $500 |
| Engineer time (2 weeks) | $8,000 |
| Compute and serving (per year) | $1,200 |
| Total First Year Cost | $9,700 |

| Benefit Item | Amount |
|---|---|
| Time saved for 100 employees @ $30/hr | $60,000 |
| Reduced risk of phishing attacks | $10,000 |
| Total Annual Benefit | $70,000 |

Verdict: Use ML. Benefits far outweigh costs.

Counter Example:

You want to build a model to predict which of your 50 sales emails will get a reply.

| Cost Item | Amount |
|---|---|
| Data collection (500 emails) | $0 (you have them) |
| Data labeling (500 emails) | $500 |
| Engineer time (1 week) | $4,000 |
| Total Cost | $4,500 |

| Benefit Item | Amount |
|---|---|
| Time saved for 2 salespeople @ $50/hr | $1,000 per year |
| Total Annual Benefit | $1,000 |

Verdict: Do NOT use ML. You will lose $3,500 in the first year. A simple rule (if email contains “unsubscribe”, ignore) works almost as well.
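
The arithmetic behind both verdicts can be checked with a small helper. The dollar figures are the ones from the two examples above. The function name and the optional `risk_cost` parameter (a stand-in for the "Risk of Failure" term, left at zero because the examples do not quantify it) are assumptions.

```python
def net_first_year(benefits: dict, costs: dict, risk_cost: float = 0) -> float:
    """Expected benefits minus total costs and an optional allowance for risk."""
    return sum(benefits.values()) - sum(costs.values()) - risk_cost


# Spam filter for a 100-person company.
spam_costs = {"labeling": 500, "engineer_time": 8_000, "compute_and_serving": 1_200}
spam_benefits = {"time_saved": 60_000, "reduced_phishing_risk": 10_000}

# Reply predictor for sales emails.
sales_costs = {"data_collection": 0, "labeling": 500, "engineer_time": 4_000}
sales_benefits = {"time_saved": 1_000}

print(net_first_year(spam_benefits, spam_costs))    # 60300
print(net_first_year(sales_benefits, sales_costs))  # -3500
```

A positive result supports using ML. A negative one means the project costs more than it returns in its first year.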

Part 5: Real-World Examples – ML vs No ML #

Example 1: Predicting House Prices #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | No. Too many factors interact |
| Enough data? | Yes (thousands of sales records) |
| Cost of failure? | Low (estimate off by $10,000) |
| Need explainability? | Not really (agents just need a number) |

Verdict: ✅ USE ML

Example 2: Calculating Employee Salary Based on Years of Experience #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | Yes. Salary = Base + (Years × Increment) |
| Enough data? | Irrelevant |
| Cost of failure? | Low |
| Need explainability? | Yes (employees ask “why this salary?”) |

Verdict: ❌ DO NOT USE ML. Use a simple formula or lookup table.

Example 3: Detecting Credit Card Fraud #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | No. Fraud patterns are complex and changing |
| Enough data? | Yes (millions of transactions) |
| Cost of failure? | Medium (false positives annoy customers) |
| Need explainability? | Not really (bank can investigate after flag) |

Verdict: ✅ USE ML

Example 4: Approving or Rejecting a Mortgage #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | Yes. Rules exist (income, credit score, debt ratio) |
| Enough data? | Yes |
| Cost of failure? | Very high (rejecting qualified applicants = lost revenue, accepting unqualified = defaults) |
| Need explainability? | Yes (legal requirement) |

Verdict: ⚠️ USE ML WITH CAUTION. Use simple, explainable models (logistic regression, decision trees). Add human review for borderline cases. Do not use black-box deep learning.

Example 5: Recommending Movies on Netflix #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | No. Preferences are personal and complex |
| Enough data? | Yes (billions of viewing events) |
| Cost of failure? | Low (bad recommendation? user watches something else) |
| Need explainability? | No (user just wants good suggestions) |

Verdict: ✅ USE ML

Example 6: Sorting Packages by Size on a Conveyor Belt #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | Yes. If width > X, send to left. If height > Y, send to right. |
| Enough data? | Irrelevant |
| Cost of failure? | Low (wrong chute? manual fix) |

Verdict: ❌ DO NOT USE ML. A simple sensor and if-else logic does the job, runs far faster than a model, and costs very little to maintain.

Example 7: Translating Customer Support Chats #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | No. Language is too complex for rules |
| Enough data? | Yes (millions of translated sentences exist) |
| Cost of failure? | Medium (mistranslation causes confusion) |
| Need explainability? | No (user just wants to understand) |

Verdict: ✅ USE ML

Example 8: Deciding Whether to Send a Marketing Email #

| Factor | Assessment |
|---|---|
| Can you write simple rules? | Yes. If user opted in AND hasn’t unsubscribed AND has been active in last 30 days, send. |
| Enough data? | Irrelevant |
| Cost of failure? | Low (annoying email, lose a subscriber) |

Verdict: ❌ DO NOT USE ML. The rule is simple and does everything you need.

Part 6: Quick Reference Table #

| Scenario | Use ML? | Why |
|---|---|---|
| Image recognition | ✅ Yes | Too complex for rules |
| Speech recognition | ✅ Yes | Too complex for rules |
| Spam detection | ✅ Yes | Patterns change constantly |
| Fraud detection | ✅ Yes | Patterns change constantly |
| Recommendation systems | ✅ Yes | User preferences are complex |
| Language translation | ✅ Yes | Too complex for rules |
| Anomaly detection | ✅ Yes | “Normal” is hard to define |
| Customer segmentation | ✅ Yes | Hidden patterns to discover |
| Tax calculation | ❌ No | Simple rules work |
| Form validation | ❌ No | Simple rules work |
| Sorting by size/weight | ❌ No | Simple rules work |
| A/B test analysis | ❌ No | Statistical test is simpler |
| Salary calculation | ❌ No | Simple formula works |
| Mortgage approval (simple) | ⚠️ Maybe | Use simple, explainable models |
| Self-driving decisions | ⚠️ With caution | High risk, need redundancy |
| Medical diagnosis | ⚠️ As assistant | Human oversight required |

Part 7: Common Mistakes (And How to Avoid Them) #

Mistake 1: ML for Everything #

The Problem: You learned ML. Now every problem looks like an ML problem.

The Fix: Ask yourself “What is the simplest possible solution?” If the simplest solution works, use it. ML is a last resort, not a first resort.

Mistake 2: Ignoring Data Quality #

The Problem: You collect data without checking if it is clean, representative, or sufficient.

The Fix: Spend 80% of your time on data exploration and cleaning. If the data is bad, no ML model will save you.

Mistake 3: Underestimating Maintenance Costs #

The Problem: You think the work ends when the model is deployed.

The Fix: Budget for monitoring, retraining, and updates. ML systems are living systems. They need care.

Mistake 4: Overpromising #

The Problem: You tell stakeholders the model will be 99% accurate. It achieves 85%.

The Fix: Be honest about limitations. ML models make mistakes. Set realistic expectations from day one.

Mistake 5: Ignoring Simple Baselines #

The Problem: You spend weeks building a complex neural network without checking if a simple model works.

The Fix: Always start with the simplest possible baseline. Linear regression. Constant prediction. Random guessing. Only add complexity when the baseline is beaten.
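
A baseline check can be as small as the sketch below. It compares a constant prediction (the training mean) against a candidate model's predictions using mean absolute error. All numbers are invented, and `model_preds` stands in for the output of whatever model you are evaluating.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between true values and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical training and test targets.
y_train = [10, 12, 11, 13, 14]
y_test = [12, 15, 11]

# Baseline: always predict the mean of the training targets.
train_mean = sum(y_train) / len(y_train)
baseline_preds = [train_mean] * len(y_test)

# Hypothetical predictions from the more complex model under evaluation.
model_preds = [12.5, 14.0, 11.5]

baseline_mae = mean_absolute_error(y_test, baseline_preds)
model_mae = mean_absolute_error(y_test, model_preds)

print(f"baseline MAE: {baseline_mae:.2f}, model MAE: {model_mae:.2f}")
print("model beats baseline:", model_mae < baseline_mae)
```

If the complex model cannot clearly beat the constant prediction, the extra complexity is not yet paying for itself.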