“Your model is performing badly. But why? Is it too simple? Too complex? Not enough data? More training needed? Learning curves answer all these questions with just two lines on a graph.”

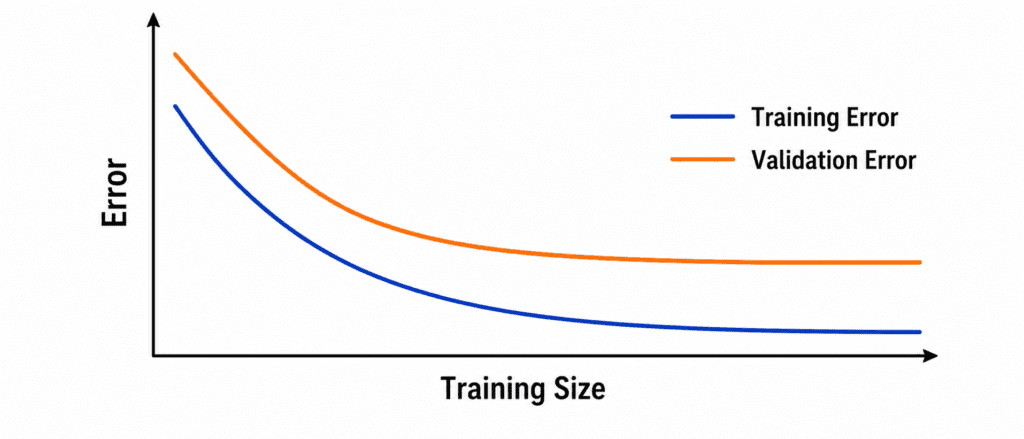

What Are Learning Curves?

A learning curve plots two lines against training experience.

X-axis: Training set size (or number of training iterations)

Y-axis: Error (or accuracy)

Two lines:

- Blue line = Performance on training data

- Orange line = Performance on validation data

That is it. Two lines. Together they reveal most of what is wrong with your model.

The Three Patterns

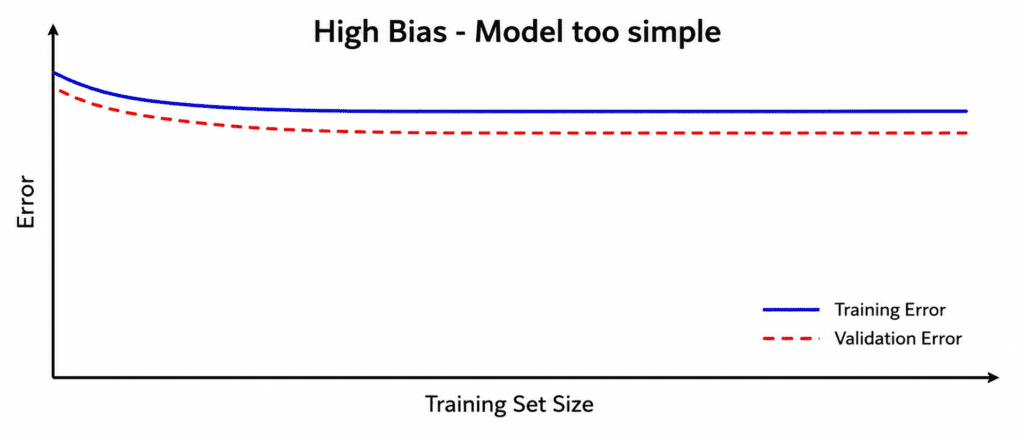

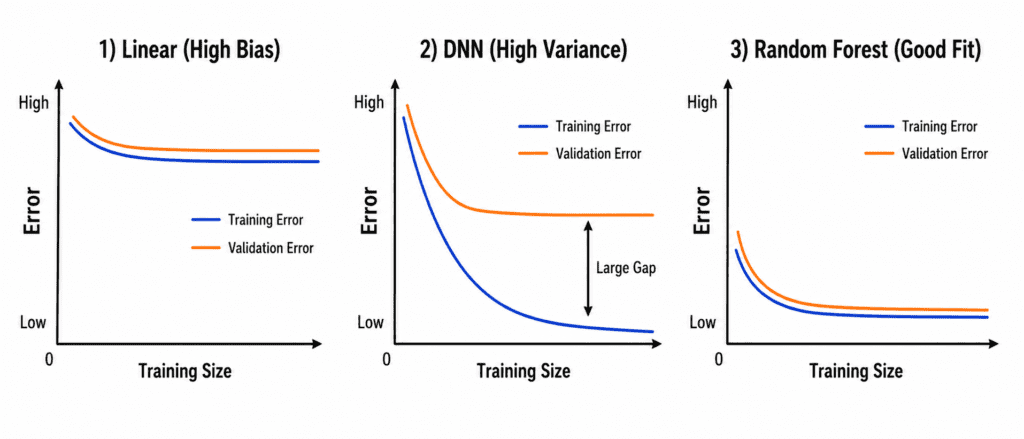

Pattern 1: High Bias (Underfitting)

What you see:

- Training error is high

- Validation error is also high

- Both lines are close to each other

- Neither line reaches good performance

Why this happens:

Model is too simple. Like trying to fit a straight line through a circle. No matter how much data you give, it will never learn.

The fix:

- Use a more complex model

- Add more features

- Reduce regularization

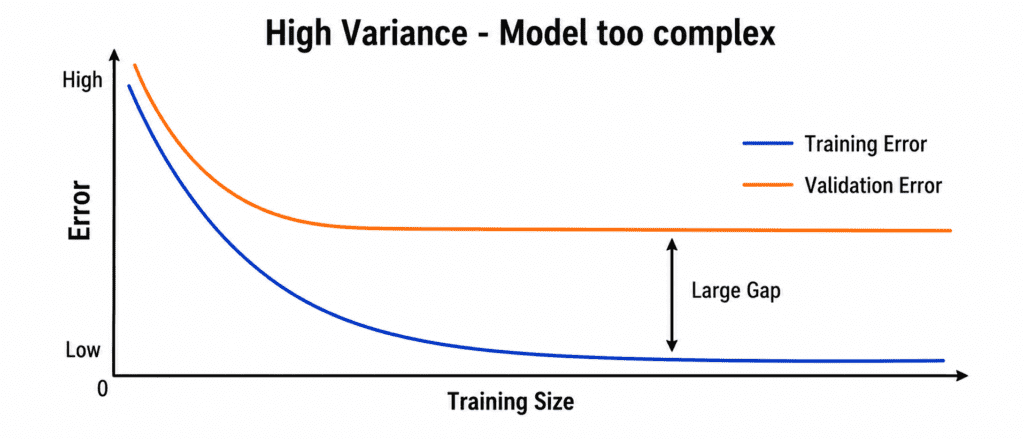

Pattern 2: High Variance (Overfitting)

What you see:

- Training error is very low (close to zero)

- Validation error is high

- Large gap between the two lines

- Gap gets wider as training progresses (when the x-axis is training iterations)

Why this happens:

Model is too complex. It memorizes the training data but cannot generalize to new data.

The fix:

- Get more training data

- Simplify the model

- Add regularization

- Early stopping
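Early stopping is the easiest of these fixes to show in code. The sketch below is a minimal illustration, not a library API: the function name `early_stopping_epoch`, the `patience` parameter, and the validation errors in `history` are all hypothetical. It stops once validation error has failed to beat its best value for `patience` epochs in a row.

```python
def early_stopping_epoch(val_errors, patience=2):
    """Return (best_epoch, stop_epoch) for a list of per-epoch validation errors.

    Training stops once the validation error has failed to improve on its
    best value for `patience` consecutive epochs.
    """
    best_error = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, error in enumerate(val_errors):
        if error < best_error:
            best_error = error
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                # Stop here and keep the model saved at best_epoch.
                return best_epoch, epoch
    # Patience never ran out: training used every epoch.
    return best_epoch, len(val_errors) - 1


# Hypothetical validation errors: improving, then creeping back up.
history = [0.50, 0.40, 0.35, 0.36, 0.37, 0.38, 0.30]
best, stopped = early_stopping_epoch(history, patience=2)
print(best, stopped)
```

Note the trade-off in this made-up history: a patience of 2 stops before the late improvement in the final epoch. A larger patience would catch it, at the cost of more training time.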

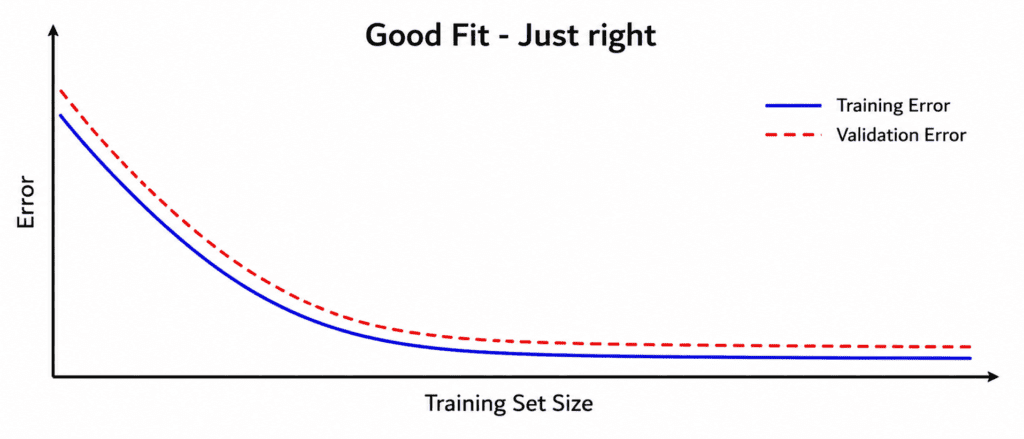

Pattern 3: Good Fit

What you see:

- Training error is low

- Validation error is also low

- Small gap between lines

- Both lines flatten at good values

This is what you want.

What Learning Curves Tell You

| Problem | Training Error | Validation Error | Gap |
|---|---|---|---|
| High Bias (Underfitting) | High | High | Small |
| High Variance (Overfitting) | Very Low | High | Large |
| Good Fit | Low | Low | Small |

One look at the graph. Instant diagnosis.
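The table can be written as a small rule. This is only a sketch: the function `diagnose` and its two thresholds, `acceptable_error` and `max_gap`, are assumptions you would set for your own problem, since "high" and "large" mean nothing without a target. It also returns a fourth label the table does not list, for the case where training error is high and the gap is large at the same time.

```python
def diagnose(train_error, val_error, acceptable_error, max_gap):
    """Map one point on a learning curve to a row of the diagnosis table.

    `acceptable_error` and `max_gap` are thresholds chosen by you;
    nothing in the curve itself defines them.
    """
    high_bias = train_error > acceptable_error
    high_variance = (val_error - train_error) > max_gap
    if high_bias and high_variance:
        return "High Bias and High Variance"
    if high_bias:
        return "High Bias (Underfitting)"
    if high_variance:
        return "High Variance (Overfitting)"
    return "Good Fit"


# Hypothetical error rates, with a 20% target and a 10-point gap tolerance.
print(diagnose(0.45, 0.47, acceptable_error=0.20, max_gap=0.10))
print(diagnose(0.10, 0.50, acceptable_error=0.20, max_gap=0.10))
print(diagnose(0.12, 0.15, acceptable_error=0.20, max_gap=0.10))
```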

More Data Will Help Only One Problem

If you have High Variance (Overfitting):

More data WILL help. Each new example makes the training set harder to memorize, so the model is forced to generalize.

If you have High Bias (Underfitting):

More data will NOT help. Model is too simple. It will never learn the pattern. You need a better model first.
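You can see both behaviours in a toy experiment with no libraries. Everything here is an assumption made for illustration: the noise-free sine target, the evenly spaced training points, and the two stand-in models. `fit_constant` plays the high-bias model (it only ever predicts one number). `fit_memorizer` plays the high-variance model (it copies the label of the nearest training point).

```python
import math


def target(x):
    # Assumed noise-free ground truth, chosen only for illustration.
    return math.sin(x)


def mse(predict, xs):
    return sum((predict(x) - target(x)) ** 2 for x in xs) / len(xs)


def fit_constant(train_xs):
    """High-bias stand-in: always predicts the mean training label."""
    mean_y = sum(target(x) for x in train_xs) / len(train_xs)
    return lambda x: mean_y


def fit_memorizer(train_xs):
    """High-variance stand-in: copies the label of the nearest training point."""
    def predict(x):
        nearest = min(train_xs, key=lambda t: abs(t - x))
        return target(nearest)
    return predict


val_xs = [6 * j / 49 for j in range(50)]  # fixed validation grid on [0, 6]

results = {}
for name, fit in [("constant", fit_constant), ("memorizer", fit_memorizer)]:
    for n in (10, 50, 400):
        # n evenly spaced training points on [0, 6]
        train_xs = [6 * (i + 0.5) / n for i in range(n)]
        model = fit(train_xs)
        train_error, val_error = mse(model, train_xs), mse(model, val_xs)
        results[(name, n)] = (train_error, val_error)
        print(f"{name:9s} n={n:3d} train={train_error:.4f} val={val_error:.4f}")
```

The constant model's errors barely move as n grows. The memorizer's training error stays at zero while its validation error falls, so its gap closes. Real data has noise, so expect the memorizer's validation error to flatten above zero instead of heading all the way down.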

The Ideal Learning Curve (What You Want)

As you add more training data:

- Training error starts low and stays low (a slight rise is normal)

- Validation error starts higher but drops

- Both lines converge to similar low error

- Gap becomes small

If you see this, stop. Your model is ready.

Real Example

You are building a house price predictor.

First attempt (Linear Regression):

- Training error: $80k

- Validation error: $85k

→ High Bias. Too simple.

Second attempt (Deep Neural Network with 10 layers):

- Training error: $5k

- Validation error: $70k

→ High Variance. Overfitting.

Third attempt (Random Forest with 100 trees):

- Training error: $35k

- Validation error: $38k

→ Good fit. Ready.
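The arithmetic behind those three verdicts is just the gap between the two numbers. Here is a short sketch using the errors from the attempts above (in thousands of dollars). The `relative_gap` measure is an extra added here for illustration, not something the example defines.

```python
# Errors in thousands of dollars, copied from the three attempts above.
attempts = {
    "Linear Regression": (80, 85),
    "Deep Neural Network": (5, 70),
    "Random Forest": (35, 38),
}

gaps = {}
for name, (train_error, val_error) in attempts.items():
    gap = val_error - train_error
    relative_gap = gap / val_error  # share of validation error not seen in training
    gaps[name] = gap
    print(f"{name}: gap=${gap}k ({relative_gap:.0%} of validation error)")
```

The gaps come out as $5k, $65k, and $3k. Linear Regression and Random Forest both have small gaps, so the gap alone cannot separate them. What separates them is the level: $85k validation error versus $38k.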

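Code (Scikit-learn)

```python
import numpy as np
from sklearn.model_selection import learning_curve

# `model`, `X`, and `y` are your estimator, features, and targets.
train_sizes, train_scores, val_scores = learning_curve(
    model, X, y, cv=5,
    train_sizes=np.linspace(0.1, 1.0, 10),
)
# These are scores (higher is better), one column per CV fold.
train_mean = train_scores.mean(axis=1)
val_mean = val_scores.mean(axis=1)
```

When you see a problem, ask: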

| Question | If yes, diagnosis |
|---|---|
| Both errors high? | Underfitting (High Bias) |
| Large gap between lines? | Overfitting (High Variance) |
| Gap shrinking as data increases? | Need more data |
| Gap not shrinking? | More data is not helping. Change the model. |
| Validation error going up? | Overfitting severely. Stop training. |
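The trend questions in the table need more than one point, so they are easy to check in code once you have the curve as a list. The sketch below is a rough one under stated assumptions: `curve_trends` is a hypothetical helper, the numbers are made up, and it compares only the first and last points (plus the lowest validation error), so it ignores wiggles in between.

```python
def curve_trends(curve):
    """Summarize a learning curve given as (x, train_error, val_error) tuples.

    x can be training set size or training iteration.
    """
    _, first_train, first_val = curve[0]
    _, last_train, last_val = curve[-1]
    lowest_val = min(val_error for _, _, val_error in curve)
    return {
        "gap_shrinking": (last_val - last_train) < (first_val - first_train),
        # Validation error has climbed back above its best value.
        "validation_rising": last_val > lowest_val,
    }


# Hypothetical curve over training set size: the gap closes as data grows.
by_size = [(100, 0.02, 0.40), (500, 0.05, 0.30), (1000, 0.08, 0.22)]
print(curve_trends(by_size))

# Hypothetical curve over epochs: validation error turns upward.
by_epoch = [(1, 0.30, 0.35), (2, 0.15, 0.28), (3, 0.05, 0.33)]
print(curve_trends(by_epoch))
```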

Quick Quiz

Q1: Your training error is 10%. Validation error is 50%. What is the problem?

A1: High Variance (Overfitting). Model memorized training but fails on validation.

Q2: Both errors are 45% and close together. What is the problem?

A2: High Bias (Underfitting). Model too simple. Need more complexity.

Q3: You add more data. Validation error drops from 50% to 30%. What does this tell you?

A3: You had overfitting. More data helped. Keep adding data while validation error keeps dropping.

Q4: You add more data. Both errors stay at 45%. What does this tell you?

A4: You have underfitting. More data will not help. Change the model.

Key Takeaways (5 Lines)

- Learning curves = Two lines (training error, validation error) vs training size.

- High Bias (Underfitting) = Both errors high and close. Need stronger model.

- High Variance (Overfitting) = Large gap between errors. Need more data or simpler model.

- More data helps overfitting but does NOT help underfitting.

- Good fit = Both errors low and close together.