Before we dive into each role, here’s a snapshot of how the three are commonly distinguished:

| Role | Core Question | Main Output | Key Tools |
|---|---|---|---|
| Data Analyst | What happened? What should we do? | Reports, dashboards, ad-hoc insights | SQL, Excel, Pandas, Tableau |
| Data Scientist | What will happen? Why? | Predictive models, experiments, algorithms | Python (scikit-learn, TensorFlow), R, Jupyter |
| Data Engineer | How do we make data usable? | Pipelines, data warehouses, infrastructure | SQL, Python, Spark, Airflow, dbt, cloud services |

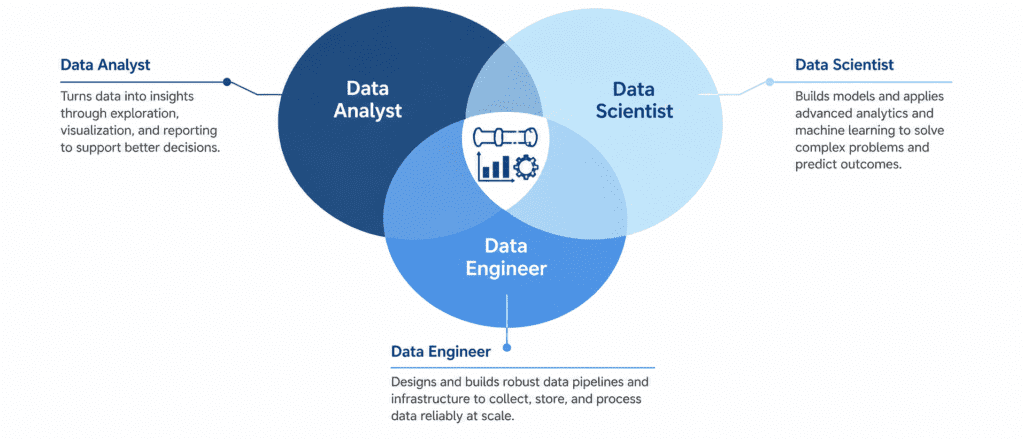

Notice something? All three revolve around data, but they operate at very different levels of abstraction. A rookie mistake is thinking one is “better” than the others. That’s like saying a chef is better than a farmer: you need all three to serve a great meal.

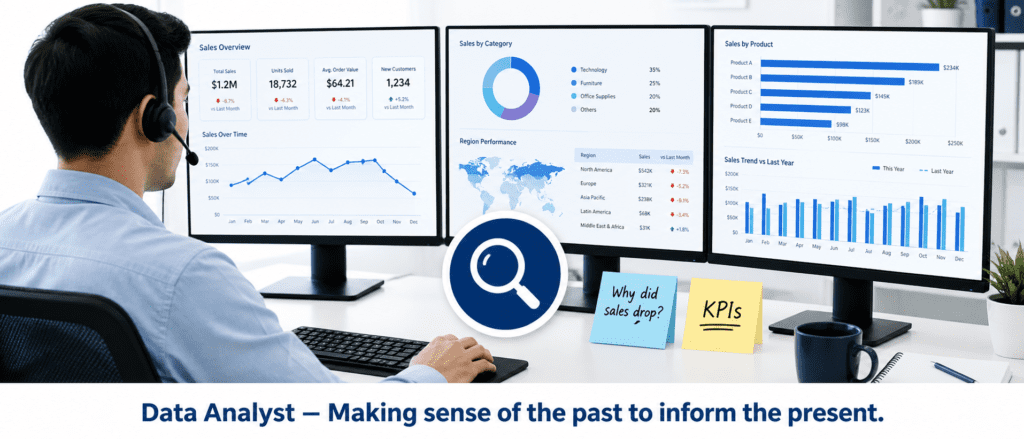

The Data Analyst: The Business’s Eyes and Ears

A Data Analyst is the closest to the business. They answer specific, concrete questions like:

- Why did churn increase 5% last month?

- Which product category performed best in Q2?

- What’s our average customer lifetime value by region?

Day-to-day, an analyst spends a lot of time in:

- SQL – pulling data from databases.

- Excel / Google Sheets – pivot tables, VLOOKUPs, quick charts.

- Tableau / Power BI – building interactive dashboards for stakeholders.

- Python (Pandas, Matplotlib) – when Excel hits a wall or when automation is needed.
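To make the SQL step concrete, here is a minimal sketch of the kind of query behind a question like “Which product category performed best in Q2?”. The `orders` table, its columns, and every row in it are invented for illustration, and an in-memory SQLite database stands in for a real warehouse.

```python
import sqlite3

# Hypothetical orders table; in-memory SQLite stands in for the warehouse.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_date TEXT, category TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("2024-03-28", "Electronics", 900.0),  # Q1, excluded by the date filter
        ("2024-04-03", "Electronics", 500.0),
        ("2024-05-17", "Clothing", 420.0),
        ("2024-06-09", "Electronics", 310.0),
        ("2024-06-21", "Clothing", 275.0),
    ],
)

def revenue_by_category(conn, start, end):
    """Total revenue per category for start <= order_date < end, highest first."""
    query = """
        SELECT category, SUM(revenue) AS total_revenue
        FROM orders
        WHERE order_date >= ? AND order_date < ?
        GROUP BY category
        ORDER BY total_revenue DESC
    """
    return conn.execute(query, (start, end)).fetchall()

q2 = revenue_by_category(conn, "2024-04-01", "2024-07-01")
for category, total in q2:
    print(f"{category}: {total:.2f}")
# Electronics: 810.00
# Clothing: 695.00
```

Storing dates as ISO strings keeps the range filter simple, because ISO dates sort correctly as text. A real warehouse would normally use a proper date type.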

Once the data is pulled, a tiny analyst script in pandas might look like this:

```python
import pandas as pd

# Monthly sales data (imagine you pulled this from a database via SQL)
sales = pd.DataFrame({
    'month': ['Jan', 'Feb', 'Mar', 'Apr'],
    'Electronics': [1200, 1350, 1100, 1500],
    'Clothing': [800, 950, 1020, 890]
})

# Index by month so only the numeric category columns remain
by_month = sales.set_index('month')

# Quick diagnostic: which category grew fastest from Jan to Apr?
growth = (by_month.loc['Apr'] - by_month.loc['Jan']) / by_month.loc['Jan'] * 100

print("Percentage growth from Jan to Apr:")
print(growth.round(1))
```

Output:

```
Percentage growth from Jan to Apr:
Electronics    25.0
Clothing       11.2
dtype: float64
```

This is classic analytical work: descriptive summary, simple arithmetic, instant answer. The analyst then writes an email or updates a slide: “Electronics grew 25%, Clothing 11% — let’s investigate the drivers.” No machine learning, just clear, decision-ready facts.

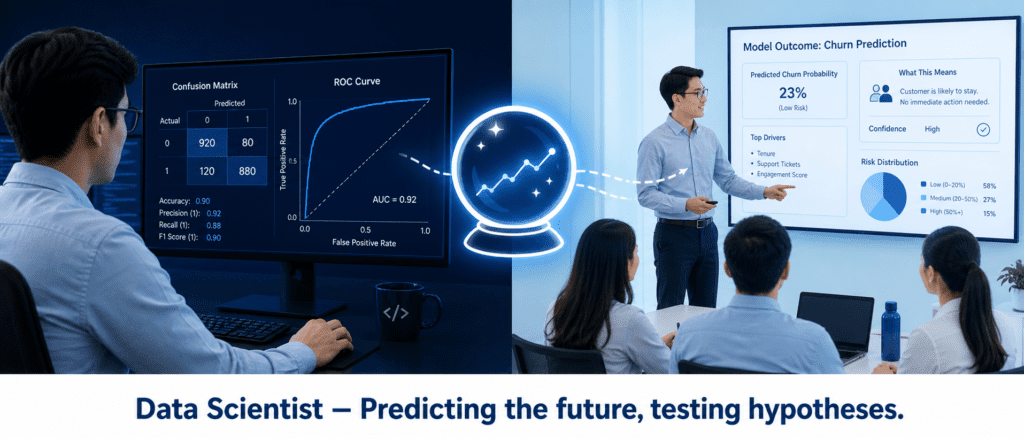

The Data Scientist: Builder of Predictive Brains

Data Scientists take the facts the Analyst uncovered and ask “What if?” and “What’s next?” They build models that forecast, classify, or recommend. Common activities:

- Machine learning – training regression, classification, clustering models.

- Statistical analysis – A/B testing, significance testing, experimental design.

- Feature engineering – turning raw data into meaningful predictors.

- Python (scikit-learn, XGBoost, TensorFlow) or R – their main coding environment.
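The A/B testing item can be made concrete with a small significance check. The sketch below is one common approach, a two-sided two-proportion z-test with a pooled standard error, written in plain Python. The conversion counts are made up, and a real analysis would more likely rely on a statistics library.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value), using the pooled-proportion standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value

# Made-up experiment: 2,000 users per arm, 10.0% vs 12.5% conversion
z, p = two_proportion_z_test(conv_a=200, n_a=2000, conv_b=250, n_b=2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these invented numbers the p-value lands a little above 0.01, so the difference would count as significant at the conventional 5% level. Whether it matters commercially is a separate question.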

A data scientist might take that same sales data, add ad spend as a second feature, and fit a simple regression to forecast next month’s sales:

```python
from sklearn.linear_model import LinearRegression
import numpy as np

# Illustrative data: the Electronics sales from above plus made-up ad spend
months = np.array([1, 2, 3, 4])
sales = np.array([1200, 1350, 1100, 1500])
ad_spend = np.array([200, 250, 180, 300])

# One row per month, two features: month number and ad spend
X = np.column_stack([months, ad_spend])
model = LinearRegression().fit(X, sales)

# Predict month 5 with ad spend of 320
pred = model.predict([[5, 320]])
print(f"Predicted sales for month 5: ${pred[0]:.2f}")
```

Output:

```
Predicted sales for month 5: $1552.74
```

That number goes into a recommendation: “Based on the trend, and if we raise ad spend to $320, we’d expect around $1,553 in sales next month.” With only four data points and three fitted parameters, this fit is purely illustrative. On real data the scientist would also check model accuracy, inspect residual plots, and report confidence intervals.
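As a hedged illustration of what checking accuracy and residuals can look like, the sketch below fits a simpler one-feature trend line (month only, not the two-feature model above) in plain Python and reports the residuals and R². The helper names `fit_line` and `r_squared` are introduced here for illustration only.

```python
from statistics import fmean

months = [1, 2, 3, 4]
sales = [1200, 1350, 1100, 1500]  # same Electronics figures as above

def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = fmean(x), fmean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def r_squared(y, fitted):
    """Share of the variance in y explained by the fitted values."""
    y_bar = fmean(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

intercept, slope = fit_line(months, sales)
fitted = [intercept + slope * m for m in months]
residuals = [s - f for s, f in zip(sales, fitted)]

print("Residuals:", residuals)                     # [10.0, 95.0, -220.0, 115.0]
print(f"R^2: {r_squared(sales, fitted):.2f}")      # R^2: 0.23
```

The March dip sits 220 below the trend line and dominates the residuals, and month alone explains only about 23% of the variance. That is the kind of signal that would prompt a scientist to look for better features before trusting a forecast.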

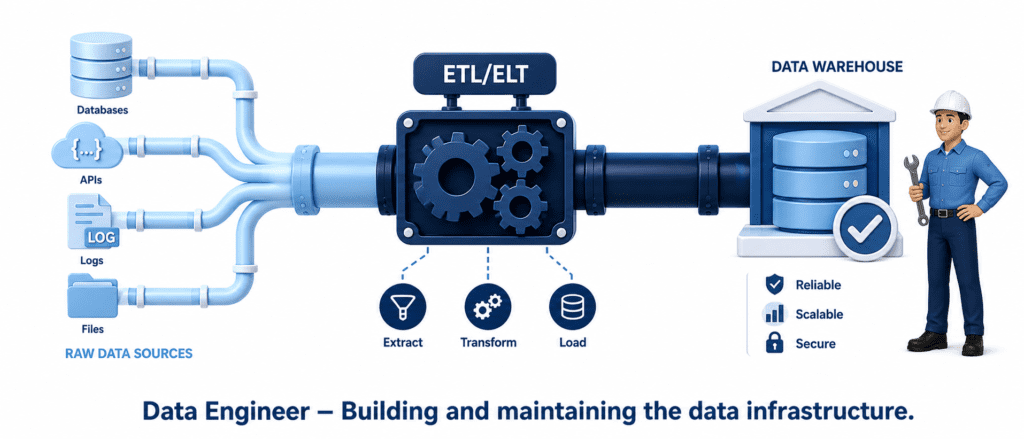

The Data Engineer: Architect of the Data Highway

If Analysts and Scientists are the pilots and navigators, Data Engineers are the aircraft mechanics and air traffic controllers. They ensure data flows reliably, securely, and quickly from source to storage to the hands of analysts. Their world revolves around:

- Data pipelines – moving and transforming data (ETL/ELT).

- Data warehousing – structuring data for fast querying (Snowflake, BigQuery, Redshift).

- Big data tools – Spark, Hadoop, Kafka for data at massive scale.

- Workflow orchestration – Airflow, Prefect, Dagster.

- Languages – Python, SQL, Java/Scala.
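The core idea behind workflow orchestration is that tasks declare what they depend on and a scheduler works out a valid run order. The toy sketch below shows only that idea, using the standard library's `graphlib`. It is not the API of Airflow, Prefect, or Dagster, and the task names are hypothetical placeholders.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

run_log = []

def make_task(name):
    """Return a placeholder task that only records that it ran."""
    def task():
        run_log.append(name)
    return task

# Hypothetical pipeline: each key depends on the tasks in its set.
dependencies = {
    "extract_orders": set(),
    "extract_signups": set(),
    "clean": {"extract_orders", "extract_signups"},
    "load_warehouse": {"clean"},
    "refresh_dashboard": {"load_warehouse"},
}
tasks = {name: make_task(name) for name in dependencies}

def run_pipeline(tasks, dependencies):
    """Run every task once, never before the tasks it depends on."""
    for name in TopologicalSorter(dependencies).static_order():
        tasks[name]()

run_pipeline(tasks, dependencies)
print(" -> ".join(run_log))
```

Real orchestrators add what this sketch leaves out: schedules, retries, parallel execution, and a record of past runs.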

A small taste of an engineer’s Python work could be a simple extract-transform-load snippet like this:

```python
import pandas as pd
from sqlalchemy import create_engine

# Simulate extracting raw sales data from a CSV (in reality, from an API or database)
raw = pd.read_csv('daily_sales.csv')

# Simple transformation: add a processed_date column
raw['processed_date'] = pd.Timestamp.now().strftime('%Y-%m-%d %H:%M')

# Load into a SQLite staging table (in production, a cloud data warehouse)
engine = create_engine('sqlite:///sales.db')
raw.to_sql('sales_staging', engine, if_exists='replace', index=False)

print(f"Loaded {len(raw)} rows into sales_staging.")
```

Output (for a `daily_sales.csv` with 2,000 rows):

```
Loaded 2000 rows into sales_staging.
```

This script is dead simple, but in a real architecture, the engineer ensures it runs every hour, handles errors gracefully, scales to terabytes, and notifies the team if something breaks.
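Two of those production concerns, graceful error handling and notifying the team, can be sketched with a small retry wrapper. Everything named here is an assumption for illustration: `notify_team` is a placeholder that only logs, and `load_sales` simulates a flaky load step rather than touching a real database. Scheduling is left to the orchestrator.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")

def notify_team(message):
    """Placeholder alert hook; a real one might post to chat or page on-call."""
    log.error("ALERT: %s", message)

def run_with_retries(step, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call step(); retry with exponential backoff, then alert and re-raise."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception as exc:
            log.warning("Attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt == max_attempts:
                notify_team(f"{step.__name__} failed after {max_attempts} attempts")
                raise
            sleep(base_delay * 2 ** (attempt - 1))

# Simulated flaky load step: fails twice, then succeeds.
calls = {"count": 0}

def load_sales():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("warehouse unavailable")
    return 2000  # pretend row count

rows = run_with_retries(load_sales, base_delay=0.01)
print(f"Loaded {rows} rows after {calls['count']} attempts.")
# Loaded 2000 rows after 3 attempts.
```

Re-raising after the final attempt is deliberate: the alert informs people, while the exception lets the scheduler mark the run as failed instead of silently continuing.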

How They Collaborate: A Real-World View

Imagine an e-commerce company launching a new loyalty program. The three roles would move like this:

- Data Engineer sets up a pipeline that collects clickstream data, transaction logs, and program sign‑ups into a clean, queryable data warehouse. Every morning, fresh data is ready.

- Data Analyst queries that warehouse, builds a dashboard showing daily enrollments, revenue per loyalty member vs. non-member, and regional adoption. They surface a curious trend: the South region has 20% lower sign‑up rates.

- Data Scientist picks up the anomaly, formulates a hypothesis — perhaps shipping delays in the South reduce trust — and builds a classification model to predict which users are likely to enroll, using features from the engineer’s pipeline. They find that prompt delivery is the strongest predictor.

- The Analyst takes the model’s insight back to the business, recommending targeted free-shipping offers in the South, while the Engineer sets up automated reporting so the team can track whether the intervention works.

No single role could have done all that alone. They are a relay team, passing the baton from raw data to real impact.