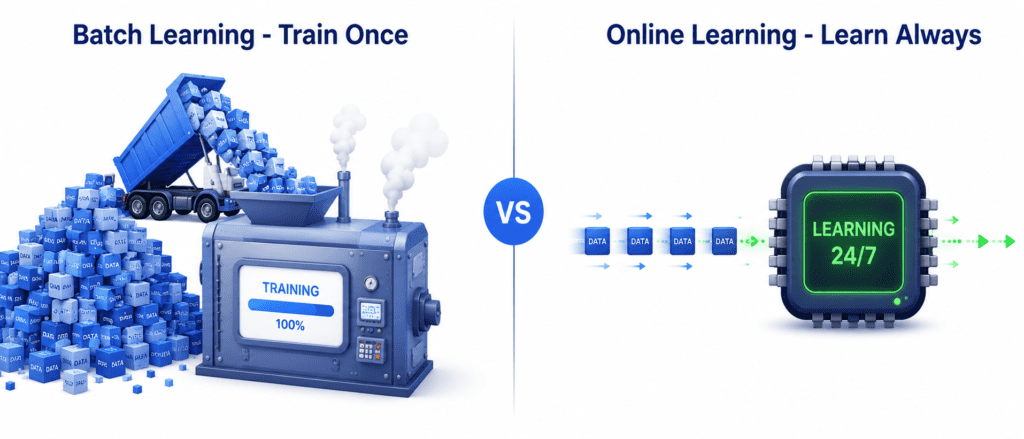

Batch vs Online Learning: the core question is whether a model can learn from new data on the fly or must be retrained from scratch every time.

Batch Learning (Offline Learning)

The Core Idea: The system is trained on all available data at once. It cannot learn incrementally. Once trained and deployed, it stops learning completely.

How It Works Step by Step:

- You collect all your training data

- You train the model on this complete dataset (for large datasets, this can take hours or days)

- You deploy the model to production

- The model makes predictions but does NOT learn anything new

- When new data arrives, you collect it

- You combine the new data with the old data

- You retrain a completely new model from scratch

- You replace the old model with the new one
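The steps above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a toy one-feature linear model (y ≈ w*x + b) fitted with ordinary least squares; `fit_from_scratch` and `predict` are hypothetical helper names, not part of any library.

```python
# Minimal batch-learning sketch. Assumptions: a 1-D linear model y ≈ w*x + b
# fitted with ordinary least squares; fit_from_scratch and predict are
# hypothetical helper names, not a library API.

def fit_from_scratch(xs, ys):
    """Train on the complete dataset in one go and return (w, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return w, b

def predict(model, x):
    w, b = model
    return w * x + b

# Collect all the training data (toy values generated by y = 2x + 1).
old_x = [1.0, 2.0, 3.0, 4.0]
old_y = [3.0, 5.0, 7.0, 9.0]

# Train once, then deploy. In production the model only predicts;
# nothing in predict() changes its parameters.
model = fit_from_scratch(old_x, old_y)
prediction = predict(model, 5.0)

# New data arrives: combine it with the old data, retrain a brand-new
# model from scratch, and replace the old model with it.
new_x = [5.0, 6.0]
new_y = [11.0, 13.0]
model = fit_from_scratch(old_x + new_x, old_y + new_y)
```

Every retrain revisits every example collected so far, which is why retraining cost keeps growing as the dataset grows.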

The Problem This Creates:

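The world keeps changing, so the data a deployed model sees gradually drifts away from the data it was trained on. This is called data drift, and the resulting loss of accuracy is often called model rot (or model decay). A model that performed well 6 months ago may perform badly today because user behavior, market conditions, or technology have changed.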
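To make model rot concrete, here is a toy sketch under stated assumptions: the "true" relationship is y = 2x at training time and silently becomes y = 3x after deployment, and the model is a single slope fitted once and then frozen. The numbers are invented purely for illustration.

```python
# Sketch of data drift. Assumptions: a toy 1-D model y ≈ w*x (line through
# the origin); the real-world relationship shifts from y = 2x to y = 3x
# after the model is deployed.

def fit_slope(xs, ys):
    """Least-squares slope for a line through the origin: w = sum(xy) / sum(x^2)."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

def mean_squared_error(w, xs, ys):
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

xs = [1.0, 2.0, 3.0, 4.0]
old_ys = [2 * x for x in xs]   # behaviour when the model was trained
new_ys = [3 * x for x in xs]   # behaviour months later (the world changed)

w = fit_slope(xs, old_ys)      # trained once, then frozen

error_before = mean_squared_error(w, xs, old_ys)
error_after = mean_squared_error(w, xs, new_ys)
print(error_before, error_after)
```

The model itself never changed; only the data did, yet its error goes from zero to clearly nonzero. A batch system can only fix this by retraining.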

Real-World Example:

A cat vs dog image classifier. Cats and dogs do not evolve overnight, so a model trained on photos from 2020 will likely still work well in 2025. If people started dressing their pets in costumes, the model might need retraining, but retraining it once a month is easy to schedule.

When to Use Batch Learning:

- When the world changes slowly

- When you have powerful computing resources

- When you do not need instant adaptation

- When retraining once a day or once a week is acceptable

Pros and Cons:

| Pros | Cons |
|---|---|
| Simple to implement | Can take a long time to train |
| Easy to debug | Can need a lot of computing power on large datasets |
| No complex infrastructure | Cannot adapt to changes quickly |
| Well understood | Must retrain from scratch for updates |

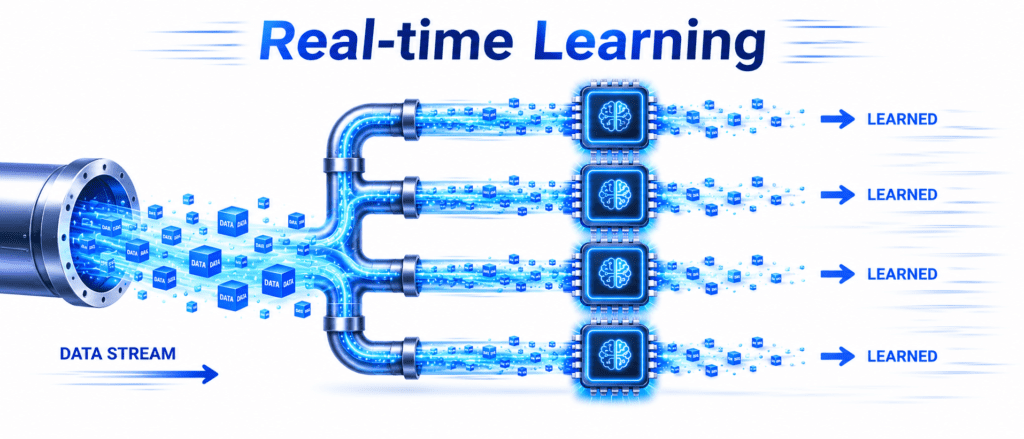

Online Learning (Incremental Learning)

The Core Idea: The system is trained incrementally by feeding it data instances one by one or in small groups called mini-batches. It learns continuously.

How It Works Step by Step:

- New data arrives (one instance or a small mini-batch)

- The model performs a fast, cheap learning step on this data

- The model's parameters are updated immediately, so the next prediction already reflects the new data

- You can then discard the data to save memory

- Repeat these steps for as long as new data keeps arriving

- The model continuously adapts as the data changes
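The loop above can be sketched with a one-feature linear model that takes one stochastic gradient descent step per incoming example. This is a minimal sketch, assuming plain SGD on squared error; the class name `OnlineLinearModel`, the learning rate of 0.05, and the synthetic stream are illustrative choices, not a reference implementation.

```python
# Online learning sketch. Assumptions: a 1-D linear model y ≈ w*x + b updated
# with one stochastic gradient descent step per incoming instance; the class
# name, learning rate, and data stream are illustrative.

class OnlineLinearModel:
    def __init__(self, learning_rate=0.05):
        self.w = 0.0
        self.b = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return self.w * x + self.b

    def learn_one(self, x, y):
        """One cheap gradient step on the squared error of a single example."""
        error = self.predict(x) - y
        # Gradient of 0.5 * error**2 is error * x for w and error for b.
        self.w -= self.learning_rate * error * x
        self.b -= self.learning_rate * error

def data_stream():
    """Stand-in for an endless stream: y = 2x + 1 on repeat."""
    while True:
        for x in (0.0, 0.5, 1.0, 1.5, 2.0):
            yield x, 2 * x + 1

model = OnlineLinearModel()
stream = data_stream()
for _ in range(2000):
    x, y = next(stream)
    model.learn_one(x, y)   # learn from the instance...
    # ...then let it go: nothing is stored, so memory use stays constant.

print(round(model.w, 2), round(model.b, 2))
```

Each update touches only one example, so its cost does not depend on how much data the model has already seen.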

Real-World Example:

Stock market prediction. Stock prices change every second. If you collect a year of stock data and retrain a model once a week, the model is always working from stale conditions: by the time training finishes, the market may already have changed. Online learning lets the model learn from each trade as it happens, adapting to market conditions in real time.

Another Real-World Example:

User behavior tracking on a website. As millions of users click, scroll, and buy products, the recommendation model learns from each interaction as it arrives. If a new trend emerges (everyone suddenly wants blue shoes), an online learning model can adapt within minutes rather than weeks.
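A tiny version of the blue-shoes scenario can be sketched with a model that is just one number: the estimated click rate for a product category. This assumes each event is 1 (click) or 0 (no click) and that the estimate takes one SGD step on squared error per event, which simplifies to `p += learning_rate * (click - p)`. The function name, learning rate, and event counts are hypothetical.

```python
# Sketch: an online click-rate estimate for one product category.
# Assumptions: each event is 1 (click) or 0 (no click); the model is a single
# number p updated by one SGD step on squared error per event, which works out
# to p += learning_rate * (click - p). Names and values are hypothetical.

def update_click_rate(p, click, learning_rate=0.05):
    return p + learning_rate * (click - p)

p = 0.0

# Phase 1: nobody clicks on blue shoes.
for _ in range(200):
    p = update_click_rate(p, 0)
before_trend = p

# Phase 2: a trend starts and every user clicks on blue shoes.
for _ in range(100):
    p = update_click_rate(p, 1)
after_trend = p
```

The learning rate decides how fast the estimate follows the trend: a higher rate adapts faster but also forgets older behavior faster, while a lower rate is steadier but slower to react.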

Out-of-Core Learning (Special Use Case):

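This is when your dataset is too big to fit in your computer’s main memory (RAM). For example, you might have 10 terabytes of video data but only 16 GB of RAM. Online learning algorithms solve this: you load the data in small chunks, learn from each chunk, discard it, and load the next one, so memory use stays bounded by the chunk size rather than the dataset size. Note that out-of-core training is usually run offline over a fixed dataset, so "incremental learning" is the more accurate name for it.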
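A chunked training loop might look like the sketch below. It assumes the data is a CSV of `x,y` rows far larger than RAM; `io.StringIO` stands in for the real file, and the chunk size of 3 is arbitrary. As the incremental learner it uses running sums for least squares (a swapped-in technique chosen because it gives an exact answer); a gradient-descent update per chunk would fit the same loop.

```python
import io
from itertools import islice

# Out-of-core sketch. Assumptions: the data is a CSV of "x,y" rows too big for
# RAM; io.StringIO stands in for the real file, and CHUNK_SIZE is arbitrary.
# The model is an incremental least-squares fit kept as running sums.

CHUNK_SIZE = 3

def read_chunks(file_obj, chunk_size):
    """Yield lists of (x, y) rows, holding at most one chunk in memory."""
    while True:
        lines = list(islice(file_obj, chunk_size))
        if not lines:
            return
        yield [tuple(float(v) for v in line.split(",")) for line in lines]

# These running sums are all the model keeps between chunks.
n = sum_x = sum_y = sum_xy = sum_xx = 0.0

big_file = io.StringIO("".join(f"{x},{2 * x + 1}\n" for x in range(10)))
for chunk in read_chunks(big_file, CHUNK_SIZE):
    for x, y in chunk:          # learn from this chunk...
        n += 1
        sum_x += x
        sum_y += y
        sum_xy += x * y
        sum_xx += x * x
    # ...and the chunk is dropped when the loop moves on to the next one.

# Least-squares line y ≈ w*x + b from the accumulated statistics.
w = (n * sum_xy - sum_x * sum_y) / (n * sum_xx - sum_x ** 2)
b = (sum_y - w * sum_x) / n
```

Swapping `io.StringIO` for a real file handle opened with `open(path)` leaves the loop unchanged, because both yield one line per iteration.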

When to Use Online Learning:

- When data changes rapidly

- When you have limited memory (phones, sensors, embedded devices)

- When your dataset is too big to fit in RAM

- When you need instant adaptation

Pros and Cons:

| Pros | Cons |
|---|---|
| Very fast, cheap updates | More complex to implement |
| Uses very little memory | Sensitive to bad data |
| Adapts to changes in real time | Requires careful monitoring |
| Can handle very large or never-ending data streams | If bad data enters, the model gets worse |

Important Warning:

If bad data is fed to an online learning system, its performance can decline quickly, because every instance updates the model right away. If a sensor malfunctions and sends wrong values, the model learns those wrong values. You must monitor online learning systems closely and be ready to switch learning off or revert to a backup (a previously working state of the model) if something goes wrong.
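The two safeguards mentioned here, watching the input and keeping a backup to revert to, can be sketched as follows. This assumes a toy model y ≈ w*x, a known physical range for valid sensor readings, and a manual checkpoint; `GuardedOnlineModel`, `VALID_RANGE`, and the learning rate are hypothetical names and values. In a real system, the revert would be triggered by monitoring rather than called by hand.

```python
# Sketch of a guarded online learner. Assumptions: readings outside a known
# valid range are treated as sensor faults, and the last known-good weight is
# kept as a backup. All names, limits, and rates here are hypothetical.

VALID_RANGE = (0.0, 100.0)

class GuardedOnlineModel:
    def __init__(self, learning_rate=0.005):
        self.w = 0.0                  # model: y ≈ w * x
        self.learning_rate = learning_rate
        self.checkpoint = self.w      # last known-good state
        self.rejected = 0

    def learn_one(self, x, y):
        low, high = VALID_RANGE
        # Input monitoring: skip readings that cannot be physically real.
        if not (low <= x <= high and low <= y <= high):
            self.rejected += 1
            return
        error = self.w * x - y
        self.w -= self.learning_rate * error * x

    def save_checkpoint(self):
        self.checkpoint = self.w

    def revert(self):
        """Roll back to the last known-good state."""
        self.w = self.checkpoint

model = GuardedOnlineModel()
for _ in range(50):
    for x in range(1, 11):
        model.learn_one(float(x), 2.0 * x)   # healthy sensor: y = 2x
model.save_checkpoint()

# The sensor malfunctions: a wildly out-of-range reading is rejected.
model.learn_one(5.0, 9999.0)

# A subtler fault stays in range, slips past the check, and drags w down.
for x in range(1, 11):
    model.learn_one(float(x), 0.0)
degraded_w = model.w

# Here we revert by hand; monitoring would normally trigger this.
model.revert()
```

The range check catches only obvious faults; the in-range fault still corrupts the model, which is why monitoring performance and keeping a backup matter too.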

Comparison Table: Batch vs Online Learning

| Feature | Batch Learning | Online Learning |
|---|---|---|
| Training Time | Often hours to days on large datasets | Fast, incremental updates (seconds or less each) |
| Memory Usage | High (typically the whole dataset) | Low (one instance or mini-batch at a time) |
| Adapts to Change | ❌ No (needs retraining) | ✅ Yes (continuous) |
| Needs Retraining | ✅ Yes (from scratch) | ❌ No |
| Complexity | Simple | Moderate to High |
| Best For | Stable environments | Fast-changing environments |
| Risk | Low | Medium (bad data causes damage) |