## Opening Hook

The train/validation/test split comes down to one question: "Would you let a student take the final exam using the exact problems they studied from? No. That would be cheating." Machine learning is the same. You need separate exams to know whether your model actually learned or just memorized.

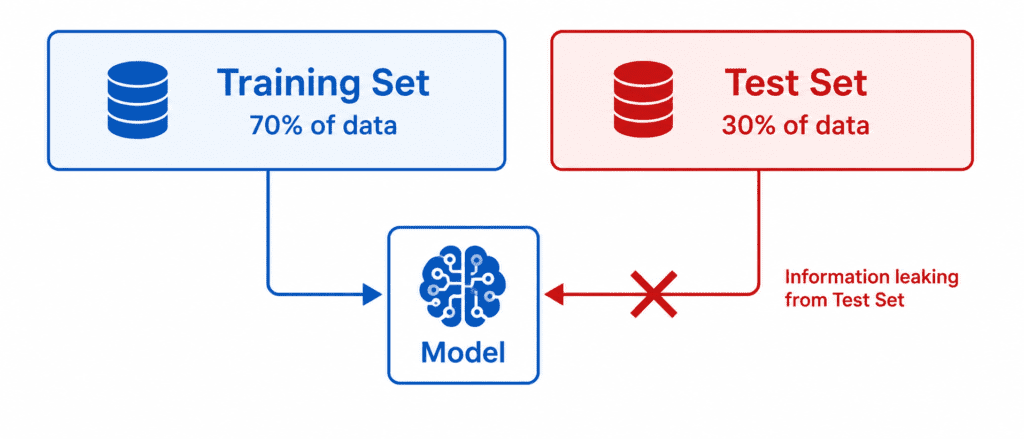

## The Problem with Two Sets

Most beginners think they need only two sets:

| Set | Purpose |
|---|---|
| Training Set | Model learns from this |
| Test Set | Final check after training |

But there is a hidden problem.

With only two sets, tuning hyperparameters (learning rate, number of trees, etc.) means using test-set results to make decisions: you look at the test score, change something, and look at the test score again.

This means the test set is secretly influencing your choices. It is no longer “unseen” data.

Result: your model looks great on your test set but fails on genuinely new data.

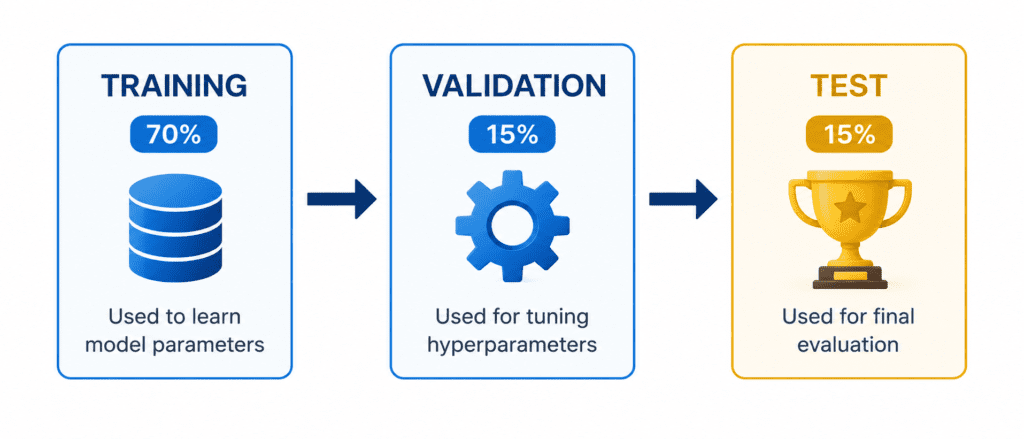
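To see this leak in numbers, here is a small simulation (a sketch using only NumPy). The labels are coin flips, so no model can truly beat 50% accuracy. We "tune" by trying 1,000 candidates and keeping whichever scores best on the test set. Each candidate is a random guesser that ignores its input, a hypothetical stand-in for one hyperparameter setting; the sizes (200 test rows, 10,000 fresh rows) are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_test, n_fresh, n_candidates = 200, 10_000, 1_000

# Labels are pure coin flips: no model can genuinely beat 50% accuracy.
y_test = rng.integers(0, 2, size=n_test)
y_fresh = rng.integers(0, 2, size=n_fresh)

# "Tuning" on the test set: try many candidates, keep the best test score.
# Each candidate is a random guesser (hypothetical stand-in for a setting).
best_test_acc = max(
    (rng.integers(0, 2, size=n_test) == y_test).mean()
    for _ in range(n_candidates)
)

# The winning guesser still ignores its input, so on genuinely new data
# its predictions are just fresh coin flips.
fresh_acc = (rng.integers(0, 2, size=n_fresh) == y_fresh).mean()

print(f"best test accuracy:  {best_test_acc:.3f}")
print(f"fresh-data accuracy: {fresh_acc:.3f}")
```

The best test accuracy lands clearly above 0.5 purely because it was selected as the maximum, while the same "winner" scores about 0.5 on fresh data. That gap is exactly the optimism you get when the test set drives your decisions.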

## The Solution: Three Sets

| Set | Size (typical) | Purpose | How Often Used |
|---|---|---|---|
| Training Set | 70-80% | Model learns parameters | Every epoch |
| Validation Set | 10-15% | Tune hyperparameters, compare models | After each training run |
| Test Set | 10-15% | Final honest evaluation | Once at the very end |

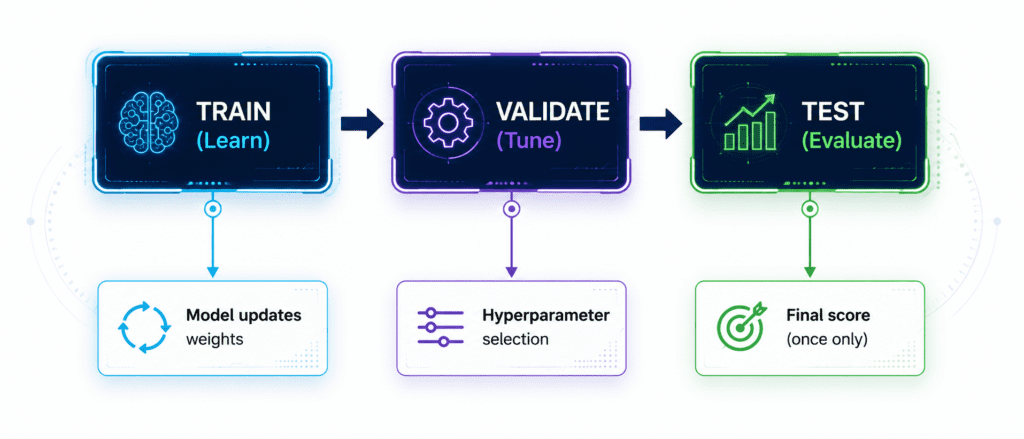

## The Process Flow: Why Three Sets Work

Step 1: Train on Training Set

Step 2: Check performance on Validation Set

Step 3: Change hyperparameters based on validation results

Step 4: Repeat steps 1-3 many times

Step 5: Evaluate once on Test Set

Result: the test set has never influenced any decision. It is truly unseen, so its score is an honest estimate of performance on new data.
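The five steps above can be sketched with scikit-learn as follows. This is a minimal example under stated assumptions: the data is synthetic (`make_classification`), the model is a `DecisionTreeClassifier`, and the hyperparameter being tuned is `max_depth` over an arbitrary candidate list. Swap in your own data, model, and search space.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic example data (assumption; use your own X and y)
X, y = make_classification(n_samples=2000, n_features=20, random_state=0)

# 70% train, 15% validation, 15% test
X_temp, X_test, y_temp, y_test = train_test_split(
    X, y, test_size=0.15, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X_temp, y_temp, test_size=0.15 / 0.85, random_state=0
)

# Steps 1-4: train on the training set, score on the validation set, repeat
best_model, best_depth, best_val_acc = None, None, -1.0
for depth in [1, 2, 4, 8, None]:
    model = DecisionTreeClassifier(max_depth=depth, random_state=0)
    model.fit(X_train, y_train)
    val_acc = model.score(X_val, y_val)  # accuracy on validation data
    if val_acc > best_val_acc:
        best_model, best_depth, best_val_acc = model, depth, val_acc

# Step 5: evaluate the chosen model exactly once on the untouched test set
test_acc = best_model.score(X_test, y_test)
print(f"max_depth={best_depth}  val={best_val_acc:.3f}  test={test_acc:.3f}")
```

A common variant refits the chosen setting on the training and validation data combined before the final evaluation. Either way, the test set is scored only once, after all choices are made.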

## Real-World Analogy

| ML Concept | School Analogy |
|---|---|
| Training Set | Homework problems (with answers) |
| Validation Set | Practice tests (used to improve study method) |
| Test Set | Final exam (seen only once) |

You would never give students the final exam answers before the exam. That is cheating. Same here.

## Common Mistake

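Mistake: Tuning hyperparameters on the test set, then reporting test-set accuracy.

Why it is wrong: Every decision you make by looking at the test score leaks information from the test set into your model. Your choices have effectively been fitted to the test set, so the reported score is overly optimistic.

Fix: Use the validation set for tuning. Touch the test set only once, at the very end.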

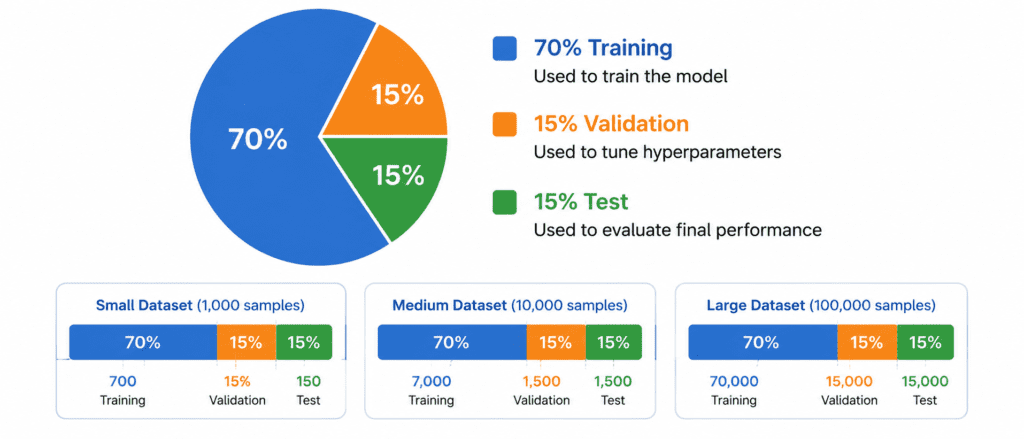
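One way to make "touch the test set only once" hard to break by accident is to wrap the test data in an object that refuses a second evaluation. This is a minimal sketch: `OneShotTestSet` is a hypothetical helper (not part of scikit-learn), and it assumes the model exposes a scikit-learn-style `score(X, y)` method.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression


class OneShotTestSet:
    """Hypothetical wrapper that allows exactly one evaluation on the test set."""

    def __init__(self, X_test, y_test):
        self._X, self._y = X_test, y_test
        self._used = False

    def evaluate(self, model):
        if self._used:
            raise RuntimeError("Test set already used; tune on the validation set instead.")
        self._used = True
        return model.score(self._X, self._y)


# Usage with synthetic data (assumption): first 400 rows train, last 100 test
X, y = make_classification(n_samples=500, random_state=0)
test_set = OneShotTestSet(X[400:], y[400:])
model = LogisticRegression(max_iter=1000).fit(X[:400], y[:400])

first_score = test_set.evaluate(model)  # allowed: the one final evaluation
try:
    test_set.evaluate(model)            # a second look is refused
    second_call_blocked = False
except RuntimeError:
    second_call_blocked = True
```

It cannot stop someone from copying the raw arrays, but it turns an accidental second look into a loud error instead of a silently inflated score.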

## How Much Data for Each Set?

| Dataset Size | Train % | Validation % | Test % |
|---|---|---|---|
| Small (< 10,000) | 70% | 15% | 15% |
| Medium (10,000–100,000) | 80% | 10% | 10% |
| Large (> 100,000) | 98% | 1% | 1% |

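Rule of thumb: keep enough data in validation and test to get reliable scores, ideally at least 1,000 examples each. For a model with about 90% accuracy, 1,000 test examples give a standard error of roughly one percentage point (√(0.9 × 0.1 / 1000) ≈ 0.0095).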
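The table and the rule of thumb can be turned into a small helper that converts percentages into example counts. This is a sketch: `split_sizes` is a hypothetical function name, the tier boundaries follow the table (10,000 and 100,000 count as "Medium"), and the warning threshold is the 1,000-example rule of thumb.

```python
import warnings


def split_sizes(n):
    """Hypothetical helper: (n_train, n_val, n_test) per the size table."""
    if n < 10_000:          # Small: 70 / 15 / 15
        val_frac = test_frac = 0.15
    elif n <= 100_000:      # Medium: 80 / 10 / 10
        val_frac = test_frac = 0.10
    else:                   # Large: 98 / 1 / 1
        val_frac = test_frac = 0.01

    n_val = round(n * val_frac)
    n_test = round(n * test_frac)
    n_train = n - n_val - n_test  # training gets whatever is left

    if min(n_val, n_test) < 1_000:
        warnings.warn(f"n={n}: fewer than 1,000 validation/test examples")
    return n_train, n_val, n_test


print(split_sizes(5_000))      # small dataset, triggers the warning
print(split_sizes(50_000))
print(split_sizes(1_000_000))  # 1% is still 10,000 examples
```

The large-dataset row works because 1% of a million examples is still 10,000, far above the 1,000-example floor; on a small dataset even 15% can fall short of it.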

## Quick Code Example (scikit-learn)

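```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

# Placeholder data so the snippet runs; replace with your own X and y
X, y = make_classification(n_samples=1000, random_state=42)

# First split: set aside 15% of the data as the test set
X_temp, X_test, y_temp, y_test = train_test_split(
    X, y, test_size=0.15, random_state=42
)

# Second split: take the validation set from the remaining 85%.
# 0.15 / 0.85 ≈ 0.176, so validation is 15% of the original data
# and training is the remaining 70%.
X_train, X_val, y_train, y_val = train_test_split(
    X_temp, y_temp, test_size=0.15 / 0.85, random_state=42
)
```

A simpler way using NumPy slicing: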

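```python
import numpy as np

# X and y must be NumPy arrays.
# Shuffle first so each split is a random sample; skip this for
# time-ordered data, where the test set should be the most recent rows.
rng = np.random.default_rng(42)
idx = rng.permutation(len(X))
X, y = X[idx], y[idx]

n = len(X)
train_end = int(n * 0.7)   # first 70% -> training
val_end = int(n * 0.85)    # next 15% -> validation, last 15% -> test

X_train, y_train = X[:train_end], y[:train_end]
X_val, y_val = X[train_end:val_end], y[train_end:val_end]
X_test, y_test = X[val_end:], y[val_end:]
```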