Cross Validation in Machine Learning is a technique used to evaluate machine learning models more reliably. #

“You split your data into train, validation, and test. But what if your validation set is just lucky? What if it contains easy examples? What if the test set contains hard ones? Your score will be wrong. K-Fold Cross Validation fixes this by testing your model multiple times on different chunks of data instead of relying on a single split.”

The Problem with a Single Validation Set #

A single validation set leaves your score to chance: the result depends on which examples happened to land in it.

| Scenario | Problem |
|---|---|
| Easy validation set | Model looks better than it actually is |
| Hard validation set | Model looks worse than it actually is |
| Unrepresentative validation set | Your hyperparameters are wrong for real data |

Example: You are building a digit classifier. By random chance, your validation set has mostly the digit “1” (which is easy to classify). Your model scores 98%. But when deployed, it fails on digit “8”. The validation set lied to you.

Solution: Test your model on multiple different validation sets. Average the results.
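To see how much a single split can move the score, here is a minimal sketch. It assumes scikit-learn's bundled digits dataset and a decision tree as stand-ins (neither is prescribed by this article), and it changes nothing but the random seed of the split.

```python
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Assumption: the bundled digits dataset stands in for "your data"
X, y = load_digits(return_X_y=True)

# Same model, same data: only the random train/validation split changes
single_split_scores = []
for seed in range(10):
    X_train, X_val, y_train, y_val = train_test_split(
        X, y, test_size=0.2, random_state=seed
    )
    model = DecisionTreeClassifier(random_state=0)
    model.fit(X_train, y_train)
    single_split_scores.append(model.score(X_val, y_val))

spread = max(single_split_scores) - min(single_split_scores)
print(f"Lowest:  {min(single_split_scores):.4f}")
print(f"Highest: {max(single_split_scores):.4f}")
print(f"Spread:  {spread:.4f}")
```

The gap between the lowest and highest score is the "luck" that a single validation set hides from you.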

What is Cross-Validation? #

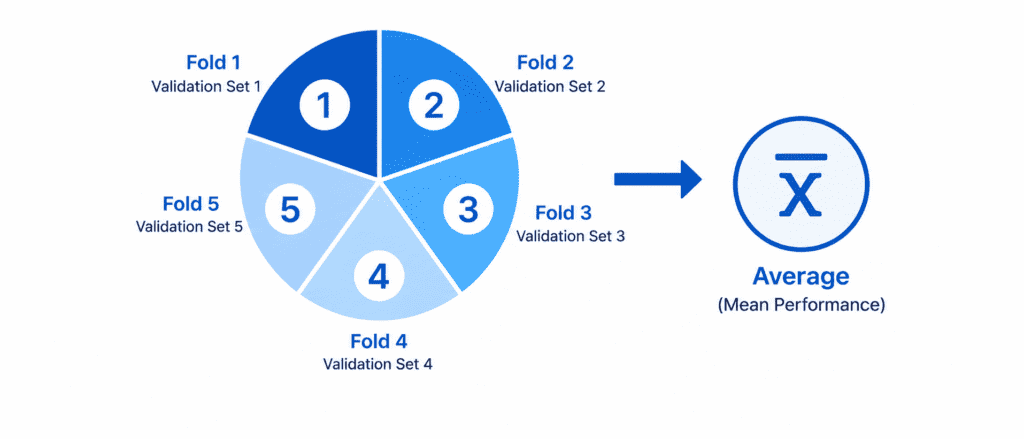

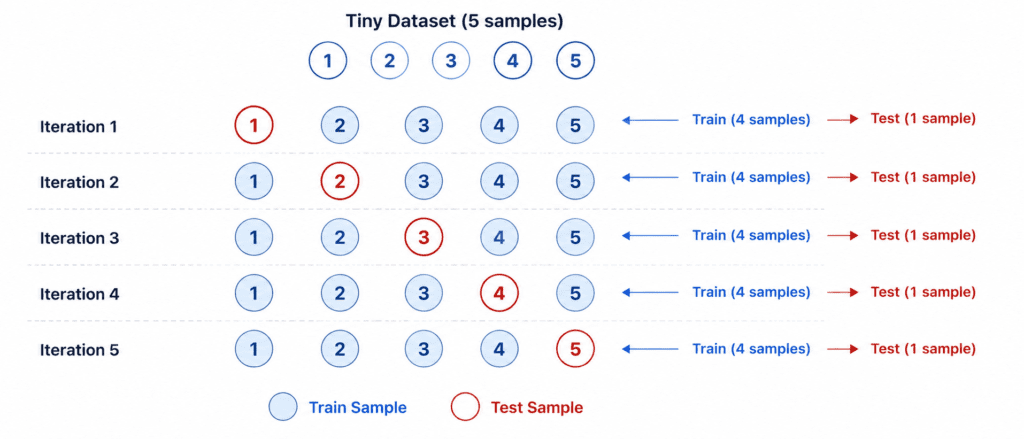

Simple Definition: Split your data into k equal parts. Train on k-1 parts. Validate on the remaining 1 part. Repeat k times. Average the scores.

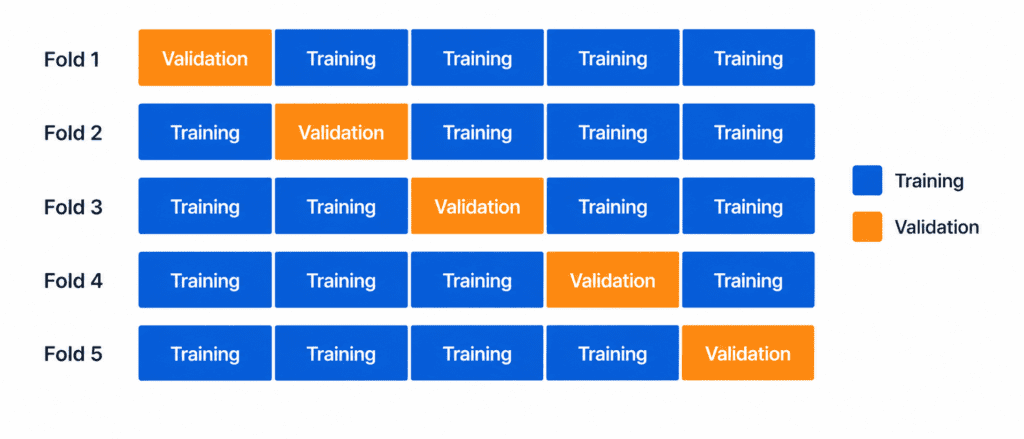

The K-Fold Process:

    Fold 1: [VALID] [TRAIN] [TRAIN] [TRAIN] [TRAIN] → Score 1
    Fold 2: [TRAIN] [VALID] [TRAIN] [TRAIN] [TRAIN] → Score 2
    Fold 3: [TRAIN] [TRAIN] [VALID] [TRAIN] [TRAIN] → Score 3
    Fold 4: [TRAIN] [TRAIN] [TRAIN] [VALID] [TRAIN] → Score 4
    Fold 5: [TRAIN] [TRAIN] [TRAIN] [TRAIN] [VALID] → Score 5

    Final Score = (Score 1 + Score 2 + Score 3 + Score 4 + Score 5) / 5

Each data point gets validated exactly once. No data is wasted.
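The claim that every data point is validated exactly once can be checked directly. The sketch below uses scikit-learn's `KFold` on 20 placeholder samples (the sample count and seed are arbitrary choices for illustration) and counts how often each index lands in a validation fold.

```python
import numpy as np
from sklearn.model_selection import KFold

n_samples = 20
X = np.arange(n_samples).reshape(-1, 1)  # placeholder features

kf = KFold(n_splits=5, shuffle=True, random_state=0)
times_validated = np.zeros(n_samples, dtype=int)

for fold, (train_idx, val_idx) in enumerate(kf.split(X), start=1):
    times_validated[val_idx] += 1
    # Train and validation indices never overlap within a fold
    assert set(train_idx).isdisjoint(val_idx)
    print(f"Fold {fold}: train={len(train_idx)} valid={len(val_idx)}")

print("Times each sample was validated:", times_validated)
```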

K-Fold Cross-Validation (Standard) #

How it works:

- Shuffle the data randomly

- Split into k equal-sized folds

- For each fold: Train on k-1 folds, validate on the remaining fold

- Record the validation score

- After k rounds, compute the average and standard deviation
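The steps above can be written out by hand, which makes it clearer what library helpers do for you. This is a sketch under assumptions: the digits dataset, a decision tree, and k=5 are illustrative choices, not part of the procedure itself.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.tree import DecisionTreeClassifier

X, y = load_digits(return_X_y=True)  # assumed example dataset
k = 5

# Step 1: shuffle the row indices
rng = np.random.default_rng(42)
indices = rng.permutation(len(X))

# Step 2: split the shuffled indices into k nearly equal folds
folds = np.array_split(indices, k)

fold_scores = []
for i in range(k):
    # Step 3: fold i is the validation set, the other k-1 folds are training
    val_idx = folds[i]
    train_idx = np.concatenate([folds[j] for j in range(k) if j != i])

    model = DecisionTreeClassifier(random_state=0)
    model.fit(X[train_idx], y[train_idx])

    # Step 4: record the validation score
    fold_scores.append(model.score(X[val_idx], y[val_idx]))

# Step 5: summarize across the k rounds
fold_scores = np.array(fold_scores)
print(f"Mean: {fold_scores.mean():.4f}")
print(f"Std:  {fold_scores.std():.4f}")
```

A fresh model is created inside the loop so that nothing learned in one round leaks into the next.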

Choosing k (number of folds):

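| k value | Pros | Cons | When to use |
|---|---|---|---|
| k=5 | Fast, less computation | Each model trains on only 80% of the data, so the estimate is slightly more pessimistic | Large datasets (100k+ samples) |
| k=10 | Balanced, standard choice | More computation | Most common default |
| k=20 | Each model trains on 95% of the data, so the estimate has low bias | Slow; small validation folds make individual fold scores noisier | Small datasets |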

Default recommendation: k=10
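The trade-off in the table can be observed by running the same model with different values of k. The sketch below assumes the digits dataset and a decision tree purely for illustration; timings and scores will differ on your own data and hardware.

```python
import time
from sklearn.datasets import load_digits
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = load_digits(return_X_y=True)  # assumed example dataset
results = {}

for k in (5, 10, 20):
    model = DecisionTreeClassifier(random_state=0)
    start = time.perf_counter()
    scores = cross_val_score(model, X, y, cv=k)
    elapsed = time.perf_counter() - start
    results[k] = scores
    print(
        f"k={k:>2}: fits={len(scores):>2}  train fraction={(k - 1) / k:.0%}  "
        f"mean={scores.mean():.4f}  std={scores.std():.4f}  time={elapsed:.2f}s"
    )
```

Larger k means more fits and a larger share of the data in each training set, at the cost of smaller validation folds.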

from sklearn.model_selection import cross_val_score

from sklearn.ensemble import RandomForestClassifier

model = RandomForestClassifier()

# 10-fold cross-validation

scores = cross_val_score(model, X, y, cv=10)

print(f"Scores: {scores}")

print(f"Mean: {scores.mean():.4f}")

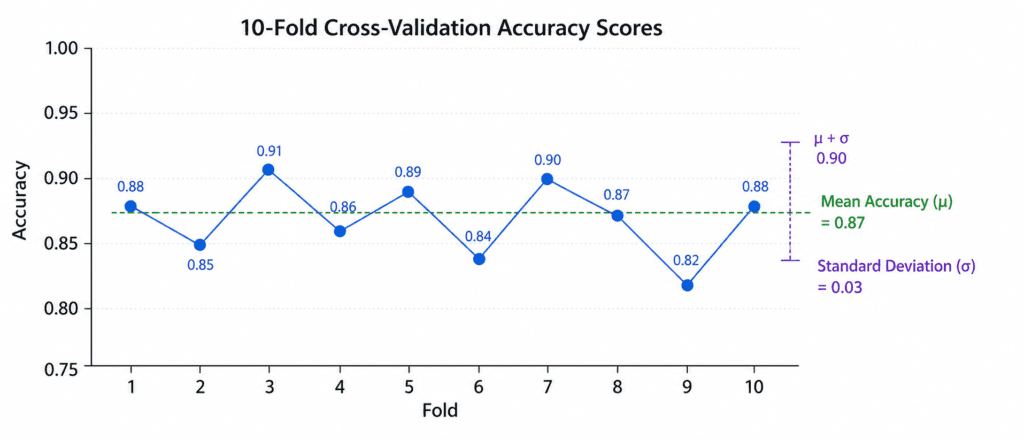

print(f"Std: {scores.std():.4f}")Output interpretation:

- Mean = expected performance on new data

- Standard deviation = how much performance varies across folds

- Large std = model is unstable (its score depends heavily on which data it sees), so treat the mean with more caution

Stratified K-Fold (For Imbalanced Classes) #

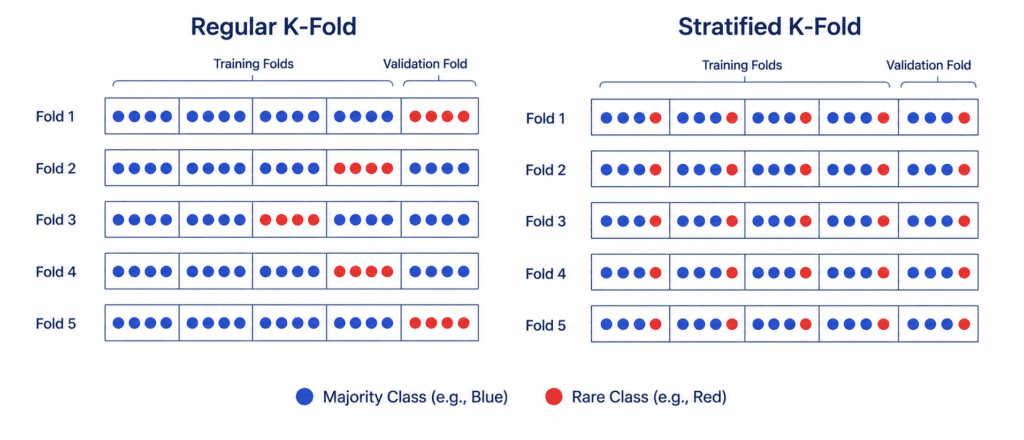

The Problem: Regular K-Fold might put all samples of a rare class in one fold.

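Example: You have 90% “No Fraud” and 10% “Fraud”. An unlucky split, or data sorted by label, might put every “Fraud” case in the validation set of Fold 3. The model for that fold trains on no fraud cases at all and is then validated on all of them. It never learned what fraud looks like, so the score for that fold will be terrible.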

Solution: Stratified K-Fold preserves the class percentage in every fold.

How it works:

- Each fold has the same % of each class

- If dataset has 90% No Fraud, 10% Fraud → every fold has 90% No Fraud, 10% Fraud
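The difference is easy to see on a toy label vector. The sketch below builds made-up labels matching the 90/10 example, deliberately sorted by class and split without shuffling (a worst case for regular K-Fold), and prints the share of fraud in each validation fold.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Made-up labels: 90 "No Fraud" (0) followed by 10 "Fraud" (1), sorted by class
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((len(y), 1))  # features are irrelevant for the split itself


def fraud_share_per_fold(splitter):
    """Return the fraction of fraud labels in each validation fold."""
    return [float(y[val_idx].mean()) for _, val_idx in splitter.split(X, y)]


regular = fraud_share_per_fold(KFold(n_splits=10))
stratified = fraud_share_per_fold(StratifiedKFold(n_splits=10))

print("Regular K-Fold:   ", regular)
print("Stratified K-Fold:", stratified)
```

Without shuffling, regular K-Fold puts all fraud cases into the last fold, while Stratified K-Fold keeps 10% fraud in every fold. Shuffling makes the extreme case unlikely for regular K-Fold, but it still does not guarantee equal proportions.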

Comparison:

| Method | Class Balance | Best for |
|---|---|---|
| Regular K-Fold | May be unbalanced | Balanced datasets |
| Stratified K-Fold | Maintains balance in every fold | Imbalanced datasets |

    from sklearn.model_selection import StratifiedKFold, cross_val_score

    # model, X, y: the same objects used in the K-Fold example above
    skf = StratifiedKFold(n_splits=10, shuffle=True, random_state=42)
    scores = cross_val_score(model, X, y, cv=skf)

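When to use: Default to Stratified K-Fold for classification problems. It is safer. Use regular K-Fold for regression, where there are no class labels to stratify on. Note that when you pass an integer such as cv=10 to cross_val_score with a classifier, scikit-learn already uses Stratified K-Fold (without shuffling) behind the scenes.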

Leave-One-Out Cross-Validation (LOOCV) #

The Extreme Version: Set k = number of samples. Train on all samples except one. Validate on that one sample. Repeat for every sample.

Example with 100 samples:

- Train on samples 2 to 100, validate on sample 1

- Train on samples 1 and 3 to 100, validate on sample 2

- Repeat 100 times
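The 100-sample example can be reproduced with scikit-learn's `LeaveOneOut` splitter. The sketch below uses placeholder features only, since the point is the shape of the splits rather than any model.

```python
import numpy as np
from sklearn.model_selection import LeaveOneOut

X = np.arange(100).reshape(-1, 1)  # 100 placeholder samples

loo = LeaveOneOut()
print("Number of rounds:", loo.get_n_splits(X))

split_sizes = []
for train_idx, val_idx in loo.split(X):
    split_sizes.append((len(train_idx), len(val_idx)))

print("First round (train, valid):", split_sizes[0])
print("Last round  (train, valid):", split_sizes[-1])
```

Because each validation set holds a single sample, each per-round accuracy for a classifier is either 0 or 1; only the average over all rounds is informative.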

Pros and Cons:

| Aspect | Rating | Explanation |
|---|---|---|
| Bias | ✅ Very low | Almost all data used for training each time |
| Variance | ❌ High | Models are very similar, scores are correlated |
| Computation | ❌ Very slow | Must train n models (n = dataset size) |
| When to use | ⚠️ Rarely | Only for very small datasets (< 500 samples) |

    from sklearn.model_selection import LeaveOneOut

    loo = LeaveOneOut()
    scores = cross_val_score(model, X, y, cv=loo)

Warning: If you have 10,000 samples, LOOCV trains 10,000 models. Depending on the model, this could take hours or days. Do not use LOOCV on large datasets.
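Before committing to LOOCV, a back-of-the-envelope estimate helps. The sketch below times one fit and multiplies by the number of fits; it assumes the digits dataset and a random forest as examples, and it ignores prediction time and parallelism, so treat the result as an order of magnitude only.

```python
import time
from sklearn.datasets import load_digits
from sklearn.ensemble import RandomForestClassifier

X, y = load_digits(return_X_y=True)  # assumed example dataset
n_samples = len(X)

# Time a single fit on the full data as a stand-in for one CV round
model = RandomForestClassifier(random_state=0)
start = time.perf_counter()
model.fit(X, y)
seconds_per_fit = time.perf_counter() - start

# Rough estimate: total time is about (number of fits) * (time per fit)
estimated_seconds = {
    "10-fold": 10 * seconds_per_fit,
    "LOOCV": n_samples * seconds_per_fit,
}
for name, seconds in estimated_seconds.items():
    print(f"{name:>7}: about {seconds:,.1f} s")
```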

Comparison Table #

| Method | Number of Models | Computation | Best For |
|---|---|---|---|
| K-Fold (k=10) | 10 models | Fast | Most problems |
| Stratified K-Fold | 10 models | Fast | Classification with imbalanced classes |
| Leave-One-Out | N models | Very Slow | Tiny datasets (< 500 samples) |

When to Use Cross-Validation #

| Use Case | Do you need CV? | Why |
|---|---|---|
| Tuning hyperparameters | ✅ Yes | Need stable estimate of performance |
| Comparing two models | ✅ Yes | Need to know which is truly better |
| Small dataset (< 1,000 samples) | ✅ Yes | Cannot afford a separate validation set |
| Large dataset (> 100,000 samples) | ⚠️ Maybe | Simple train/val split might be enough |
| Final test set evaluation | ❌ No | Test set is only for final check |
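The first and last rows of the table fit together in one workflow: cross-validate on the development data to tune, then touch the test set once. Here is a minimal sketch using scikit-learn's `GridSearchCV`; the dataset, the decision tree, and the `max_depth` candidates are illustrative assumptions.

```python
from sklearn.datasets import load_digits
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_digits(return_X_y=True)  # assumed example dataset

# Hold out a test set first; it plays no part in tuning
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# Cross-validation on the development data picks the hyperparameter
param_grid = {"max_depth": [4, 8, 16]}  # illustrative values only
search = GridSearchCV(DecisionTreeClassifier(random_state=0), param_grid, cv=5)
search.fit(X_dev, y_dev)

print("Best max_depth:", search.best_params_["max_depth"])
print(f"Mean CV accuracy: {search.best_score_:.4f}")

# One final check on the untouched test set
test_accuracy = search.score(X_test, y_test)
print(f"Test accuracy: {test_accuracy:.4f}")
```

By default `GridSearchCV` refits the best configuration on all development data, which is the model scored on the test set here.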

Quick Quiz #

Q1: Your dataset has 1,000 samples. You run 10-fold CV. How many models do you train? How many samples in each training set?

A1: 10 models. Each training set has 900 samples (90% of 1,000). Each validation set has 100 samples.
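The arithmetic in A1 can be confirmed with a few lines. This sketch uses 1,000 placeholder rows, since only the split sizes matter.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.zeros((1000, 1))  # 1,000 placeholder samples

# One (train size, validation size) pair per fold, i.e. per trained model
sizes = [(len(train_idx), len(val_idx)) for train_idx, val_idx in KFold(n_splits=10).split(X)]

print("Models trained:", len(sizes))
print("Distinct (train, valid) sizes:", set(sizes))
```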

Q2: Your dataset has 90% Class A and 10% Class B. You use regular K-Fold. What could go wrong?

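A2: The class ratio can differ a lot from fold to fold. In the worst case, a training split gets 0% Class B, so that model never learns to predict Class B. A validation fold can also end up with no Class B at all, so its score says nothing about the rare class. Use Stratified K-Fold instead.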

Q3: You have 200,000 samples. Should you use Leave-One-Out CV?

A3: No. That would train 200,000 models, each on 199,999 samples. For most models that is impractical. Use 5-fold or 10-fold CV instead.