You trained a model. It gives predictions. But how good are they? Are they close to reality? Far off? Sometimes wrong in a useful way? You need numbers to tell you. These numbers are evaluation metrics. They separate good models from bad ones.

The Setup

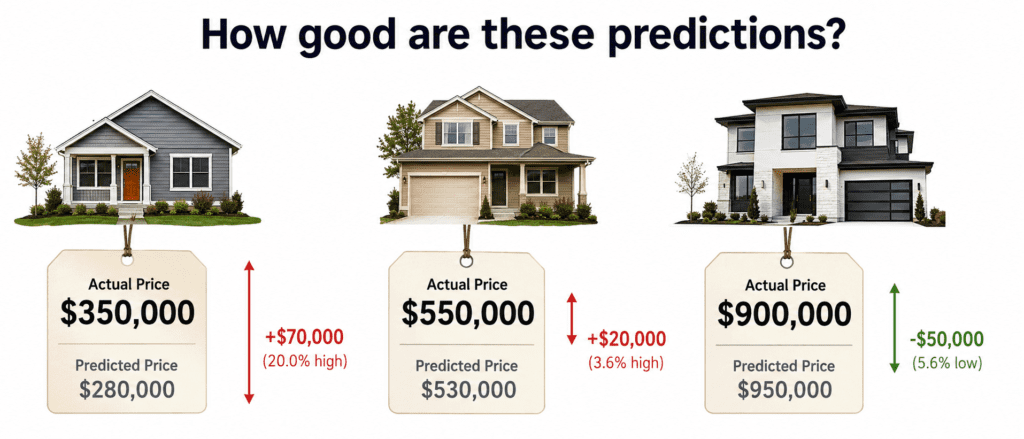

Imagine you are predicting house prices.

| Actual Price | Your Prediction | Error (Actual - Prediction) |
|---|---|---|
| $500,000 | $480,000 | $20,000 (off by 4%) |
| $300,000 | $310,000 | -$10,000 (off by 3.3%) |
| $1,000,000 | $900,000 | $100,000 (off by 10%) |

How do you measure overall performance? You need one number that summarizes all of these errors.

The Five Main Metrics

| Metric | What It Measures | Unit | Sensitive to Outliers? |
|---|---|---|---|
| MAE | Average absolute error | Same as target | Less (errors count linearly) |
| MSE | Average squared error | Square of the target's unit | Yes |
| RMSE | Square root of MSE | Same as target | Yes |
| R² | Proportion of variance explained | Unitless; at most 1 (can be negative) | Yes (built from squared errors) |
| MAPE | Average absolute percentage error | Percentage | Less, but unstable when actual values are near zero |

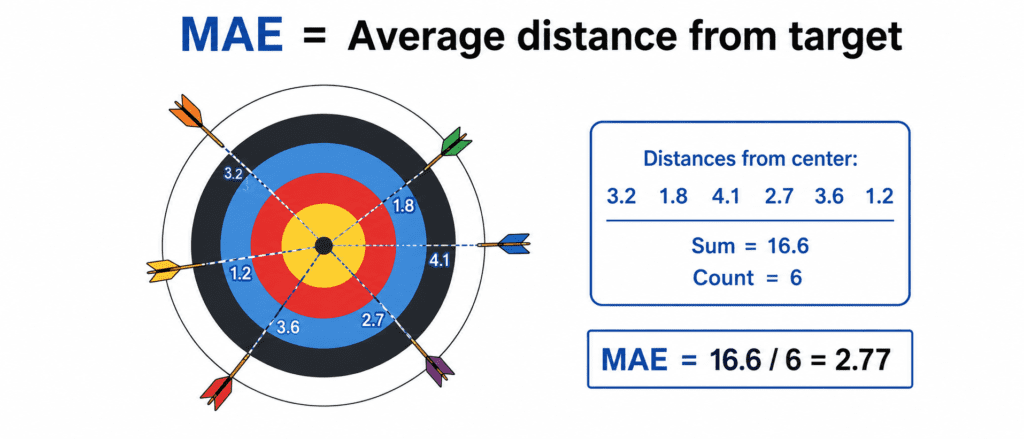

1. MAE (Mean Absolute Error)

What it is: The average of the absolute differences between actual and predicted values.

The Formula:

MAE = (1/n) × Σ |actual - prediction|

Example:

| Actual | Prediction | Absolute Error |
|---|---|---|
| 500k | 480k | 20k |
| 300k | 310k | 10k |
| 1000k | 900k | 100k |

MAE = (20 + 10 + 100) / 3 = 43.3k

Interpretation: “On average, my predictions are off by $43,300.”
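To see the MAE formula as code, here is a minimal plain-Python sketch with no libraries. The helper name `mean_abs_error` is our own and is not a library function. scikit-learn's `mean_absolute_error` computes the same quantity.

```python
def mean_abs_error(actual, predicted):
    """Average of |actual - predicted| over all examples."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


actual = [500_000, 300_000, 1_000_000]
predicted = [480_000, 310_000, 900_000]

mae = mean_abs_error(actual, predicted)
print(f"MAE: ${mae:,.0f}")  # MAE: $43,333
```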

Pros:

- Easy to understand

- Less sensitive to outliers (a $1M error counts the same as ten $100k errors)

Cons:

- Less convenient for gradient-based optimization, because the absolute value has a kink at zero error where its derivative is undefined

When to use: When outliers are expected, or when you want an intuitive explanation.

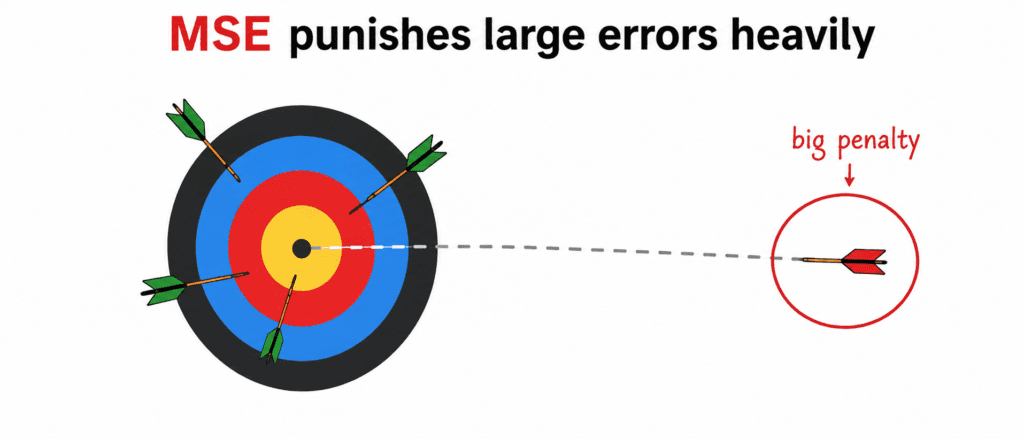

2. MSE (Mean Squared Error)

What it is: The average of the squared differences between actual and predicted values.

The Formula:

MSE = (1/n) × Σ (actual - prediction)²

Example:

| Actual | Prediction | Error | Squared Error ($²) |
|---|---|---|---|
| 500k | 480k | 20k | 400M |
| 300k | 310k | -10k | 100M |
| 1000k | 900k | 100k | 10,000M |

MSE = (400M + 100M + 10,000M) / 3 = 3,500M

Interpretation: Hard to interpret because units are squared ($²). Not intuitive.
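The same MSE example in code, as a minimal sketch. It prints each squared error and then averages them. `mean_squared_err` is a hypothetical helper name of our own.

```python
def mean_squared_err(actual, predicted):
    """Average of (actual - predicted)**2; units are the target's units squared."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


actual = [500_000, 300_000, 1_000_000]
predicted = [480_000, 310_000, 900_000]

squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
print(squared_errors)  # [400000000, 100000000, 10000000000]

mse = mean_squared_err(actual, predicted)
print(f"MSE: {mse:,.0f} ($²)")  # MSE: 3,500,000,000 ($²)
```

The single $100k miss contributes 10,000M of the 10,500M total, about 95%. This is the practical meaning of "heavily penalizes large errors."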

Pros:

- Mathematically convenient (differentiable)

- Heavily penalizes large errors (good for some problems)

Cons:

- Units not interpretable

- Very sensitive to outliers

When to use: When large errors are unacceptable. When you need mathematical convenience for optimization.
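The claim from the MAE section (a $1M error counts the same as ten $100k errors) can be checked against MSE directly. This sketch compares two made-up scenarios over ten predictions each. The helper names `mean_abs` and `mean_sq` are our own.

```python
def mean_abs(errors):
    """MAE over a list of signed errors."""
    return sum(abs(e) for e in errors) / len(errors)


def mean_sq(errors):
    """MSE over a list of signed errors."""
    return sum(e * e for e in errors) / len(errors)


# Scenario A: ten predictions, one off by $1M and the other nine exact.
one_big_miss = [1_000_000] + [0] * 9
# Scenario B: ten predictions, each off by $100k.
ten_small_misses = [100_000] * 10

for name, errs in [("one $1M miss", one_big_miss), ("ten $100k misses", ten_small_misses)]:
    print(f"{name}: MAE = {mean_abs(errs):,.0f}, MSE = {mean_sq(errs):,.0f}")
```

Both scenarios have the same MAE (100,000), but the single large miss gives an MSE ten times higher (100,000M vs 10,000M).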

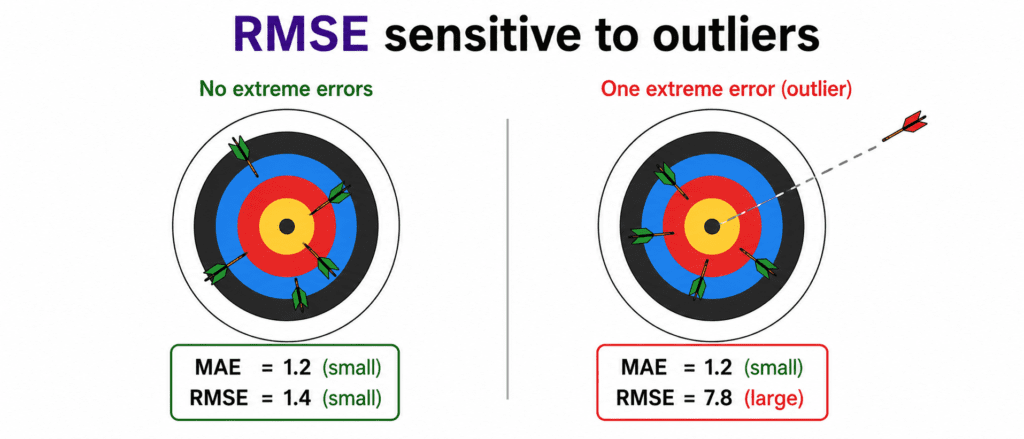

3. RMSE (Root Mean Squared Error)

What it is: The square root of MSE, which brings the units back to those of the target.

The Formula:

RMSE = √MSE

Example:

MSE = 3,500M

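RMSE = √3,500M ≈ 59.2k

Interpretation: "My typical error is about $59,200." RMSE is not a plain average of the errors: squaring weights large misses more heavily, which is why it is higher than the MAE of $43,300.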
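Here is a short sketch of the RMSE step. It uses only the standard library's `math.sqrt` and computes MAE alongside for comparison.

```python
import math

actual = [500_000, 300_000, 1_000_000]
predicted = [480_000, 310_000, 900_000]

errors = [a - p for a, p in zip(actual, predicted)]
mse = sum(e * e for e in errors) / len(errors)    # in dollars squared
rmse = math.sqrt(mse)                              # back in dollars
mae = sum(abs(e) for e in errors) / len(errors)    # for comparison

print(f"RMSE: ${rmse:,.0f}")  # RMSE: $59,161
print(f"MAE:  ${mae:,.0f}")   # MAE:  $43,333
```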

Pros:

- Same units as target (interpretable)

- Still penalizes large errors

Cons:

- Still sensitive to outliers

When to use: It is the most common default for regression, because it balances interpretability (same units as the target) with sensitivity to large errors.

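MAE vs RMSE:

RMSE is always greater than or equal to MAE. The size of the gap tells you how uneven your errors are.

| If Errors Are | MAE and RMSE are |
|---|---|
| All small and similar | Close to each other (equal if every error has the same size) |
| Mostly small but some huge | RMSE > MAE (sometimes much larger) |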
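The MAE vs RMSE table can be checked with two made-up error lists. In the first, every error has the same size. In the second, there is a single large error. The helper names are our own. The sketch relies on a general fact: RMSE is never smaller than MAE, and the two are equal exactly when every absolute error is the same.

```python
import math


def mae(errors):
    """Mean absolute error of a list of signed errors."""
    return sum(abs(e) for e in errors) / len(errors)


def rmse(errors):
    """Root mean squared error of a list of signed errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# Made-up error lists (in dollars) for illustration.
similar = [10_000, -10_000, 10_000, -10_000]   # every error has the same size
one_huge = [1_000, 1_000, 1_000, 100_000]      # mostly small, one huge

for name, errs in [("similar", similar), ("one huge", one_huge)]:
    print(f"{name}: MAE = {mae(errs):,.0f}, RMSE = {rmse(errs):,.0f}")
```

For the equal-size errors, both metrics come out at 10,000. For the lopsided list, MAE is 25,750 while RMSE is about 50,007, nearly double.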

4. R² (R-Squared / Coefficient of Determination)

What it is: How much better your model is than simply predicting the mean.

The Formula:

R² = 1 - (Σ(actual - prediction)² / Σ(actual - mean)²)

Interpretation:

- R² = 1 → Perfect predictions (error = 0)

- R² = 0 → Model performs the same as always predicting the average

- R² < 0 → Model performs WORSE than always predicting the average

Example:

| Actual | Mean (500k) | Your Prediction |

|---|---|---|

| 500k | 500k | 480k |

| 300k | 500k | 310k |

| 1000k | 500k | 900k |

Your errors: (20² + 10² + 100²) = 10,500

Mean baseline errors: (0² + 200² + 500²) = 290,000

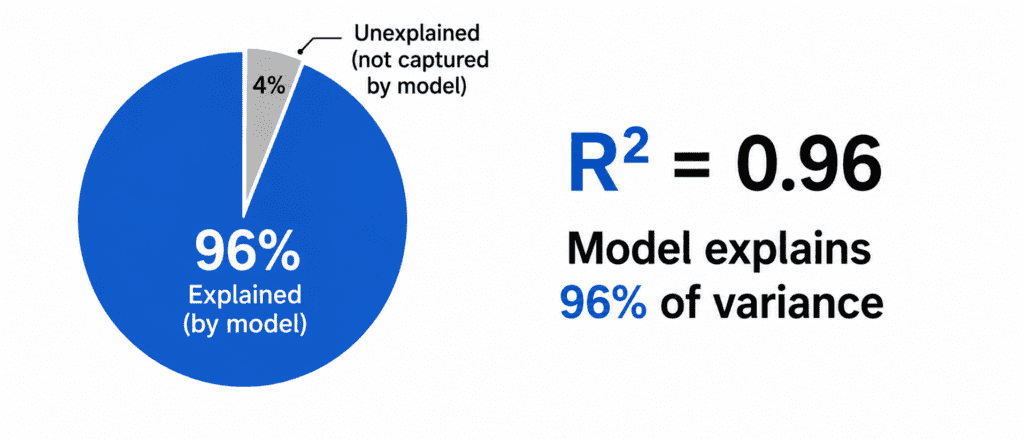

R² = 1 – (10,500 / 290,000) = 0.96

Interpretation: “Your model explains 96% of the variance in house prices.”
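Here is the R² calculation as a minimal plain-Python sketch. Prices are in thousands of dollars to match the hand calculation, and the baseline uses the mean of the three actual prices. `r_squared` is our own helper. scikit-learn's `r2_score` follows the same definition for a single target. The last three lines check the reference points from the interpretation list (1, 0, and negative).

```python
def r_squared(actual, predicted):
    """1 - SS_res / SS_tot, using the mean of `actual` as the baseline."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    if ss_tot == 0:
        raise ValueError("R² is undefined when all actual values are identical")
    return 1 - ss_res / ss_tot


# Prices in thousands of dollars.
actual = [500, 300, 1000]
predicted = [480, 310, 900]

mean = sum(actual) / len(actual)
print(mean)                                                   # 600.0
print(sum((a - p) ** 2 for a, p in zip(actual, predicted)))   # 10500
print(sum((a - mean) ** 2 for a in actual))                   # 260000.0
print(f"{r_squared(actual, predicted):.4f}")                  # 0.9596

# Reference points: perfect, always-the-mean, and worse than the mean.
print(r_squared(actual, actual))                   # 1.0
print(r_squared(actual, [mean] * len(actual)))     # 0.0
print(r_squared(actual, [1000, 1000, 300]) < 0)    # True
```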

Pros:

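- Scale-free (at most 1, and usually between 0 and 1 for a reasonable model)

- Unitless, so it is easier to compare across problems than MAE or RMSE

Cons:

- Can be negative (confusing for beginners)

- On training data, adding features never lowers R², even useless ones, so a high training R² can hide overfitting

When to use: Explaining model quality to non-technical people, or summarizing it on a unitless scale. Be careful when comparing R² across different datasets. It depends on how spread out the target values are, so the same size of error can produce different R² values.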
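The "adding useless features" caveat can be demonstrated with ordinary least squares in NumPy. This sketch uses synthetic data, under the assumption that price is roughly proportional to size plus noise. It fits one model on size only and another with an extra random feature, then reports R² on the training data. `train_r2` is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (an assumption for illustration): size in m², price ≈ $3,000 per m² plus noise.
n = 50
size = rng.uniform(50, 300, n)
price = 3_000 * size + rng.normal(0, 50_000, n)
noise_feature = rng.normal(size=n)  # has no relationship to price


def train_r2(X, y):
    """Fit least squares with an intercept and return R² on the same (training) data."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    residuals = y - X1 @ coef
    return 1 - np.sum(residuals ** 2) / np.sum((y - y.mean()) ** 2)


r2_size_only = train_r2(size, price)
r2_with_noise = train_r2(np.column_stack([size, noise_feature]), price)
print(f"training R², size only:    {r2_size_only:.5f}")
print(f"training R², size + noise: {r2_with_noise:.5f}")
```

Least squares could always set the noise feature's coefficient to zero, so the larger model's training R² cannot be lower. On a held-out test set, the useless feature can make R² worse.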

5. MAPE (Mean Absolute Percentage Error)

What it is: The average absolute percentage error.

The Formula:


MAPE = (1/n) × Σ |(actual - prediction) / actual| × 100%

Example:

| Actual | Prediction | Percentage Error |

|---|---|---|

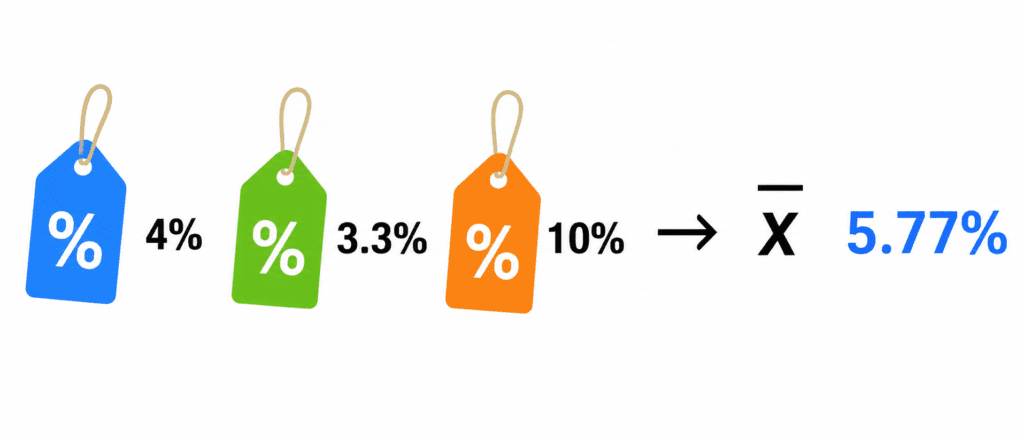

| 500k | 480k | 4% |

| 300k | 310k | 3.3% |

| 1000k | 900k | 10% |

MAPE = (4 + 3.33 + 10) / 3 ≈ 5.78%

Interpretation: "On average, my predictions are off by about 5.8%."
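Here is a minimal plain-Python MAPE sketch. It includes a guard for zero actual values, which is our own design choice: the function raises an error rather than returning infinity. The helper name `mape` is ours. If you use scikit-learn's `mean_absolute_percentage_error` instead, note that it returns a fraction (0.0578 here), not a percentage.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, returned in percent."""
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return 100 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


actual = [500_000, 300_000, 1_000_000]
predicted = [480_000, 310_000, 900_000]
print(f"MAPE: {mape(actual, predicted):.2f}%")  # MAPE: 5.78%

# A near-zero actual value dominates the average.
print(f"{mape([500_000, 0.5], [480_000, 5.0]):.0f}%")  # 452%
```

The second call shows the near-zero problem. A miss of 4.5 on an actual value of 0.5 is a 900% error, which pushes the average to 452% even though the other prediction is only 4% off.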

Pros:

- Easy to explain (“off by about X%”)

- Scale-independent

Cons:

- Undefined when any actual value is zero (division by zero)

- Unstable when actual values are near zero: a tiny denominator turns a small absolute miss into a huge percentage

When to use: When errors scale with value size. When explaining to business people.

Complete Code Example

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score

# Actual and predicted values
y_true = np.array([500000, 300000, 1000000])
y_pred = np.array([480000, 310000, 900000])

# Calculate metrics
mae = mean_absolute_error(y_true, y_pred)
mse = mean_squared_error(y_true, y_pred)
rmse = np.sqrt(mse)
r2 = r2_score(y_true, y_pred)

# Manual MAPE, in percent (assumes no actual value is zero)
mape = np.mean(np.abs((y_true - y_pred) / y_true)) * 100

print(f"MAE: ${mae:,.0f}")
print(f"MSE: {mse:,.0f} ($²)")
print(f"RMSE: ${rmse:,.0f}")
print(f"R²: {r2:.3f}")
print(f"MAPE: {mape:.1f}%")
```

Output:

```text
MAE: $43,333
MSE: 3,500,000,000 ($²)
RMSE: $59,161
R²: 0.960
MAPE: 5.8%
```

Important Note: Train vs Test

Always evaluate on the test set, not the training set.

| If you evaluate on | You learn |
|---|---|
| Training set | How well the model fits data it has already seen (overly optimistic) |
| Test set | How well the model generalizes to new data (real performance) |

Rule: Never look at test metrics until the very end.
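To see why training-set metrics mislead, this sketch uses a deliberately overfit "model": a 1-nearest-neighbor lookup that returns the price of the closest training house. The data are synthetic, under the assumption that price is about $3,000 per square meter plus noise, and every name here is hypothetical. Scored on its own training data, the model is perfect. On the held-out test houses, it is not.

```python
import random

random.seed(42)

# Synthetic data (hypothetical): 40 distinct house sizes, price ≈ 3,000 per m² plus noise.
sizes = random.sample(range(50, 300), 40)
prices = [3_000 * s + random.gauss(0, 50_000) for s in sizes]

train_x, test_x = sizes[:30], sizes[30:]
train_y, test_y = prices[:30], prices[30:]


def nearest_neighbor_predict(x):
    """Memorizing 'model': return the price of the closest training house."""
    i = min(range(len(train_x)), key=lambda j: abs(train_x[j] - x))
    return train_y[i]


def mae(actual, predicted):
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


train_mae = mae(train_y, [nearest_neighbor_predict(x) for x in train_x])
test_mae = mae(test_y, [nearest_neighbor_predict(x) for x in test_x])
print(f"train MAE: ${train_mae:,.0f}")  # $0: it has seen every answer
print(f"test MAE:  ${test_mae:,.0f}")
```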

Quick Quiz

Q1: Your RMSE is $50,000. Your MAE is $30,000. What does this tell you?

A1: There are some large errors. RMSE is always at least as large as MAE, but a gap this wide means a few large errors (which RMSE squares) are pulling it up.

Q2: Your R² is -0.5. What does this mean?

A2: Your model is worse than simply predicting the average. Something is very wrong.

Q3: You are predicting stock prices. A 10% error is fine. A 100% error is terrible. Which metric?

A3: RMSE or MSE. They penalize large errors more heavily.

Q4: Your actual values include zeros. Can you use MAPE?

A4: No. MAPE divides by the actual value, so any zero makes it undefined (division by zero).

Key Takeaways (5 Lines)

- MAE = Average absolute error. Easy to understand. Less sensitive to outliers.

- RMSE = Default choice. Same units as target. Penalizes large errors.

- R² = How much better than predicting the average. Usually between 0 and 1 (at most 1, can be negative).

- MAPE = Average absolute percentage error. Great for business explanations.

- Always evaluate on the test set, never on the training set.