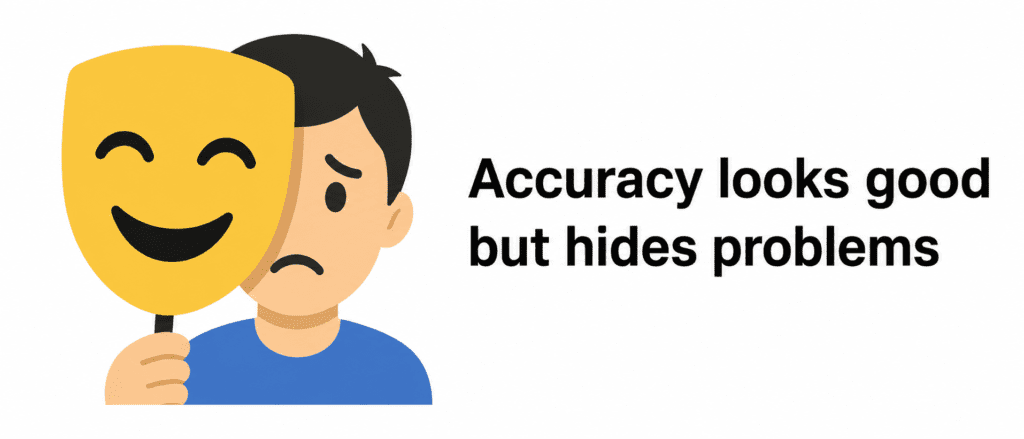

“You built a cancer detection model. It reports 99% accuracy. Sounds great. But what if only 1% of patients actually have cancer? Your model could predict ‘no cancer’ for everyone and still be 99% accurate. And it would kill people. Accuracy lied. You need better metrics.”

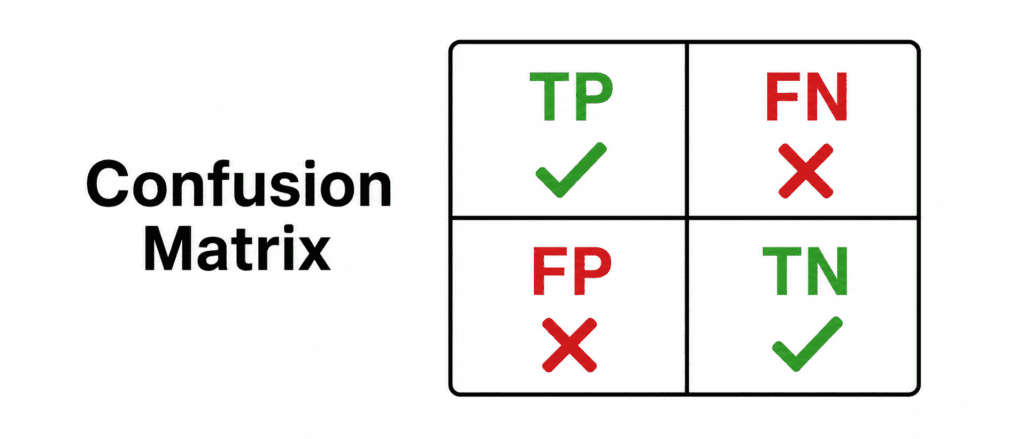

## The Confusion Matrix (The Foundation)

Before looking at any metric, understand this 2×2 table.

| | Predicted: YES | Predicted: NO |
|---|---|---|
| Actual: YES | True Positive (TP) ✅ | False Negative (FN) ❌ |
| Actual: NO | False Positive (FP) ❌ | True Negative (TN) ✅ |
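To make the four cells concrete, here is a minimal sketch that counts them by hand from two label lists. It assumes binary labels where 1 means YES and 0 means NO; the helper name `confusion_counts` and the six example labels are hypothetical.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FN, FP, TN), assuming binary labels where 1 = YES and 0 = NO."""
    tp = fn = fp = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if actual == 1 and predicted == 1:
            tp += 1  # said YES, was YES
        elif actual == 1 and predicted == 0:
            fn += 1  # said NO, was YES (a miss)
        elif actual == 0 and predicted == 1:
            fp += 1  # said YES, was NO (a false alarm)
        else:
            tn += 1  # said NO, was NO
    return tp, fn, fp, tn


# Hypothetical labels for six patients (1 = has cancer)
y_true = [1, 1, 1, 0, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0]
print(confusion_counts(y_true, y_pred))  # (2, 1, 1, 2)
```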

Simple meanings:

| Term | Meaning | Example (Cancer Detection) |
|---|---|---|
| True Positive | Correctly predicted YES | Correctly said “has cancer” |
| True Negative | Correctly predicted NO | Correctly said “no cancer” |
| False Positive | Wrongly predicted YES (Type I error) | Said “has cancer” but healthy |
| False Negative | Wrongly predicted NO (Type II error) | Said “no cancer” but has it |

## The Four Main Metrics

| Metric | What It Measures | Formula | Best For |
|---|---|---|---|
| Accuracy | Overall correctness | (TP+TN)/(Total) | Balanced classes |
| Precision | Trust positive predictions | TP/(TP+FP) | When false positives are costly |
| Recall | Catch all positives | TP/(TP+FN) | When false negatives are costly |
| F1 Score | Balance of precision and recall | 2×(P×R)/(P+R) | Imbalanced classes |

## 1. Accuracy (The Liar)

What it is: Proportion of correct predictions (both YES and NO).

The Formula:

Accuracy = (TP + TN) / (TP + TN + FP + FN)

Example (Cancer Detection):

- 990 healthy, 10 sick

- Model predicts “healthy” for everyone

| | Predicted Healthy | Predicted Sick |
|---|---|---|
| Actually Healthy | 990 (TN) | 0 (FP) |
| Actually Sick | 10 (FN) | 0 (TP) |

Accuracy = (990 + 0) / 1000 = 99%

Problem: Model caught ZERO sick patients. But accuracy looks amazing.
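The trap is easy to reproduce. This sketch builds the 990/10 example above with plain Python lists (1 = sick, 0 = healthy, an encoding chosen here) and a model that always answers “healthy”.

```python
# 990 healthy (0) and 10 sick (1) patients
y_true = [0] * 990 + [1] * 10
# The "model" answers healthy for everyone
y_pred = [0] * 1000

# Accuracy: share of predictions that match the true label
correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)

# How many sick patients did the model actually flag?
caught = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
sick = sum(y_true)

print(f"Accuracy: {accuracy:.2%}")               # Accuracy: 99.00%
print(f"Sick patients caught: {caught} of {sick}")  # Sick patients caught: 0 of 10
```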

When to use Accuracy:

- Classes are roughly balanced (e.g., 50% YES, 50% NO)

- False positives and false negatives have the same cost

When NOT to use Accuracy:

- Imbalanced classes (fraud, disease, rare events)

- Different costs for different errors

## 2. Precision (When You Say YES, Are You Right?)

What it is: Of all the times you predicted YES, how many were actually YES?

The Formula:

Precision = TP / (TP + FP)

The Question: “When my model says something is positive, can I trust it?”

Example (Cancer Detection):

Model makes 100 positive predictions. 90 are correct. 10 are wrong.

Precision = 90 / 100 = 90%

Interpretation: “When my model says you have cancer, it is correct 90% of the time.”
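In code, precision is a single division. The sketch below is illustrative; returning 0.0 when the model never predicts YES is a convention assumed here, since the formula is undefined (0/0) in that case.

```python
def precision(tp: int, fp: int) -> float:
    """Precision = TP / (TP + FP); 0.0 if there were no YES predictions (assumed convention)."""
    predicted_yes = tp + fp
    return tp / predicted_yes if predicted_yes else 0.0


# 100 positive predictions: 90 correct (TP), 10 wrong (FP)
print(precision(tp=90, fp=10))  # 0.9
```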

When to care about Precision:

| Scenario | Why Precision Matters |
|---|---|
| Spam detection | Marking good email as spam angers users |
| Recommended videos | Showing bad recommendations loses trust |
| Hiring tool (flags candidates to reject) | Rejecting good candidates hurts the company |
| Fraud alert | False alarms annoy customers |

High Precision = Few false positives.

## 3. Recall (Did You Catch All the YES Cases?)

What it is: Of all the actual YES cases, how many did you catch?

The Formula:

Recall = TP / (TP + FN)

The Question: “Did my model miss any real positives?”

Example (Cancer Detection):

There are 100 sick patients. Model catches 80. Misses 20.

Recall = 80 / 100 = 80%

Interpretation: “My model catches 80% of all cancer cases.”
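Recall follows the same pattern, with false negatives in the denominator instead of false positives. As with precision, returning 0.0 when there are no actual positives is an assumption of this sketch.

```python
def recall(tp: int, fn: int) -> float:
    """Recall = TP / (TP + FN); 0.0 if there are no actual positives (assumed convention)."""
    actual_yes = tp + fn
    return tp / actual_yes if actual_yes else 0.0


# 100 sick patients: 80 caught (TP), 20 missed (FN)
print(recall(tp=80, fn=20))  # 0.8
```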

When to care about Recall:

| Scenario | Why Recall Matters |
|---|---|
| Cancer detection | Missing cancer can kill people |
| Airport security | Missing a weapon is a disaster |
| Fraud detection | Missing fraud loses money |
| Self-driving car | Missing a pedestrian can cause an accident |

High Recall = Few false negatives.

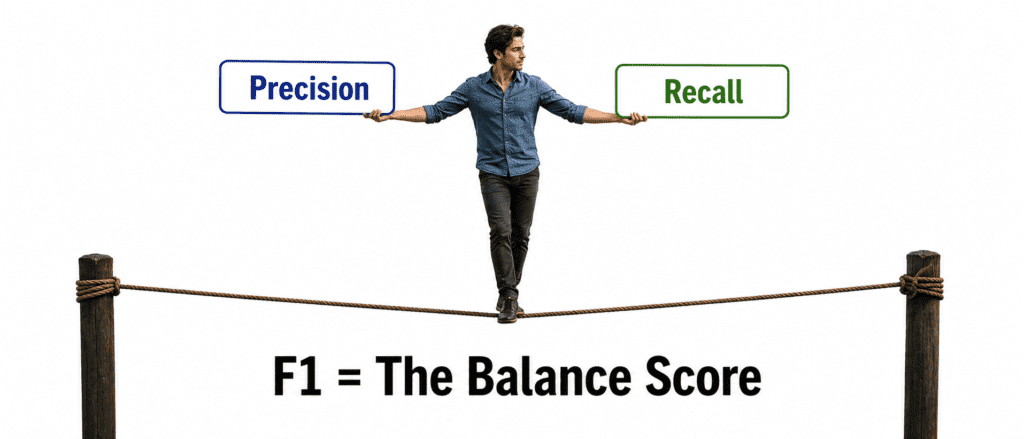

## 4. F1 Score (The Balance Keeper)

What it is: Harmonic mean of precision and recall. Balances both.

The Formula:

F1 = 2 × (Precision × Recall) / (Precision + Recall)

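Why the harmonic mean? A regular (arithmetic) average can hide a weak score. The harmonic mean is pulled toward the smaller of the two values, so it punishes large gaps between precision and recall.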

Example:

| Model | Precision | Recall | Regular Average | F1 Score |
|---|---|---|---|---|
| High Precision, Low Recall | 99% | 50% | 74.5% | 66% |
| Balanced | 80% | 80% | 80% | 80% |

The regular average gives the unbalanced model 74.5%, which hides its weak 50% recall. F1 pulls the score down to 66%, closer to the weaker metric.
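The numbers in the table can be checked directly. This sketch computes both averages for the two rows; the helper `f1` is a hypothetical name and returns 0.0 when precision and recall are both zero.

```python
def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


rows = [("High precision, low recall", 0.99, 0.50),
        ("Balanced", 0.80, 0.80)]

for name, p, r in rows:
    arithmetic = (p + r) / 2  # the "regular average"
    print(f"{name}: average = {arithmetic:.1%}, F1 = {f1(p, r):.1%}")
# High precision, low recall: average = 74.5%, F1 = 66.4%
# Balanced: average = 80.0%, F1 = 80.0%
```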

Interpretation: “My model balances catching positives and being correct when it does.”

When to use F1 Score:

- Imbalanced datasets (prefer F1 over accuracy)

- When you care about both precision and recall

- When you need one number to compare models

## The Precision-Recall Trade-off

For a given model, you usually cannot push precision and recall up at the same time: raising one tends to lower the other. Choose the balance based on your problem.

| Change | Precision | Recall |
|---|---|---|
| Raise the decision threshold for YES | ↑ Usually increases | ↓ Decreases or stays the same |
| Lower the decision threshold for YES | ↓ Usually decreases | ↑ Increases or stays the same |
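The threshold effect can be seen on a toy example. The sketch assumes a model that outputs a score between 0 and 1 and predicts YES when the score is at or above a threshold; the ten scores and labels are made up for illustration.

```python
# Hypothetical scores (model's confidence in YES) and true labels for 10 cases
y_true = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0]
scores = [0.95, 0.85, 0.75, 0.55, 0.65, 0.35, 0.45, 0.25, 0.15, 0.05]


def precision_recall_at(threshold):
    """Predict YES when score >= threshold, then compute precision and recall."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


for threshold in (0.3, 0.5, 0.7):
    p, r = precision_recall_at(threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
# threshold=0.3: precision=0.71, recall=1.00
# threshold=0.5: precision=0.80, recall=0.80
# threshold=0.7: precision=1.00, recall=0.60
```

scikit-learn's `precision_recall_curve` performs the same kind of sweep across every distinct score.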

Example: Cancer Detection (illustrative numbers)

| Strategy | Precision | Recall | Result |
|---|---|---|---|
| Aggressive (flag many patients as sick) | Low (50%) | High (95%) | Many false alarms, but catches most cancer cases |
| Conservative (flag only sure cases) | High (99%) | Low (60%) | Few false alarms, but misses many cancer cases |

Which is better? It depends on the cost of each kind of error.

- Missing cancer (low recall) = patient may die → high recall needed

- False alarm (low precision) = patient is stressed but stays alive → lower priority
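One way to make “it depends on cost” concrete is to put a price on each error type and compare the total. The sketch uses the precision and recall from the table, but the patient count and the costs are hypothetical assumptions; with different costs, the answer can flip.

```python
# ASSUMPTIONS (hypothetical, for illustration only):
# 100 truly sick patients, a missed cancer (FN) costs 100 units, a false alarm (FP) costs 1 unit.
SICK = 100
COST_FN = 100
COST_FP = 1


def total_cost(precision, recall):
    tp = recall * SICK                     # sick patients caught
    fn = SICK - tp                         # sick patients missed
    fp = tp * (1 - precision) / precision  # rearranged from precision = TP / (TP + FP)
    return fn * COST_FN + fp * COST_FP


strategies = {"Aggressive": (0.50, 0.95), "Conservative": (0.99, 0.60)}
for name, (p, r) in strategies.items():
    print(f"{name}: cost = {total_cost(p, r):.0f}")
# Aggressive: cost = 595
# Conservative: cost = 4001
```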

## The Trade-off Summary Table

| Metric | What It Rewards | What It Ignores |
|---|---|---|
| Accuracy | Getting both YES and NO right | Class imbalance |
| Precision | Being right when you say YES | Missing actual YES cases |
| Recall | Catching all YES cases | False alarms (wrong YES predictions) |
| F1 | Balance of precision and recall | True negatives (TN is not in the formula) |
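A property of F1 worth checking: it can be rewritten as 2TP / (2TP + FP + FN), so true negatives never enter it. This sketch holds TP, FN, and FP fixed at hypothetical values and varies only TN; the helper names are illustrative.

```python
def accuracy_from_counts(tp, fn, fp, tn):
    return (tp + tn) / (tp + fn + fp + tn)


def f1_from_counts(tp, fn, fp):
    # Equivalent to 2PR / (P + R); TN does not appear anywhere
    return 2 * tp / (2 * tp + fp + fn)


# Hypothetical counts: TP=6, FN=4, FP=0; only TN changes
for tn in (10, 10_000):
    acc = accuracy_from_counts(6, 4, 0, tn)
    print(f"TN={tn}: accuracy={acc:.4f}, F1={f1_from_counts(6, 4, 0):.4f}")
# TN=10: accuracy=0.8000, F1=0.7500
# TN=10000: accuracy=0.9996, F1=0.7500
```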

Computing all four metrics with scikit-learn on a small spam-detection example:

```python
from sklearn.metrics import accuracy_score, precision_score, recall_score, f1_score, confusion_matrix

# Actual and predicted labels (1 = spam, 0 = not spam)
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0,  # 10 not spam (0)
          1, 1, 1, 1, 1, 1, 1, 1, 1, 1]  # 10 spam (1)

y_pred = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0,  # all 10 non-spam predicted correctly
          1, 1, 1, 1, 1, 1, 0, 0, 0, 0]  # 6 spam caught, 4 missed

# Calculate metrics (the positive class is 1 by default)
accuracy = accuracy_score(y_true, y_pred)
precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)

# Rows are actual classes, columns are predicted classes, both ordered [0, 1]
cm = confusion_matrix(y_true, y_pred)

print(f"Accuracy: {accuracy:.2f}")
print(f"Precision: {precision:.2f}")
print(f"Recall: {recall:.2f}")
print(f"F1 Score: {f1:.2f}")

print("\nConfusion Matrix:")
print(f"TN: {cm[0, 0]}, FP: {cm[0, 1]}")
print(f"FN: {cm[1, 0]}, TP: {cm[1, 1]}")
```

Output:

```
Accuracy: 0.80
Precision: 1.00
Recall: 0.60
F1 Score: 0.75

Confusion Matrix:
TN: 10, FP: 0
FN: 4, TP: 6
```

## Quick Quiz

Q1: Your model has 99% accuracy but 1% recall. What is happening?

A1: Severe class imbalance. The model almost always predicts the majority class (NO), so it catches almost no positives.
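The Q1 situation can be reproduced with hypothetical counts: 10,000 cases, 100 of them positive, and a model that catches only one.

```python
# Hypothetical counts: 100 positives among 10,000 cases
tp, fn = 1, 99     # the model catches just 1 of the 100 positives
fp, tn = 0, 9_900  # and says NO correctly for every negative

accuracy = (tp + tn) / (tp + tn + fp + fn)
recall = tp / (tp + fn)
print(f"Accuracy: {accuracy:.2%}, Recall: {recall:.0%}")  # Accuracy: 99.01%, Recall: 1%
```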

Q2: You are building a fraud detection system. False alarms annoy customers. Missing fraud loses money. Which is more costly?

A2: It depends on the business. Missing fraud (low recall) usually costs more, but false alarms matter too. F1 balances both.

Q3: Your precision is 50%, recall is 100%. What does this mean?

A3: You catch every positive (recall = 100%), but half of your positive predictions are wrong (precision = 50%). The model is over-predicting YES.
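A quick check of Q3 with hypothetical counts: 10 actual positives, all caught, plus 10 false alarms.

```python
# Hypothetical counts: the model says YES 20 times; 10 are real positives
tp, fp, fn = 10, 10, 0

precision = tp / (tp + fp)  # 10 / 20
recall = tp / (tp + fn)     # 10 / 10
f1 = 2 * precision * recall / (precision + recall)
print(f"Precision: {precision:.0%}, Recall: {recall:.0%}, F1: {f1:.2f}")
# Precision: 50%, Recall: 100%, F1: 0.67
```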

Q4: Precision = 90%, Recall = 90%. What is F1?

A4: F1 = 90% (same as both). When precision = recall, F1 equals that value.

Q5: Why not use accuracy for cancer detection?

A5: Cancer is rare (imbalanced classes). A model that predicts “no cancer” for everyone gets high accuracy but misses every patient who has cancer.