“Your model says ‘SPAM’. But was it right? Your model says ‘NOT SPAM’. Was it correct? You need a scorecard. Not just one number. A full report of where your model wins and where it fails. That report is the Confusion Matrix.”

What Is a Confusion Matrix? #

A confusion matrix is a 2×2 table (for a yes/no model) that shows exactly what your model got right and wrong.

The Table:

| | Predicted: YES | Predicted: NO |
|---|---|---|
| Actual: YES | TP (Hit) | FN (Miss) |
| Actual: NO | FP (False Alarm) | TN (Correct Rejection) |

Four numbers. That is it.

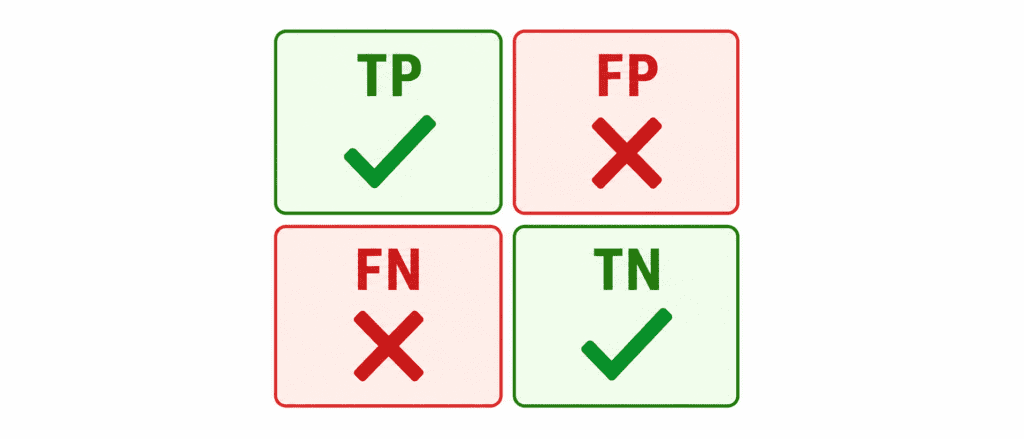

The Four Numbers (Learn These) #

| Name | Plain English | Another Name |
|---|---|---|
| TP (True Positive) | Said YES, was YES | Hit |
| TN (True Negative) | Said NO, was NO | Correct Rejection |
| FP (False Positive) | Said YES, was NO | Type I Error, False Alarm |
| FN (False Negative) | Said NO, was YES | Type II Error, Miss |

Memory Trick:

- “True” = Model was correct

- “False” = Model was wrong

- “Positive” = Model said YES

- “Negative” = Model said NO

So “False Positive” = Model was wrong when it said YES.
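The memory trick translates directly into code. Below is a minimal sketch in plain Python that sorts each prediction into one of the four buckets. The function name `count_outcomes`, the `positive` parameter, and the sample labels are made up for illustration.

```python
def count_outcomes(y_true, y_pred, positive=1):
    """Count TP, TN, FP, FN for a yes/no problem (hypothetical helper)."""
    tp = tn = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:      # model said YES ("Positive")
            if actual == positive:
                tp += 1                # said YES, was YES
            else:
                fp += 1                # said YES, was NO
        else:                          # model said NO ("Negative")
            if actual == positive:
                fn += 1                # said NO, was YES
            else:
                tn += 1                # said NO, was NO
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}


# Made-up labels for illustration: 1 = YES, 0 = NO
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

counts = count_outcomes(y_true, y_pred)
print(counts)  # {'TP': 3, 'TN': 3, 'FP': 1, 'FN': 1}
```

The four counts always add up to the number of predictions, which is a quick sanity check on any matrix you read.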

Real Example: Cancer Test #

1000 patients tested. 100 actually have cancer. 900 are healthy.

Model Results:

| | Predicted Cancer | Predicted Healthy |
|---|---|---|
| Actually Cancer | 80 (TP) | 20 (FN) |
| Actually Healthy | 30 (FP) | 870 (TN) |

Read the matrix:

- TP = 80 → Correctly caught 80 cancer patients

- FN = 20 → Missed 20 cancer patients (dangerous!)

- FP = 30 → Told 30 healthy people they have cancer (stressful)

- TN = 870 → Correctly told 870 healthy people they are fine

One Real Example #

Cancer test. 100 sick people. 900 healthy.

Model results:

- Found 80 sick correctly (TP)

- Missed 20 sick (FN)

- Told 30 healthy they have cancer (FP)

- Told 870 healthy they are fine (TN)

That is it. The whole matrix.

Why Bother? #

A single number like accuracy hides the truth.

Accuracy says 95% (870 + 80 = 950 correct out of 1000).

But you missed 20 of the 100 sick people. That could kill someone.

The confusion matrix shows you the problem.
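To see how much accuracy can hide, here is a small sketch using the counts from the cancer example. It compares the model against a hypothetical baseline that tells every patient they are healthy. The baseline is an assumption added for illustration, not a model from this article.

```python
# Counts from the cancer example
tp, fn, fp, tn = 80, 20, 30, 870
total = tp + fn + fp + tn

accuracy = (tp + tn) / total
print(f"Model accuracy: {accuracy:.0%}")              # 95%
print(f"Sick patients missed: {fn}")                  # 20

# Hypothetical baseline: always predict "healthy"
base_tp, base_fn, base_fp, base_tn = 0, 100, 0, 900
base_accuracy = (base_tp + base_tn) / total
print(f"Always-healthy accuracy: {base_accuracy:.0%}")  # 90%
print(f"Sick patients missed: {base_fn}")               # 100
```

A baseline that catches nobody still scores 90% accuracy here, because 900 of the 1000 patients are healthy. Only the FN cell exposes the difference.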

Every Metric Comes From Here #

| You Want | Look At |
|---|---|
| Correct predictions | TP + TN |
| False alarms | FP |
| Missed catches | FN |
| Trust when model says YES | TP / (TP + FP) → Precision |
| How many caught | TP / (TP + FN) → Recall |
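The precision and recall formulas can be checked against the cancer example. This is a minimal sketch. The helper names are made up, and returning 0.0 when the denominator is zero is one common convention assumed here, not something this article specifies.

```python
def precision(tp, fp):
    """Of everything the model flagged as YES, how much was really YES?"""
    return tp / (tp + fp) if (tp + fp) else 0.0  # 0.0 on empty denominator is an assumption


def recall(tp, fn):
    """Of everything that was really YES, how much did the model catch?"""
    return tp / (tp + fn) if (tp + fn) else 0.0  # 0.0 on empty denominator is an assumption


# Counts from the cancer example
tp, fn, fp, tn = 80, 20, 30, 870

p = precision(tp, fp)  # 80 / 110
r = recall(tp, fn)     # 80 / 100

print(f"Precision: {p:.3f}")  # 0.727
print(f"Recall:    {r:.3f}")  # 0.800
```

So when this model says "cancer", it is right about 73% of the time, and it catches 80% of the patients who are actually sick.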

One Line of Code #

    from sklearn.metrics import confusion_matrix

    # y_true: actual labels, y_pred: the model's predictions
    print(confusion_matrix(y_true, y_pred))
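One thing to watch: with 0/1 labels, scikit-learn sorts the labels, so its output puts the negative class first. The layout is `[[TN, FP], [FN, TP]]`, not the YES-first layout used in the tables in this article. The sketch below rebuilds the cancer example as label lists (the encoding 1 = cancer, 0 = healthy is an assumption) and shows both layouts.

```python
from sklearn.metrics import confusion_matrix

# Rebuild the 1000 patients from the cancer example: 1 = cancer, 0 = healthy
y_true = [1] * 100 + [0] * 900
y_pred = [1] * 80 + [0] * 20 + [1] * 30 + [0] * 870

# Default: labels are sorted (0, then 1), so rows/columns start with NO
cm = confusion_matrix(y_true, y_pred)
print(cm)
# [[870  30]
#  [ 20  80]]

# Unpack in scikit-learn's order for the binary case
tn, fp, fn, tp = cm.ravel()

# Pass labels=[1, 0] to put the positive class first, as in the article's tables
cm_yes_first = confusion_matrix(y_true, y_pred, labels=[1, 0])
print(cm_yes_first)
# [[ 80  20]
#  [ 30 870]]
```

Rows are actual classes and columns are predicted classes in both cases. Only the order of the classes changes.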

Key Takeaways (3 Lines) #

- Confusion matrix shows exactly what your model got right and wrong.

- Two kinds of error matter: false alarms (FP) and missed catches (FN).

- Always check the matrix before trusting accuracy.