Feature Selection in Machine Learning

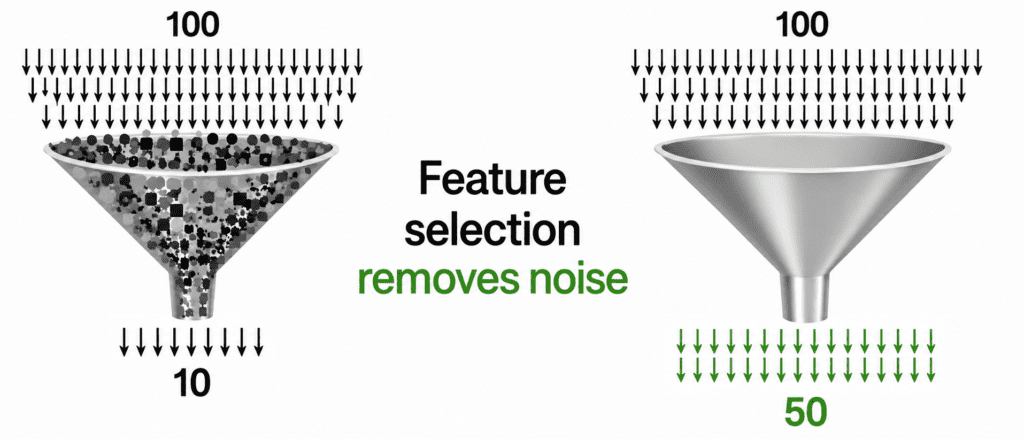

“More features do not mean a better model. Extra features add noise. They slow down training. They can cause overfitting. Feature selection is about keeping what matters and throwing away the rest.”

Why Feature Selection?

| Problem | Without Selection | With Selection |
|---|---|---|
| Training time | Slow | Fast |
| Overfitting | High risk | Low risk |
| Model complexity | High | Low |
| Interpretability | Hard | Easy |

Simple truth: A model with 10 good features often beats a model with 100 average features.

The Three Main Methods

| Method | How it works | Speed | Accuracy |
|---|---|---|---|
| Filter | Statistical tests before training | Very Fast | Moderate |
| Wrapper | Train model repeatedly to test features | Slow | High |
| Embedded | Model selects features during training | Medium | High |

Method 1: Filter Methods (Fastest)

How it works: You rank features by a statistical score and keep the top k. No model training is involved.

Common filter techniques:

| Technique | What it measures | Best for |
|---|---|---|
| Correlation | Linear relationship with target | Regression |
| ANOVA F-test | Difference in a feature's mean across classes | Classification |
| Chi-Square | Dependence between a non-negative feature (counts, one-hot) and the class label | Classification |
| Mutual Information | Any relationship (linear or not) | Both |
| Variance Threshold | How much a feature varies; drops constant or near-constant features | Both |

```python
from sklearn.feature_selection import (
    SelectKBest, VarianceThreshold, f_classif, mutual_info_classif
)

# Keep top 10 features based on ANOVA F-test
selector = SelectKBest(f_classif, k=10)
X_selected = selector.fit_transform(X, y)

# Keep features with variance above threshold
selector = VarianceThreshold(threshold=0.01)
X_selected = selector.fit_transform(X)
```
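The table lists correlation as the filter for regression targets, which the snippet above does not cover. Here is a minimal sketch of correlation-based ranking. The synthetic data from `make_regression`, the sizes, and `k = 5` are illustrative assumptions, not part of the original example.

```python
import numpy as np
from sklearn.datasets import make_regression

# Synthetic regression data: 20 features, only 5 carry signal (illustrative).
X, y = make_regression(n_samples=500, n_features=20, n_informative=5,
                       noise=10.0, random_state=0)

# Pearson correlation of every column with the target.
Xc = X - X.mean(axis=0)
yc = y - y.mean()
corr = (Xc * yc[:, None]).sum(axis=0) / (
    np.sqrt((Xc ** 2).sum(axis=0)) * np.sqrt((yc ** 2).sum())
)

# Rank by absolute correlation and keep the top k columns.
k = 5
top_idx = np.argsort(np.abs(corr))[::-1][:k]
X_selected = X[:, top_idx]
print(sorted(top_idx.tolist()), X_selected.shape)
```

Ranking by absolute value matters here: a strong negative correlation is just as useful to a model as a strong positive one.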

Pros: Very fast. Works as a preprocessing step.

Cons: Ignores feature interactions. A feature might be useless alone but powerful when combined with others.
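The interaction problem is easy to reproduce on toy data. In this sketch, which assumes a made-up XOR label rather than anything from the original, each feature scores near zero on its own, yet the pair determines the label completely.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_classif

rng = np.random.default_rng(0)

# Two random binary features; the label is their XOR (illustrative toy data).
X = rng.integers(0, 2, size=(2000, 2))
y = X[:, 0] ^ X[:, 1]

# Score each feature on its own, as a filter method would.
mi_single = mutual_info_classif(X, y, discrete_features=True, random_state=0)

# Score the pair jointly by encoding both bits as one 4-valued feature.
pair = (2 * X[:, 0] + X[:, 1]).reshape(-1, 1)
mi_pair = mutual_info_classif(pair, y, discrete_features=True, random_state=0)

print("single-feature MI:", mi_single)  # both close to 0
print("joint MI:", mi_pair)             # close to ln(2), about 0.69
```

A filter that keeps the top-scoring single features would likely discard both columns here, even though a model given both could predict the label exactly.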

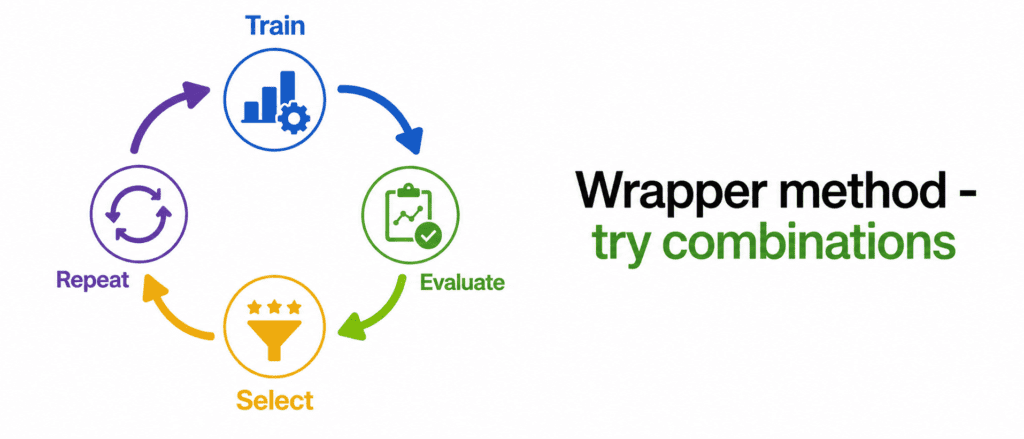

Method 2: Wrapper Methods (Most Accurate)

How it works: You train a model multiple times with different feature combinations and keep the combination that gives the best score.

Common wrapper techniques:

| Technique | How it works | Model fits (n features) |
|---|---|---|
| Forward Selection | Start empty, add the best feature one by one | O(n²) |
| Backward Elimination | Start with all, remove the worst feature one by one | O(n²) |
| Recursive Elimination (RFE) | Train model, remove weakest features, repeat | O(n) when one feature is removed per round |

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE

# Recursive Feature Elimination
estimator = RandomForestClassifier()
selector = RFE(estimator, n_features_to_select=10)
selector.fit(X, y)

# Selected features (boolean mask over the original columns)
selected_mask = selector.support_
```
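Forward selection from the table can be sketched with scikit-learn's `SequentialFeatureSelector`. The synthetic dataset, the `LogisticRegression` estimator, and the choice of 4 features are assumptions made to keep the example quick to run.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression

# Small synthetic problem so the repeated fits stay cheap (illustrative).
X, y = make_classification(n_samples=300, n_features=12, n_informative=4,
                           n_redundant=0, random_state=0)

# Forward selection: start empty, add the feature that most improves the
# cross-validated score, and stop once 4 features are selected.
sfs = SequentialFeatureSelector(
    LogisticRegression(max_iter=1000),
    n_features_to_select=4,
    direction="forward",
    cv=3,
)
sfs.fit(X, y)

selected_mask = sfs.get_support()  # boolean mask, like RFE's support_
X_selected = sfs.transform(X)
print(selected_mask.sum(), X_selected.shape)
```

Setting `direction="backward"` gives backward elimination with the same interface.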

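Pros: Searches for a good combination instead of scoring features one at a time. Considers feature interactions.

Cons: Slow. Can overfit the selection to the training data unless each candidate set is scored with cross-validation.

Warning: Avoid running wrapper methods directly on 1000+ features. The repeated training becomes impractically slow.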
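Both drawbacks can be softened somewhat. The sketch below assumes scikit-learn's `RFECV`, which picks the number of features by cross-validation, and uses `step` to drop several features per round. The dataset and all parameter values are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression

# Illustrative synthetic data: 30 features, 5 of them informative.
X, y = make_classification(n_samples=300, n_features=30, n_informative=5,
                           n_redundant=0, random_state=0)

# step=5 drops five features per round, so far fewer refits than step=1.
# cv=5 scores each candidate feature count on held-out folds.
selector = RFECV(
    LogisticRegression(max_iter=1000),
    step=5,
    cv=5,
    min_features_to_select=5,
)
selector.fit(X, y)

print(selector.n_features_, selector.support_.sum())
```

A larger `step` trades some precision in the ranking for speed, which is usually acceptable when many features are clearly uninformative.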

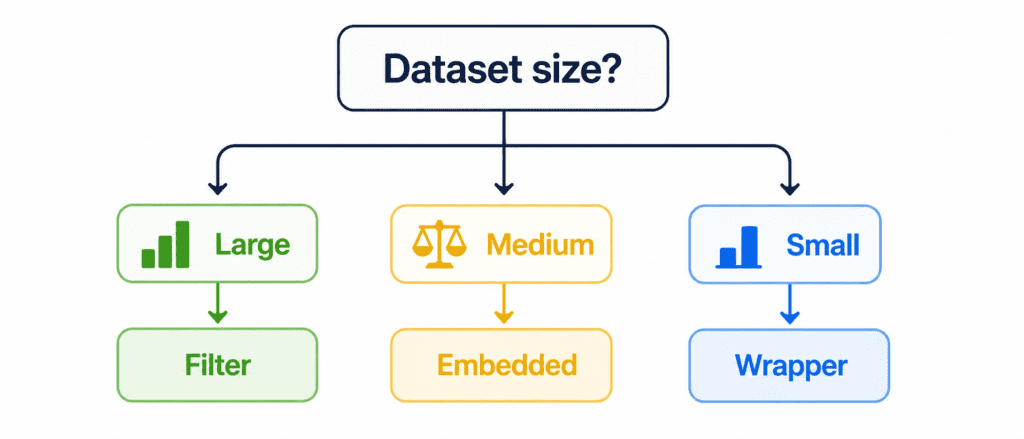

Which Method Should You Choose?

| Your Situation | Best Method | Why |
|---|---|---|
| Very large dataset (10k+ features) | Filter | Fastest, good enough |
| Small dataset (< 500 features) | Wrapper (RFE) | Most accurate |
| You want simplicity | Embedded (Random Forest importance) | Easy, works well |
| Target depends roughly linearly on the features | Embedded (Lasso) | L1 penalty forces some coefficients to zero, removing those features |
| You need a quick baseline | Filter (SelectKBest) | Fast, no model training |

Default recommendation: Start with Random Forest feature importance (Embedded). If there are still too many features, use SelectKBest (Filter) to reduce further.
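Embedded selection has no code of its own in this article, so here is a minimal sketch of both embedded options from the table. It assumes scikit-learn's `SelectFromModel` as the way to turn a fitted model into a selector. The synthetic datasets, `threshold="mean"`, and `alpha=1.0` are illustrative choices, and the toy data is not split for brevity.

```python
from sklearn.datasets import make_classification, make_regression
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

# --- Random Forest importance (classification) ---
X, y = make_classification(n_samples=500, n_features=40, n_informative=6,
                           n_redundant=0, random_state=0)
rf_selector = SelectFromModel(
    RandomForestClassifier(n_estimators=100, random_state=0),
    threshold="mean",  # keep features with above-average importance
)
X_rf = rf_selector.fit_transform(X, y)

# --- Lasso (regression): the L1 penalty zeroes out weak coefficients ---
X_reg, y_reg = make_regression(n_samples=500, n_features=40, n_informative=6,
                               noise=5.0, random_state=0)
X_reg = StandardScaler().fit_transform(X_reg)  # L1 is scale-sensitive
lasso_selector = SelectFromModel(Lasso(alpha=1.0))
X_lasso = lasso_selector.fit_transform(X_reg, y_reg)
n_zero = int((lasso_selector.estimator_.coef_ == 0).sum())

print(X_rf.shape[1], X_lasso.shape[1], n_zero)
```

In the Lasso case a larger `alpha` removes more features, so it plays the same role that `k` plays in `SelectKBest`.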

The Golden Rule

Fit feature selection on the training data only. Then apply the same fitted selector to the validation and test data.

Correct way:

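```python
# Fit the selector on the training split only
selector.fit(X_train, y_train)

# Reuse the fitted selector on every split
X_train_selected = selector.transform(X_train)
X_val_selected = selector.transform(X_val)
X_test_selected = selector.transform(X_test)
```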

Wrong way:

```python
# DO NOT DO THIS - data leakage
selector.fit(X_train, y_train)
selector.fit(X_val, y_val)  # WRONG! Refits the selector on validation data
```
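One way to make the rule hard to break is to put the selector and the model in a single scikit-learn `Pipeline`. This is a sketch under assumed synthetic data and an assumed `LogisticRegression` model, not the only valid setup.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import Pipeline

# Illustrative synthetic data and split.
X, y = make_classification(n_samples=400, n_features=50, n_informative=5,
                           n_redundant=0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

# Selector and model live in one pipeline, so every fit() call refits the
# selector on exactly the data the model is trained on.
pipe = Pipeline([
    ("select", SelectKBest(f_classif, k=10)),
    ("model", LogisticRegression(max_iter=1000)),
])

# During cross-validation the selector only ever sees the training folds.
cv_scores = cross_val_score(pipe, X_train, y_train, cv=5)

# Final fit on the training split; the test split is only transformed.
pipe.fit(X_train, y_train)
test_accuracy = pipe.score(X_test, y_test)
print(cv_scores.mean(), test_accuracy)
```

Selecting features on the full dataset before cross-validating would let every fold's held-out labels influence the selection, which tends to inflate the reported score.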

How Many Features to Keep?

No fixed answer. General guidelines:

| Total Features | Keep (roughly) |
|---|---|
| < 50 | Keep all, no selection needed |
| 50 – 500 | Keep top 50–100 |
| 500 – 5000 | Keep top 100–200 |
| 5000+ | Keep top 200–300 |

Better approach: Plot feature importance in descending order. Look for the “elbow”. Keep the features before importance drops sharply.
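The elbow can also be approximated in code. The sketch below uses a crude heuristic, the largest drop between neighbouring importances. That heuristic and the synthetic data are assumptions for illustration and are no substitute for looking at the plot.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Illustrative synthetic data: 30 features, 5 of them informative.
X, y = make_classification(n_samples=500, n_features=30, n_informative=5,
                           n_redundant=0, random_state=0)

rf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)

# Sort importances from largest to smallest.
order = np.argsort(rf.feature_importances_)[::-1]
sorted_imp = rf.feature_importances_[order]

# Crude elbow: the position of the largest drop between neighbours.
drops = sorted_imp[:-1] - sorted_imp[1:]
n_keep = int(np.argmax(drops)) + 1
keep_idx = order[:n_keep]

print(n_keep, keep_idx)
# To inspect the curve by eye instead (matplotlib assumed installed):
#   import matplotlib.pyplot as plt
#   plt.plot(sorted_imp, marker="o"); plt.show()
```

If one feature dominates, this heuristic may cut after the very first feature, so it is worth checking `n_keep` against the plotted curve before trusting it.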

Quick Example (Complete Picture)

Problem: 500 features. Want to reduce to 50.

Step 1 (Filter – Fast reduction):

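```python
from sklearn.feature_selection import SelectKBest, mutual_info_classif

# X, y: the training split only (see The Golden Rule)
selector = SelectKBest(mutual_info_classif, k=100)
X_selected = selector.fit_transform(X, y)
```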

Step 2 (Embedded – Refine further):

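```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rf = RandomForestClassifier()
rf.fit(X_selected, y)
importances = rf.feature_importances_

# Keep top 50 based on importance (X_selected is a NumPy array here)
top_idx = np.argsort(importances)[::-1][:50]
X_final = X_selected[:, top_idx]
```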

Result: 500 features → 100 → 50 good features.

Quick Quiz

Q1: You have 10,000 features and 5,000 samples. Which method should you use first?

A1: Filter method (like SelectKBest). It is fast and quickly reduces the features to a manageable number.

Q2: You want features that are important for a Random Forest model. Which method?

A2: Embedded method using Random Forest’s feature_importances_.

Q3: Which method actually sets some feature weights to zero?

A3: Lasso regression (L1 regularization) from Embedded methods.

Q4: You ran RFE and it took 2 days to complete. What went wrong?

A4: Too many features. Use a Filter method first to reduce them, then run RFE.

Key Takeaways (5 Lines)

- Filter = Statistical test before training. Fastest. Good for large datasets.

- Wrapper = Train model repeatedly. Most accurate. Use for small datasets.

- Embedded = Model selects during training. Best balance. Start here.

- Always fit the selector on training data only. Never on validation or test.

- Fewer good features > Many bad features. Feature selection helps reduce overfitting.