Feature Engineering for ML #

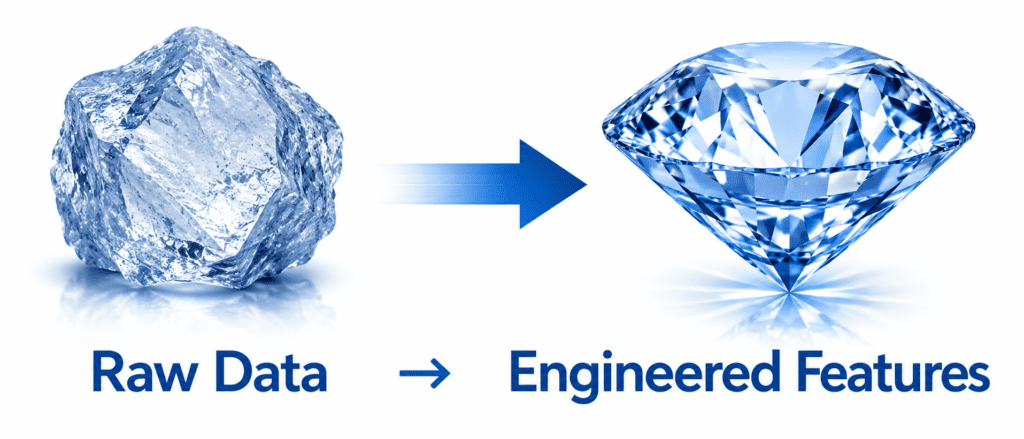

Features are the most important part of building high-performing models. Your model is only as good as the features you give it. A bad feature is like giving a detective the wrong clues. A good feature makes the answer obvious. Feature engineering is the art of creating those good clues from raw data.

What is Feature Engineering? #

Simple Definition: Creating new features from existing data that help your model predict better.

Raw data is rarely ready to use. You must transform it. You must combine it. You must extract hidden information from it.

Example: You have a “date” column. A raw date is nearly useless to a model. But from it you can create:

- Day of week (Monday=0, Tuesday=1, ..., Sunday=6)

- Is weekend? (0 or 1)

- Month of year

- Days since last holiday

- Quarter of year

These new features help your model find patterns.

Why Feature Engineering Matters #

Here is a rough guide (ballpark estimates, not measured results):

| Factor | Typical Impact on Model Performance |
|---|---|
| Algorithm choice | 10-20% |
| Hyperparameter tuning | 5-10% |
| Amount of data | 20-30% |
| Feature engineering | 40-60% |

Yes. In many projects, feature engineering matters more than which algorithm you pick. A simple model with great features often beats a complex model with bad features.

The Golden Rule #

“Know your data. Domain knowledge is the feature engineer's superpower.”

A doctor knows which medical measurements matter. A finance expert knows which ratios predict default. No algorithm can replace that knowledge.

Common Feature Engineering Techniques #

1. Handling Date and Time #

Raw date is almost useless to a model. Extract these instead:

| Raw Date | Engineered Features |
|---|---|
| 2024-03-15 | Year=2024, Month=3, Day=15, DayOfWeek=Friday, IsWeekend=False, Quarter=1, DaysSinceStartOfYear=74 |
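Letting pandas compute each feature for that one date is a quick way to check the row above. This sketch assumes Saturday and Sunday are the weekend and counts January 1 as day 0, so "days since start of year" is `dayofyear - 1`.

```python
import pandas as pd

# Compute the engineered features for the single date in the table
d = pd.Timestamp("2024-03-15")

features = {
    "Year": d.year,
    "Month": d.month,
    "Day": d.day,
    "DayOfWeek": d.day_name(),                # 'Friday'
    "IsWeekend": d.dayofweek >= 5,            # Saturday=5, Sunday=6
    "Quarter": d.quarter,
    "DaysSinceStartOfYear": d.dayofyear - 1,  # January 1 counts as 0
}
print(features)
```

Friday has `dayofweek == 4`, so it is not a weekend day under this definition.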

Example: Predicting store sales.

Bad feature: “Date” (the model cannot use a raw date directly)

Good feature: “IsWeekend” (sales are higher on weekends)

Good feature: “MonthsSinceLastHoliday” (sales drop after holidays)

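```python
import pandas as pd

# Example data; in practice df is your own DataFrame with a 'date' column
df = pd.DataFrame({'date': ['2024-03-15', '2024-03-16', '2024-12-02']})

df['date'] = pd.to_datetime(df['date'])
df['day_of_week'] = df['date'].dt.dayofweek  # Monday=0, Sunday=6
df['is_weekend'] = df['day_of_week'].isin([5, 6]).astype(int)  # Saturday or Sunday
df['month'] = df['date'].dt.month
df['quarter'] = df['date'].dt.quarter
df['year'] = df['date'].dt.year
```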
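The intro also lists "days since last holiday". pandas has no single built-in for this, so here is one possible sketch using `pd.merge_asof`, which matches each date to the most recent earlier key. The holiday dates and column names are made up for illustration; swap in your own calendar.

```python
import pandas as pd

# Hypothetical sales dates and holiday calendar, for illustration only
df = pd.DataFrame({"date": pd.to_datetime(["2024-01-03", "2024-07-10", "2024-12-31"])})
holidays = pd.DataFrame({"holiday": pd.to_datetime(["2024-01-01", "2024-07-04", "2024-12-25"])})

# merge_asof needs both frames sorted on their keys; direction="backward"
# picks the most recent holiday on or before each date
merged = pd.merge_asof(df.sort_values("date"),
                       holidays.sort_values("holiday"),
                       left_on="date", right_on="holiday",
                       direction="backward")

# A date before the first holiday would get NaT, giving NaN here
merged["days_since_holiday"] = (merged["date"] - merged["holiday"]).dt.days
print(merged[["date", "days_since_holiday"]])
```

The same matched `holiday` column can feed a "months since last holiday" feature if you prefer a coarser unit.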

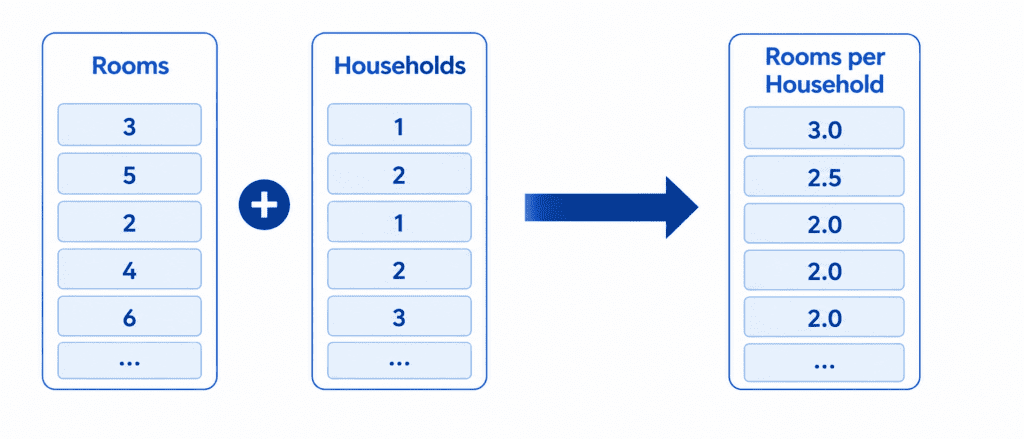

2. Creating Ratios and Combinations #

Two features together often tell a better story than each alone.

Example: Predicting house prices.

| Raw Features | Engineered Feature |
|---|---|
| Total Rooms, Households | Rooms per Household |
| Total Bedrooms, Total Rooms | Bedroom Ratio |
| Population, Households | People per Household |

Why it works: 10 rooms on its own says little. 10 rooms for 1 household is a mansion. 10 rooms for 10 households is a crowded apartment building. Same rooms. Different meaning.

```python
# Assumes df has total_rooms, total_bedrooms, population, and households columns
df['rooms_per_house'] = df['total_rooms'] / df['households']
df['bedroom_ratio'] = df['total_bedrooms'] / df['total_rooms']
df['people_per_house'] = df['population'] / df['households']
```
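To see the "same rooms, different meaning" point in numbers, here is a sketch with made-up rows that share `total_rooms` but differ in `households`. It also assumes you want a missing value rather than infinity when a denominator is 0; replacing zeros with `NaN` before dividing is one common way to get that.

```python
import numpy as np
import pandas as pd

# Made-up rows: same room count, different number of households
df = pd.DataFrame({
    "total_rooms": [10, 10, 10],
    "households": [1, 10, 0],  # the last row has no households
})

# Replace 0 with NaN so the division yields NaN instead of inf
safe_households = df["households"].replace(0, np.nan)
df["rooms_per_house"] = df["total_rooms"] / safe_households
print(df)
```

The first row gets 10 rooms per household (the mansion), the second gets 1 (the crowded building), and the third is left missing for the model or an imputer to handle.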

3. Binning (Grouping Similar Values) #

Instead of using exact numbers, group them into categories.

Example: Age.

Raw age: 25, 32, 18, 65, 70, 22

Binned age: Child (up to 18), Young (19-30), Middle (31-50), Senior (51+)

Why it works: The model sees group-level patterns more clearly. Buying habits often differ by age group, not by exact age.

df['age_group'] = pd.cut(df['age'],

bins=[0, 18, 30, 50, 100],

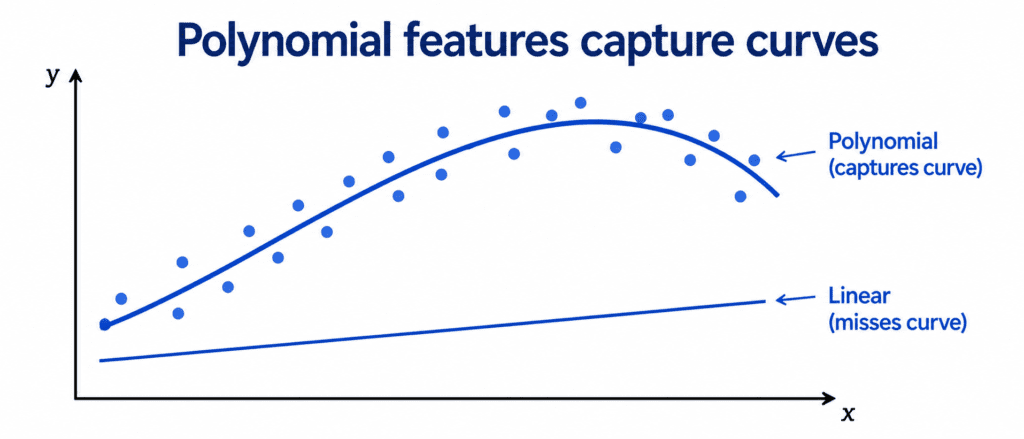

labels=['Child', 'Young', 'Middle', 'Senior'])4. Polynomial Features (Capturing Non-Linearity) #

Sometimes the relationship is curved, not straight. Polynomial features capture curves.

Example: House price vs house size.

A 1,000 sq ft house costs $100k. A 2,000 sq ft house costs $200k. A 3,000 sq ft house costs $450k (not $300k). The relationship is curved. Adding “size squared” helps capture this curve.

```python
import pandas as pd
from sklearn.preprocessing import PolynomialFeatures

X = pd.DataFrame({'size': [1000, 2000, 3000]})  # example data

poly = PolynomialFeatures(degree=2, include_bias=False)
X_poly = poly.fit_transform(X[['size']])  # Creates: size, size^2
```
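A small sketch of why "size squared" helps, using the three houses from the example (sizes 1,000, 2,000, 3,000 sq ft; prices $100k, $200k, $450k). It fits a straight line on `size` alone and a second model on `size` plus `size^2`, then compares R^2 on the same data. With only three points and three parameters the curved model fits exactly, which is also a preview of the overfitting risk in the warning that follows.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.preprocessing import PolynomialFeatures

# The three houses from the example: size in sq ft, price in $k
size = np.array([[1000], [2000], [3000]])
price = np.array([100, 200, 450])

# Straight line: price ~ size
linear = LinearRegression().fit(size, price)

# Curve: price ~ size + size^2
poly = PolynomialFeatures(degree=2, include_bias=False)
size_poly = poly.fit_transform(size)
curved = LinearRegression().fit(size_poly, price)

print("linear R^2:   ", linear.score(size, price))
print("quadratic R^2:", curved.score(size_poly, price))
```

The straight line cannot pass through all three points, while the quadratic can. On real data, judge the gain on a validation set, not on the training rows.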

Warning: High degrees, or many input columns, multiply the number of features quickly and can overfit. Keep the degree low and check validation scores.

Quick Quiz #

Q1: You have a “timestamp” column. What are three features you can extract?

A1: Hour of day, day of week, is weekend, month, quarter, year (any three).

Q2: You have “total_bathrooms” and “total_bedrooms”. What combination feature could you create?

A2: Bathroom to bedroom ratio. Or total_rooms = bathrooms + bedrooms.

Q3: You are predicting house price. Why is “neighborhood average price” a good feature?

A3: Because houses in expensive neighborhoods tend to be expensive themselves. The neighborhood matters.
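One way to build such a feature, sketched with hypothetical `neighborhood` and `price` columns, is a groupby that averages the *other* houses in the same neighborhood. Leaving each house's own price out (a leave-one-out mean) is a deliberate choice here: a plain neighborhood mean would include the very price the model is trying to predict. In a real project you would also compute it from training rows only.

```python
import pandas as pd

# Hypothetical data: neighborhood label and sale price in $k
df = pd.DataFrame({
    "neighborhood": ["A", "A", "A", "B", "B"],
    "price": [300, 320, 340, 150, 170],
})

# Per-row group sum and count, aligned back to the original rows
grp = df.groupby("neighborhood")["price"]
total = grp.transform("sum")
count = grp.transform("count")

# Leave-one-out mean: average price of the other houses in the neighborhood
# (a neighborhood with only one house would produce NaN here)
df["nbhd_avg_price"] = (total - df["price"]) / (count - 1)
print(df)
```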

Q4: You add polynomial features and validation score drops. What happened?

A4: Overfitting. Polynomial features added too much complexity. Reduce degree or add regularization.
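The two fixes in A4 can be compared with cross-validation. This is a sketch on synthetic data (a noisy quadratic generated on the spot), so the exact scores mean nothing beyond this toy setup; what to look for is whether the degree-10 model's validation score improves when you lower the degree or add `Ridge` regularization. Features are scaled before `Ridge` because its penalty treats all coefficients alike.

```python
import numpy as np
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Synthetic data: a gentle curve plus noise (illustration only)
rng = np.random.default_rng(0)
X = rng.uniform(0, 3, size=(60, 1))
y = X[:, 0] ** 2 + rng.normal(scale=0.5, size=60)

models = {
    "degree 10, no regularization": make_pipeline(
        PolynomialFeatures(degree=10, include_bias=False), StandardScaler(), LinearRegression()),
    "degree 10, Ridge(alpha=1.0)": make_pipeline(
        PolynomialFeatures(degree=10, include_bias=False), StandardScaler(), Ridge(alpha=1.0)),
    "degree 2, no regularization": make_pipeline(
        PolynomialFeatures(degree=2, include_bias=False), StandardScaler(), LinearRegression()),
}

# Mean 5-fold validation R^2 for each variant
results = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=5, scoring="r2")
    results[name] = scores.mean()
    print(f"{name}: mean CV R^2 = {results[name]:.3f}")
```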