Handling Imbalanced Datasets in Machine Learning #

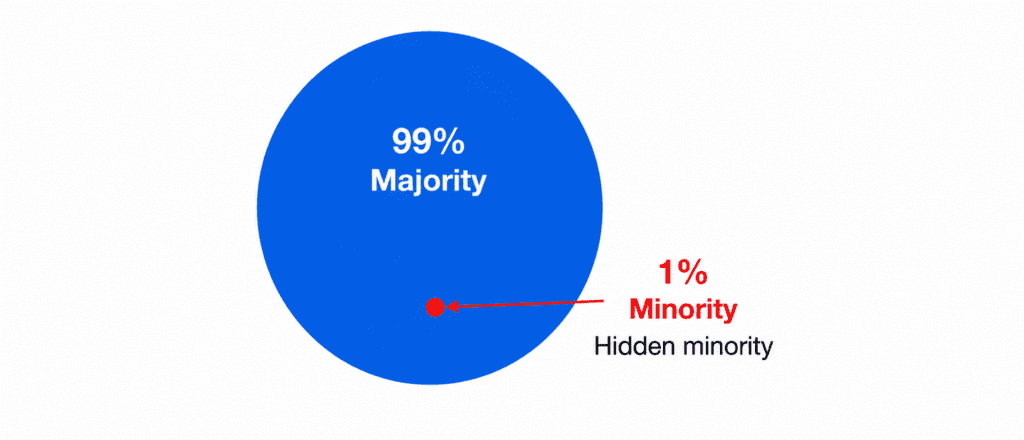

Class imbalance is one of the most common problems in classification tasks. You build a fraud detection model. It reports 99% accuracy. You celebrate. Then you realize: only 1% of transactions are fraud. Your model just predicted “Not Fraud” for everything. It learned nothing. This is the hidden trap of imbalanced datasets.

What is an Imbalanced Dataset? #

Simply put: one class has way more examples than the other.

Real life examples:

- Fraud detection: 99% normal, 1% fraud

- Disease detection: 98% healthy, 2% sick

- Rare event prediction: 99.9% no event, 0.1% event

The problem: Your model becomes lazy. It learns to predict the majority class every time. Accuracy looks great. But the model is useless.
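Before picking a fix, it helps to measure how skewed the labels actually are. Here is a minimal sketch using only the standard library. The label list `y` is made up to match the fraud example (0 = normal, 1 = fraud).

```python
from collections import Counter

# Hypothetical labels: 990 normal (0) and 10 fraud (1).
y = [0] * 990 + [1] * 10

counts = Counter(y)
total = len(y)

# Share of each class as a fraction of all examples.
shares = {label: n / total for label, n in counts.items()}

for label, share in sorted(shares.items()):
    print(f"class {label}: {counts[label]} examples ({share:.1%})")
```

If one class holds only a few percent of the rows, treat accuracy with suspicion from the start.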

Why Accuracy Lies to You #

Imagine 1000 transactions. 990 normal. 10 fraud.

A dumb model that always says “normal” gets 99% accuracy. But it catches ZERO fraud.

Better metrics for imbalanced data:

- Precision: Of the ones you called fraud, how many were actually fraud?

- Recall: Of all actual fraud, how many did you catch?

- F1 Score: The harmonic mean of precision and recall. It is high only when both are high

These tell the real story. Not accuracy.
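To see the gap in numbers, the sketch below scores the "always normal" model on the 1000-transaction example. It uses plain Python so the arithmetic stays visible. In practice, `sklearn.metrics` offers `precision_score`, `recall_score` and `f1_score` for the same job. Treating an undefined precision (no fraud predicted at all) as 0.0 is a convention chosen here.

```python
# 1000 transactions: 990 normal (0) and 10 fraud (1).
y_true = [0] * 990 + [1] * 10
# A model that always answers "normal".
y_pred = [0] * 1000

pairs = list(zip(y_true, y_pred))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # fraud caught
fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # normal flagged as fraud
fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # fraud missed
tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # normal left alone

accuracy = (tp + tn) / len(y_true)
# Guard against division by zero when nothing was predicted as fraud.
precision = tp / (tp + fp) if (tp + fp) else 0.0
recall = tp / (tp + fn) if (tp + fn) else 0.0
f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0

print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Accuracy comes out at 0.99 while recall and F1 are both 0.00: the model misses all 10 fraud cases.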

Three Ways to Fix Imbalanced Data #

| Method | One Line Explanation |
|---|---|
| class_weight | Tell model “pay more attention to rare class” |
| Undersampling | Throw away some majority examples |
| SMOTE | Create fake minority examples |

Method 1: class_weight (Easiest) #

You just add one line of code. The model automatically cares more about the minority class.

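How it thinks: Missing a fraud is far worse than wrongly flagging a normal transaction. With a 99:1 split, 'balanced' makes each fraud example count about 99 times as much as a normal one.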

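```python
from sklearn.ensemble import RandomForestClassifier

# 'balanced' weights each class inversely to its frequency in y
model = RandomForestClassifier(class_weight='balanced')
model.fit(X, y)
```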

When to use: Almost always. Start here. It is the easiest and often works well.

Downside: Does not create new data. It only changes how the model learns from the data it already has.
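To make the weighting concrete, the sketch below reproduces the formula scikit-learn documents for `class_weight='balanced'`, which is `n_samples / (n_classes * count_of_class)`. The label list is the hypothetical 990/10 fraud example, not real data.

```python
from collections import Counter

# Hypothetical labels: 990 normal (0) and 10 fraud (1).
y = [0] * 990 + [1] * 10

counts = Counter(y)
n_samples = len(y)
n_classes = len(counts)

# 'balanced' weight for each class: n_samples / (n_classes * class count).
weights = {label: n_samples / (n_classes * n) for label, n in counts.items()}

# How many times heavier one fraud example is than one normal example.
ratio = weights[1] / weights[0]

print(weights)
print(f"fraud counts about {ratio:.0f}x as much as normal")
```

Each fraud example gets a weight of 50.0 and each normal example about 0.505, so one missed fraud costs the model roughly as much as 99 misclassified normal transactions.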

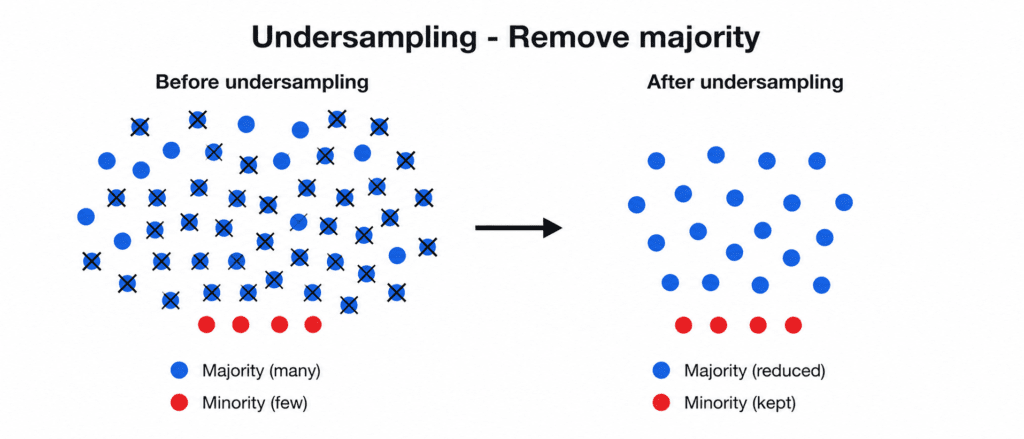

Method 2: Undersampling (Delete Data) #

You randomly delete majority examples until both classes are equal.

Example: 990 normal, 10 fraud → Delete 980 normal → Keep 10 normal, 10 fraud

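```python
import pandas as pd
from sklearn.utils import resample

# normal and fraud are DataFrames holding the rows of each class.
# Keep all fraud, and draw the same number of normal rows at random.
# replace=False samples without replacement, so no normal row is duplicated.
normal_under = resample(normal, replace=False, n_samples=len(fraud), random_state=42)
balanced = pd.concat([normal_under, fraud])
```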

When to use: When you have millions of examples. Deleting some is fine.

Downside: You lose data. Model sees less variety.

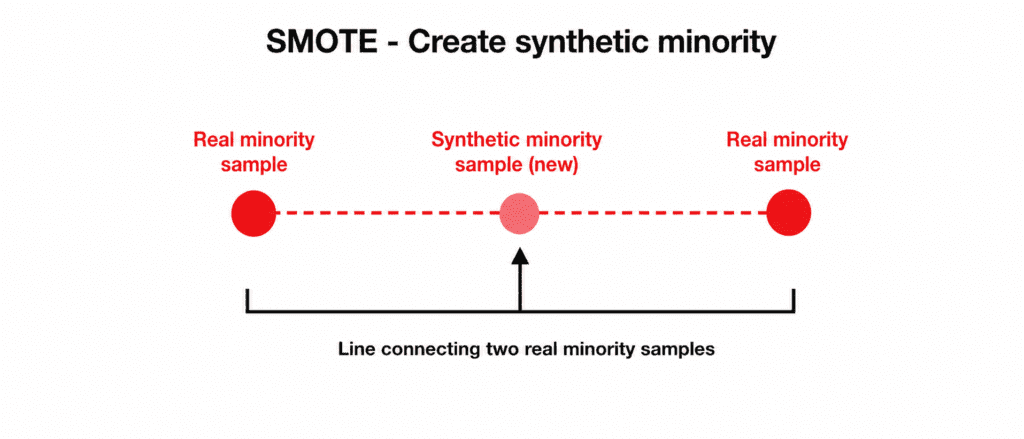

Method 3: SMOTE (Create Fake Data) #

You create brand new synthetic minority examples.

How it works: Take a real fraud example and one of its nearest fraud neighbors. Draw a line between them. Pick a random point on that line. That is your new fake fraud example.
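The interpolation step can be sketched in a few lines of plain Python. This is only the "random point on the line" part, not the full algorithm: real SMOTE also picks the second point from the nearest minority neighbors of the first (the `k_neighbors` setting in imbalanced-learn). The two feature vectors and the `interpolate` helper are made up for illustration.

```python
import random

def interpolate(a, b, rng):
    """Return a random point on the line segment between points a and b."""
    lam = rng.random()  # fraction of the way from a to b, in [0, 1)
    return [ai + lam * (bi - ai) for ai, bi in zip(a, b)]

rng = random.Random(42)

# Two hypothetical fraud examples with features (amount, hour of day).
fraud_a = [120.0, 2.0]
fraud_b = [200.0, 4.0]

synthetic = interpolate(fraud_a, fraud_b, rng)
print(synthetic)
```

Every feature of the synthetic point lies between the values of its two parents, which is why SMOTE never produces values outside the range the real minority examples already cover.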

Example: 10 fraud → Create 980 fake fraud → Now 990 fraud, 990 normal

```python
from imblearn.over_sampling import SMOTE

# Resample the training data only; the test set must keep its real class ratio
smote = SMOTE(random_state=42)
X_balanced, y_balanced = smote.fit_resample(X, y)
```

When to use: Small dataset. Cannot afford to lose data.

Downside: Can create unrealistic examples, especially when fraud and normal points overlap or the fraud class contains outliers.

Which One Should You Choose? #

| Your Situation | Best Method |
|---|---|
| You want quick and easy | class_weight |
| You have millions of examples | Undersampling |
| You have very little data | SMOTE |
| You are using XGBoost | scale_pos_weight (XGBoost's built-in equivalent of class_weight) |
| You are using Neural Network | SMOTE + class_weight |

Simple rule: Start with class_weight. If that fails, try SMOTE. Use undersampling only for huge datasets.
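For XGBoost, class weighting on a binary problem goes through the `scale_pos_weight` parameter, and a common starting value is the number of negative examples divided by the number of positive ones. The sketch below computes that ratio for the hypothetical 990/10 fraud labels. The XGBoost call itself is left as a comment so the snippet runs without the library installed.

```python
from collections import Counter

# Hypothetical labels: 990 normal (0) and 10 fraud (1).
y = [0] * 990 + [1] * 10

counts = Counter(y)

# Ratio of negative (normal) to positive (fraud) examples.
scale_pos_weight = counts[0] / counts[1]
print(scale_pos_weight)

# With xgboost installed, the value would be passed like this:
# from xgboost import XGBClassifier
# model = XGBClassifier(scale_pos_weight=scale_pos_weight)
```

Treat the ratio as a starting point and tune it against recall and precision on a validation set.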

Complete Picture

Problem: Detect fraud. 10,000 transactions. 100 fraud (1%).

Step 1: Train model with no balancing.

- Result: 99% accuracy. Catches 5% of fraud. ❌ Fails.

Step 2: Add class_weight='balanced'

- Result: Catches 60% of fraud. ✅ Much better.

Step 3: Try SMOTE

- Result: Catches 75% of fraud. ✅ Even better.

Step 4: Combine SMOTE + class_weight

- Result: Catches 78% of fraud. ✅ Best.
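A rough way to run the first two steps yourself is sketched below. Everything in it is an assumption made for illustration: the data is synthetic (`make_classification` with about 1% positives), and the model is `LogisticRegression` rather than a random forest, chosen only because it trains quickly. The recall values it prints will not match the percentages above.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the fraud data: 10,000 rows, about 1% positives.
X, y = make_classification(
    n_samples=10_000, n_features=10, n_informative=5,
    weights=[0.99, 0.01], random_state=42,
)

# Stratify so the test split keeps the same fraud rate as the training split.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=42
)

def fraud_recall(model):
    """Fit on the training split and return recall on the untouched test split."""
    model.fit(X_train, y_train)
    return recall_score(y_test, model.predict(X_test))

recall_plain = fraud_recall(LogisticRegression(max_iter=1000))
recall_weighted = fraud_recall(
    LogisticRegression(max_iter=1000, class_weight="balanced")
)

print(f"recall without balancing: {recall_plain:.2f}")
print(f"recall with class_weight: {recall_weighted:.2f}")
```

Whatever method you compare, measure it on a test split that was never resampled, so the reported recall reflects the real class ratio.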

Important Note #

Balancing data does not guarantee success. If fraud looks exactly like normal transactions, no method will work. You need good features first.

Quick Quiz #

Q1: Your model has 98% accuracy but catches only 10% of fraud. What is wrong?

A1: Imbalanced dataset. Accuracy is lying. Check precision and recall instead.

Q2: You have 1 million normal and 1,000 fraud. Which method is fastest?

A2: Undersampling. Delete 999,000 normal. Keep 1,000 of each.

Q3: You have 500 normal and 500 fraud. Is your dataset balanced?

A3: Yes. Perfect balance 1:1. No need for these methods.

Q4: Which method creates brand new fake data?

A4: SMOTE. It creates synthetic minority examples.