Bias Variance Tradeoff #

The bias-variance tradeoff is one of the most important concepts in machine learning. Every machine learning model makes mistakes, but why? Apart from noise in the data, there are usually two reasons: either the model is too simple to capture the patterns in the data, or it is too sensitive to small changes in the training set. You cannot eliminate both completely, so you must find the right balance. Master the bias-variance tradeoff, and you master a core part of machine learning.

The Core Idea (One Paragraph) #

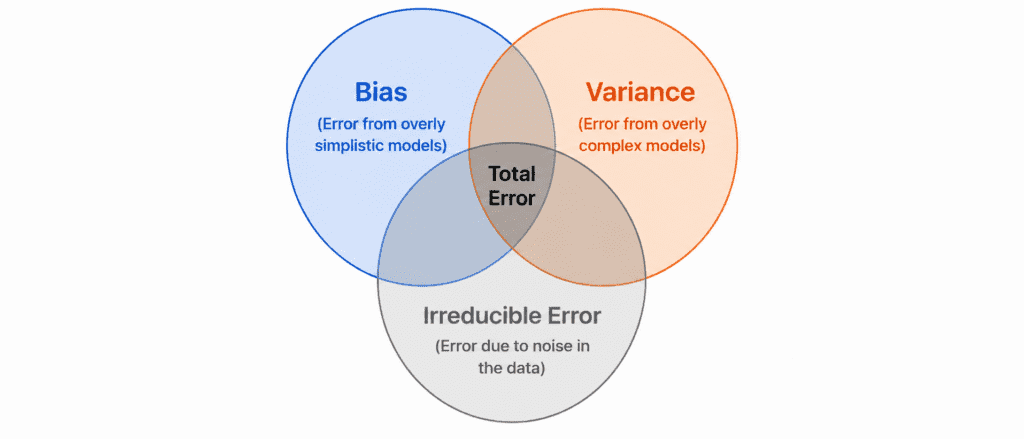

When your model makes a prediction error, that error comes from three sources. One is unavoidable (noise in the data). The other two are under your control. Bias is the error from wrong assumptions. Variance is the error from sensitivity to the particular training data. For a fixed dataset, you usually cannot push both to their minimum at once: making a model more flexible lowers bias but tends to raise variance, and making it simpler does the reverse. Your job is to find the sweet spot.

The Three Sources of Error #

| Error Type | Source | Can we fix? |
|---|---|---|
| Bias | Wrong assumptions about the data | ✅ Yes |
| Variance | Sensitivity to training data fluctuations | ✅ Yes |
| Irreducible Error | Noise in the data itself (always present) | ❌ No |

Total Error = Bias² + Variance + Irreducible Error
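Written out more formally, the formula above is the standard decomposition of expected squared error. The notation below is an assumption added for illustration and does not appear in the original: the data follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, $\hat{f}$ is the model fitted on a random training set, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
  + \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{Variance}}
  + \underbrace{\sigma^2}_{\text{Irreducible Error}}
```

Bias enters squared because it is a signed average offset, while variance and noise are already squared quantities.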

Understanding Bias (Simple Explanation) #

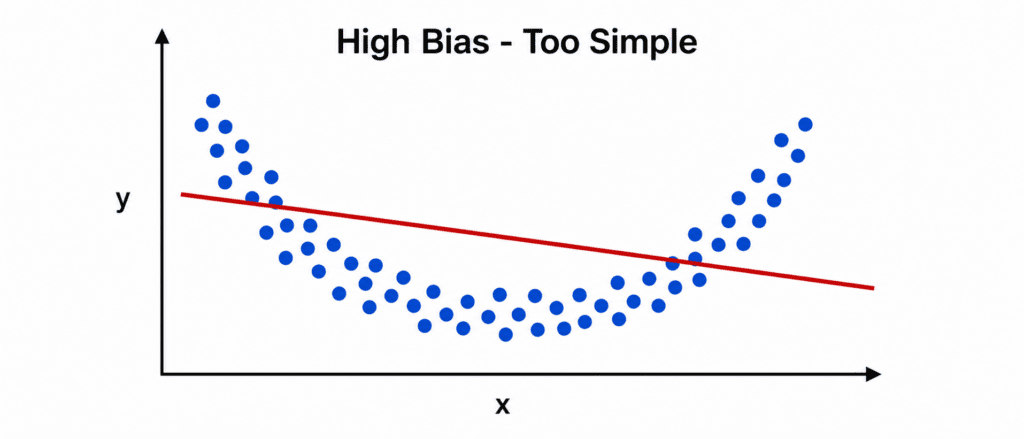

Definition: Bias is the error from thinking the world is simpler than it really is.

Example: You assume house prices depend only on house size. So you draw a straight line. But in reality, location, age, condition, and season also matter. Your straight line will never be accurate. Your model has high bias.

Characteristics of High Bias:

| Sign | What it means |
|---|---|
| Model is too simple | Linear model for curved data |
| Training error is high | Cannot learn even the training data |
| Validation error is also high | Generalization is also poor |
| Both errors are close | No gap because both are bad |

High Bias = Underfitting

Real World Example: Trying to predict a child’s height at age 18 using only their height at age 1. That is too little information, so the predictions will be systematically off no matter how much data you collect.
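The house price example can be sketched in a few lines of NumPy. This is a minimal illustration on synthetic data: the feature ranges, the coefficients, and the `rmse` helper are all made up here, not taken from any real dataset. A model that only sees house size shows the signs listed above (training and validation error both high and close together), while a model that also sees location gets close to the noise level.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 400

# Synthetic houses; every number here is made up for illustration.
size = rng.uniform(50, 200, n)       # floor area
location = rng.uniform(0, 10, n)     # location score
NOISE_STD = 10.0
price = 1.5 * size + 30 * location + rng.normal(0, NOISE_STD, n)

# First 300 rows for training, last 100 for validation.
train, val = slice(0, 300), slice(300, n)

def rmse(features):
    """Fit least squares on the training rows, return (train, val) RMSE."""
    A = np.column_stack([features, np.ones(n)])  # add an intercept column
    coef, *_ = np.linalg.lstsq(A[train], price[train], rcond=None)
    err = A @ coef - price
    return (float(np.sqrt(np.mean(err[train] ** 2))),
            float(np.sqrt(np.mean(err[val] ** 2))))

# High bias: the model assumes price depends on size only.
size_only = rmse(size)
# Lower bias: the model is also given the location feature.
size_and_location = rmse(np.column_stack([size, location]))

print(f"size only:       train RMSE {size_only[0]:.1f}, val RMSE {size_only[1]:.1f}")
print(f"size + location: train RMSE {size_and_location[0]:.1f}, "
      f"val RMSE {size_and_location[1]:.1f}")
```

Collecting more rows would not help the size-only model much, because the missing information is in the features, not in the number of examples.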

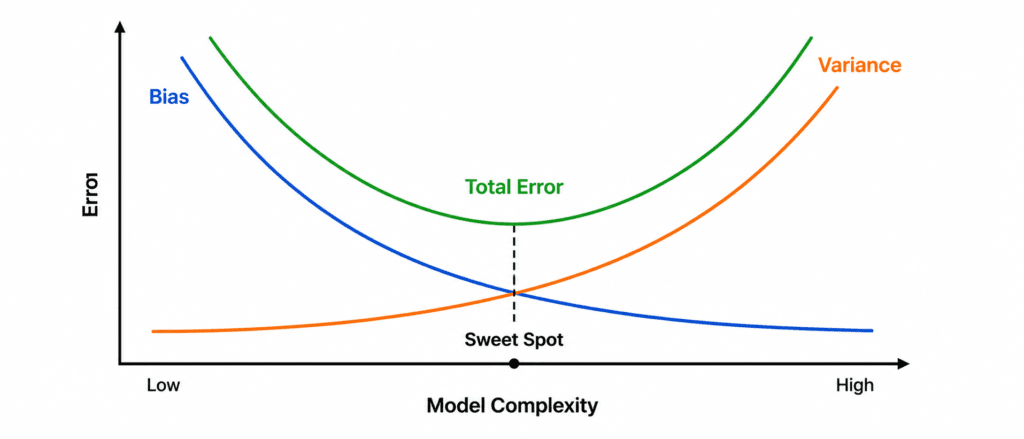

The Tradeoff Explained (The Core) #

For a fixed amount of training data, you generally cannot minimize bias and variance at the same time.

| Model Complexity | Bias | Variance | Total Error |
|---|---|---|---|
| Very Simple (e.g., Linear) | Very High | Very Low | High |
| Simple | High | Low | Medium |
| Medium (Sweet Spot) | Medium | Medium | Lowest |
| Complex | Low | High | Medium |
| Very Complex (e.g., Deep Tree) | Very Low | Very High | High |

Why the tradeoff exists:

- Simple models make strong assumptions (high bias). They are stable (low variance).

- Complex models make fewer assumptions (low bias). They are unstable (high variance).

You must choose. There is no perfect model with zero bias and zero variance.
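One way to see the tradeoff numerically is to refit the same model on many noisy training sets and measure how the predictions behave. The sketch below makes several assumptions that are not in the original: the true function is a sine wave, the noise level is 0.3, the training inputs are fixed so that only the noise changes between training sets, and model complexity is represented by polynomial degree.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)

def true_f(x):
    # Assumed "true" function for this illustration.
    return np.sin(2 * np.pi * x)

NOISE_STD = 0.3
N_REPEATS = 200
x_train = np.linspace(0, 1, 30)       # fixed inputs; only the noise changes
x_test = np.linspace(0.05, 0.95, 50)

def bias_variance(degree):
    """Estimate bias^2 and variance of a polynomial fit of the given degree."""
    preds = np.empty((N_REPEATS, x_test.size))
    for i in range(N_REPEATS):
        # Draw a fresh noisy training set and refit the model.
        y_train = true_f(x_train) + rng.normal(0, NOISE_STD, x_train.size)
        preds[i] = Polynomial.fit(x_train, y_train, deg=degree)(x_test)
    mean_pred = preds.mean(axis=0)
    # Bias^2: how far the average prediction is from the truth.
    bias_sq = np.mean((mean_pred - true_f(x_test)) ** 2)
    # Variance: how much predictions move between training sets.
    variance = np.mean(preds.var(axis=0))
    return float(bias_sq), float(variance)

results = {degree: bias_variance(degree) for degree in (1, 3, 9)}
for degree, (bias_sq, variance) in results.items():
    print(f"degree {degree}: bias^2 = {bias_sq:.4f}, variance = {variance:.4f}")
```

In this setup the straight line should show the largest bias and the smallest variance, and the degree-9 polynomial the reverse, which mirrors the first and last rows of the table.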

Real Example: House Price Prediction #

You are building a model to predict house prices.

| Model | Bias | Variance | Result |
|---|---|---|---|
| Linear Regression | High (assumes straight line) | Low (stable) | Underfits. Misses important patterns. |
| Shallow Decision Tree (depth 3) | Medium | Medium | Decent but could be better. |
| Deep Decision Tree (depth 50) | Very Low (can fit any pattern) | Very High (changes with tiny data changes) | Overfits. Perfect on training, useless on new houses. |
| Random Forest (100 trees) | Low | Medium-Low | ✅ Usually the best of these four. Balances bias and variance. |

Why Random Forest wins:

- Individual trees have low bias but high variance

- Averaging 100 trees reduces variance

- But keeps low bias from individual trees
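The averaging argument can be checked with a small experiment: train a single deep tree and a 100-tree forest on many independently drawn training sets, then compare how much their predictions vary. This is a sketch on synthetic one-dimensional data; the sine target, the noise level, and the `prediction_stats` helper are assumptions made for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)

def true_f(x):
    # Assumed "true" function for this illustration.
    return np.sin(3 * x)

def sample_training_set(n=80):
    x = rng.uniform(0, 3, size=(n, 1))
    y = true_f(x[:, 0]) + rng.normal(0, 0.3, size=n)
    return x, y

x_test = np.linspace(0.1, 2.9, 40).reshape(-1, 1)

def prediction_stats(make_model, n_repeats=30):
    """Refit on fresh training sets; return (bias^2, variance) estimates."""
    preds = np.empty((n_repeats, len(x_test)))
    for i in range(n_repeats):
        x_train, y_train = sample_training_set()
        preds[i] = make_model(i).fit(x_train, y_train).predict(x_test)
    bias_sq = np.mean((preds.mean(axis=0) - true_f(x_test[:, 0])) ** 2)
    variance = np.mean(preds.var(axis=0))
    return float(bias_sq), float(variance)

tree_bias_sq, tree_var = prediction_stats(
    lambda seed: DecisionTreeRegressor(random_state=seed))
forest_bias_sq, forest_var = prediction_stats(
    lambda seed: RandomForestRegressor(n_estimators=100, random_state=seed))

print(f"single tree: bias^2 = {tree_bias_sq:.4f}, variance = {tree_var:.4f}")
print(f"forest:      bias^2 = {forest_bias_sq:.4f}, variance = {forest_var:.4f}")
```

The forest's variance should come out clearly lower than the single tree's. The bias estimates are noisier with only 30 repeats, so treat them as rough.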

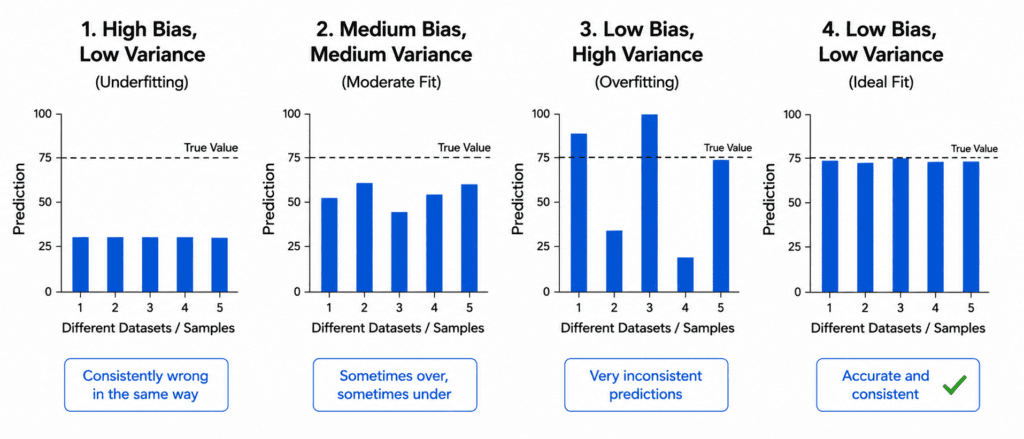

The Relationship to Overfitting and Underfitting #

| Condition | Bias | Variance | ML Term |
|---|---|---|---|
| Model too simple | High | Low | Underfitting |
| Model too complex | Low | High | Overfitting |
| Perfect balance | Medium | Medium | Good Fit |

Simple Translation:

| Problem | In Bias-Variance terms | Simple Fix |
|---|---|---|
| Underfitting | High Bias | Increase model complexity |
| Overfitting | High Variance | Decrease model complexity or add more data |

How to Diagnose Bias vs Variance #

Look at your learning curves.

High Bias (Underfitting):

- Training error starts high and stays high

- Validation error also high

- Both curves are close together

- Neither reaches desired performance

High Variance (Overfitting):

- Training error goes very low

- Validation error starts high and may even increase

- Large gap between curves

- Gap gets wider as training progresses

Sweet Spot:

- Training error low

- Validation error low

- Small gap between curves

- Both curves flatten at good value
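The curves described above can be computed with scikit-learn's `learning_curve`, which refits a model on growing subsets of the training data and scores each fit with cross-validation. The sketch below uses the synthetic `make_friedman1` dataset and two decision trees chosen to land on opposite sides of the tradeoff; the dataset, the depths, and the `curves` helper are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeRegressor

# Synthetic regression data, used only for illustration.
X, y = make_friedman1(n_samples=600, noise=1.0, random_state=0)

def curves(model):
    """Return training sizes with mean train and validation MSE at each size."""
    sizes, train_scores, val_scores = learning_curve(
        model, X, y,
        train_sizes=np.linspace(0.2, 1.0, 5),
        cv=5,
        scoring="neg_mean_squared_error",
    )
    # Scores are negated MSE, so flip the sign to get errors.
    return sizes, -train_scores.mean(axis=1), -val_scores.mean(axis=1)

models = {
    "shallow": DecisionTreeRegressor(max_depth=1, random_state=0),  # likely high bias
    "deep": DecisionTreeRegressor(random_state=0),                  # likely high variance
}

final = {}
for name, model in models.items():
    sizes, train_mse, val_mse = curves(model)
    final[name] = (float(train_mse[-1]), float(val_mse[-1]))
    print(name)
    for n, tr, va in zip(sizes, train_mse, val_mse):
        print(f"  n={int(n):4d}  train MSE={tr:6.2f}  val MSE={va:6.2f}")
```

For the shallow tree, both columns should stay high and close together. For the unrestricted tree, training MSE should sit near zero with a large gap to validation MSE.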

How to Fix Bias Problems (Underfitting) #

| Fix | Why it works |
|---|---|
| Add more features | Gives model more information |
| Use polynomial features | Captures non-linear relationships |
| Increase model complexity | Switch from linear to random forest to neural network |
| Reduce regularization | Less constraint on weights |
| Train longer | For iterative models like neural networks |

```python
# Too simple (High Bias)
from sklearn.linear_model import LinearRegression
model = LinearRegression()

# Better (Lower Bias)
from sklearn.ensemble import RandomForestRegressor
model = RandomForestRegressor(n_estimators=100, max_depth=10)
```
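The "Use polynomial features" row can be illustrated without switching model family. The sketch below assumes synthetic data with a quadratic relationship (made up for this example) and compares a plain linear regression with the same regression applied to degree-2 polynomial features.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

rng = np.random.default_rng(0)

# Synthetic curved data: y = x^2 plus noise (illustrative only).
X = rng.uniform(-3, 3, size=(400, 1))
y = X[:, 0] ** 2 + rng.normal(0, 0.5, size=400)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0)

# High bias: a straight line cannot follow the curve.
linear = LinearRegression().fit(X_train, y_train)

# Lower bias: same linear model, but on [1, x, x^2] features.
poly = make_pipeline(PolynomialFeatures(degree=2), LinearRegression())
poly.fit(X_train, y_train)

linear_val_r2 = linear.score(X_val, y_val)
poly_val_r2 = poly.score(X_val, y_val)
print(f"linear     validation R^2: {linear_val_r2:.3f}")
print(f"polynomial validation R^2: {poly_val_r2:.3f}")
```

The degree is itself a complexity knob: raising it far beyond what the data need would start trading the bias problem for a variance problem.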

How to Fix Variance Problems (Overfitting) #

| Fix | Why it works |
|---|---|
| Get more training data | Model sees more examples, cannot memorize |
| Simplify model | Fewer parameters, less flexibility to fit noise |
| Add regularization | Penalizes complex patterns |
| Early stopping | Stops before model starts fitting noise |
| Reduce features | Remove irrelevant or noisy features |
| Ensemble methods | Averaging reduces variance |

```python
# Too complex (High Variance)
from sklearn.tree import DecisionTreeRegressor
model = DecisionTreeRegressor(max_depth=None)

# Better (Lower Variance)
model = DecisionTreeRegressor(
    max_depth=5,           # Simpler tree
    min_samples_split=10,  # Regularization
    min_samples_leaf=5     # Regularization
)

# Even Better (Ensemble reduces variance)
from sklearn.ensemble import RandomForestRegressor
model = RandomForestRegressor(n_estimators=100, max_depth=5)
```
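To check whether these changes actually reduce overfitting, compare the gap between training and validation error for each of the three models. The sketch below reuses the same hyperparameters on the synthetic `make_friedman1` dataset; the dataset and the split are assumptions for illustration, and results on real data may differ.

```python
from sklearn.datasets import make_friedman1
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic regression data, used only for illustration.
X, y = make_friedman1(n_samples=600, noise=1.0, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0)

models = {
    "deep tree": DecisionTreeRegressor(max_depth=None, random_state=0),
    "constrained tree": DecisionTreeRegressor(
        max_depth=5, min_samples_split=10, min_samples_leaf=5, random_state=0),
    "random forest": RandomForestRegressor(
        n_estimators=100, max_depth=5, random_state=0),
}

gaps = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    train_mse = mean_squared_error(y_train, model.predict(X_train))
    val_mse = mean_squared_error(y_val, model.predict(X_val))
    # A large positive gap is the usual sign of high variance.
    gaps[name] = val_mse - train_mse
    print(f"{name:17s} train MSE={train_mse:6.2f}  "
          f"val MSE={val_mse:6.2f}  gap={gaps[name]:6.2f}")
```

The unrestricted tree should show near-zero training error and the largest gap. The constrained tree and the forest give up some training accuracy in exchange for a smaller gap.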

Quick Quiz #

Q1: Your training error is 30%. Validation error is 32%. What is the problem?

A1: High Bias (Underfitting). Both errors are high and close together.

Q2: Your training error is 2%. Validation error is 35%. What is the problem?

A2: High Variance (Overfitting). Large gap between training and validation.

Q3: You increase model complexity. Training error drops from 40% to 20%. Validation error drops from 42% to 25%. Did you improve?

A3: Yes. You reduced bias. Both errors went down. Gap remained small. You moved toward the sweet spot.

Q4: You increase model complexity further. Training error drops from 20% to 5%. Validation error increases from 25% to 40%. What happened?

A4: You started overfitting. You increased variance too much. Go back to previous complexity level.

Q5: You have unlimited computing power and unlimited data. Can you eliminate both bias and variance?

A5: Not completely. Irreducible error (noise in the data) always remains, whatever the model. With unlimited data you can push variance to near zero, but bias depends on your model choice, so choose a model flexible enough to represent the true function.
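The reasoning behind answers A1, A2, and A4 can be sketched as a small rule-of-thumb helper. The function name `diagnose` and both thresholds are hypothetical choices made for this example. What counts as a "high" error or a "large" gap depends on the problem, so treat the defaults as placeholders.

```python
def diagnose(train_error, val_error, target_error=0.10, max_gap=0.05):
    """Rule-of-thumb diagnosis from error rates given as fractions (0 to 1).

    target_error and max_gap are hypothetical thresholds; tune them
    to what counts as acceptable for your own problem.
    """
    gap = val_error - train_error
    # Check the gap first: a large gap points to overfitting,
    # even if the training error is also not great.
    if gap > max_gap:
        return "high variance (overfitting)"
    if train_error > target_error:
        return "high bias (underfitting)"
    return "good fit"

print(diagnose(0.30, 0.32))  # Q1: both errors high and close together
print(diagnose(0.02, 0.35))  # Q2: large gap between training and validation
print(diagnose(0.05, 0.40))  # Q4: after increasing complexity too far
```

A model can suffer from both problems at once. This helper reports only the gap in that case, so it is a starting point for reading learning curves, not a replacement for them.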

The Wisdom in One Sentence #

“Simple models are wrong but stable. Complex models are right but fragile. Your job is to be wrong enough to be stable, right enough to be useful.”