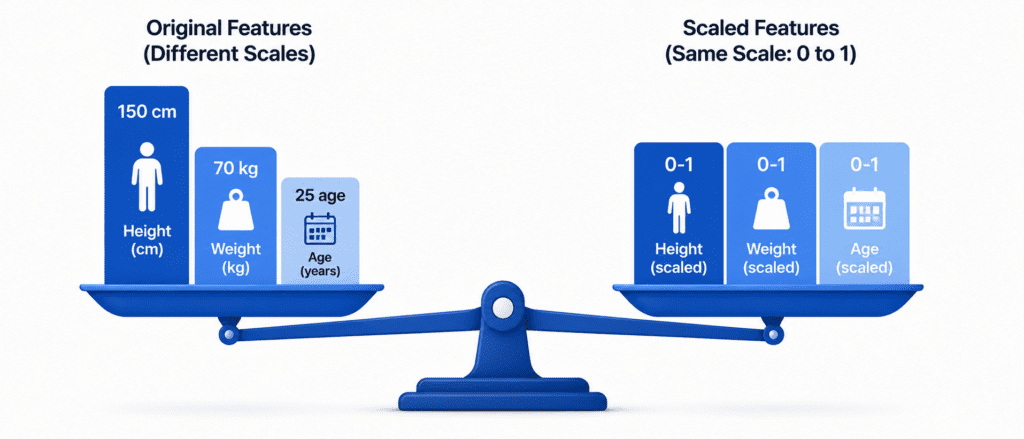

“Imagine comparing a person’s height in centimeters with their weight in kilograms. The numbers are on completely different scales. One is around 170. The other is around 70. Many ML models are thrown off by this. They treat the feature with the bigger numbers as more important. Feature scaling fixes this. It puts all features on the same playing field.”

What is Feature Scaling? #

Feature scaling transforms numerical features so that they have similar ranges.

Why it matters:

| Without Scaling | With Scaling |
|---|---|
| Distance-based models (KNN, SVM) favor features with larger numbers | All features contribute equally |
| Gradient descent takes longer to converge | Faster convergence |
| Regularization penalizes features unevenly, depending on their units | Fair penalization |
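To make the first row concrete, here is a minimal NumPy sketch. The five people and their ages and salaries are made-up illustration data, and `salary_share_of_distance` is a hypothetical helper name. It measures how much of the squared Euclidean distance between two people comes from the salary column, before and after standardizing.

```python
import numpy as np

# Hypothetical people: columns are [age in years, salary in dollars]
people = np.array([
    [25.0, 50_000.0],
    [60.0, 52_000.0],
    [40.0, 90_000.0],
    [33.0, 61_000.0],
    [52.0, 75_000.0],
])

def salary_share_of_distance(a, b):
    """Fraction of the squared Euclidean distance contributed by salary."""
    squared_diffs = (a - b) ** 2
    return squared_diffs[1] / squared_diffs.sum()

# Raw features: the 35-year age gap is drowned out by a $2,000 salary gap
raw_share = salary_share_of_distance(people[0], people[1])

# Standardize each column (mean 0, std 1), then compare the same two people
scaled = (people - people.mean(axis=0)) / people.std(axis=0)
scaled_share = salary_share_of_distance(scaled[0], scaled[1])

print(f"Salary share of squared distance, raw:    {raw_share:.4f}")
print(f"Salary share of squared distance, scaled: {scaled_share:.4f}")
```

On the raw data, nearly all of the distance comes from salary, even though the two people differ far more in age relative to the rest of the group. After scaling, the age gap dominates.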

Which models need scaling:

| Model Type | Needs Scaling? | Why |
|---|---|---|
| Linear Regression | ✅ Yes | Faster gradient descent and fair regularization (a closed-form ordinary least squares fit does not need it) |
| Logistic Regression | ✅ Yes | Same reason as above |
| SVM | ✅ Yes | Distance-based |
| KNN | ✅ Yes | Distance-based |
| Neural Networks | ✅ Yes | Gradient descent |
| PCA | ✅ Yes | Variance-based |
| Decision Trees | ❌ No | Splits on one feature at a time, not distance-based |
| Random Forest | ❌ No | Tree-based, not distance-based |
| Gradient Boosting | ❌ No | Tree-based, not distance-based |
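The tree rows can be checked empirically. The sketch below uses synthetic age and salary data with an invented labeling rule, both of which are assumptions for illustration. It fits the same scikit-learn decision tree on raw and standardized features and compares the predictions.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (illustration only): age in years, salary in dollars
rng = np.random.default_rng(0)
age = rng.integers(18, 91, size=200).astype(float)
salary = rng.uniform(30_000, 200_000, size=200)
X = np.column_stack([age, salary])
y = (salary + 1_000 * age > 150_000).astype(int)  # made-up labeling rule

X_scaled = StandardScaler().fit_transform(X)

# Same hyperparameters and seed; only the feature scale differs
tree_raw = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X, y)
tree_scaled = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X_scaled, y)

same_predictions = np.array_equal(tree_raw.predict(X), tree_scaled.predict(X_scaled))
print("Identical predictions:", same_predictions)
```

A tree only asks whether a single feature is above or below a threshold, so rescaling a feature moves the threshold without changing which samples fall on each side.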

The Three Main Scalers #

| Scaler | What it does | Formula | Best for |
|---|---|---|---|
| MinMaxScaler | Scales to fixed range (default 0 to 1) | (x - min) / (max - min) | Known bounded ranges (pixels 0-255) |
| StandardScaler | Makes mean=0, std=1 | (x - mean) / std | Most ML models (default choice) |
| RobustScaler | Uses median and quartiles | (x - median) / IQR | Data with outliers |

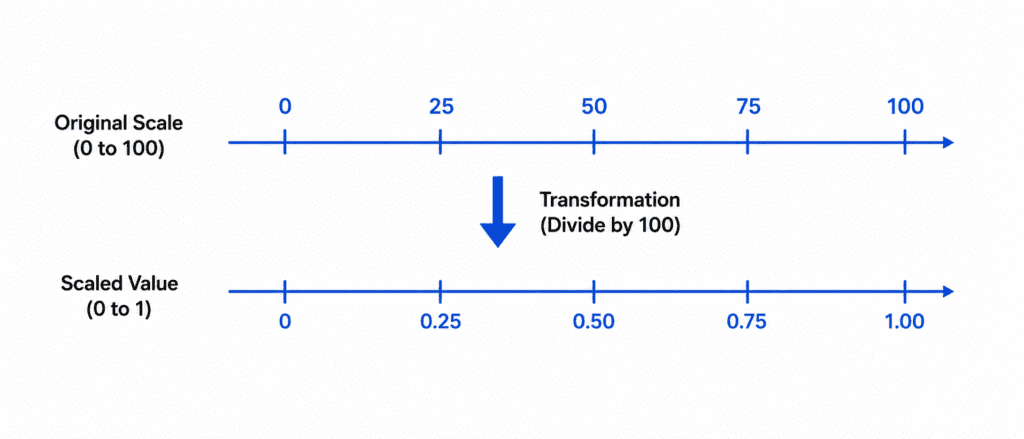

1. MinMaxScaler (Range Scaling) #

What it does: Shrinks or expands values to fit in a range (default 0 to 1).

The Formula:

X_scaled = (X - X_min) / (X_max - X_min)

Example:

| Original | Calculation | Scaled |
|---|---|---|
| 0 | (0 - 0) / (100 - 0) | 0.00 |
| 25 | (25 - 0) / (100 - 0) | 0.25 |
| 50 | (50 - 0) / (100 - 0) | 0.50 |
| 75 | (75 - 0) / (100 - 0) | 0.75 |
| 100 | (100 - 0) / (100 - 0) | 1.00 |
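The table can be reproduced by hand in a few lines of NumPy. This is a sketch of the formula rather than scikit-learn's implementation, and `min_max_scale` is a hypothetical helper. It assumes the input is not constant, since otherwise max - min is zero. The second call stretches the 0-to-1 result to a custom range of -1 to 1.

```python
import numpy as np

def min_max_scale(x, feature_range=(0.0, 1.0)):
    """Rescale x linearly so its min and max land on feature_range."""
    x = np.asarray(x, dtype=float)
    low, high = feature_range
    unit = (x - x.min()) / (x.max() - x.min())  # now in [0, 1]
    return unit * (high - low) + low            # stretch and shift

values = [0, 25, 50, 75, 100]

scaled_01 = min_max_scale(values)
scaled_pm1 = min_max_scale(values, feature_range=(-1.0, 1.0))

print(scaled_01)   # [0.   0.25 0.5  0.75 1.  ]
print(scaled_pm1)  # [-1.  -0.5  0.   0.5  1. ]
```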

When to use:

- Pixel values (always 0 to 255)

- When you need values in an exact range (e.g., -1 to 1 to match the output range of a tanh activation in a neural network)

- When data has NO outliers (min and max are meaningful)

When NOT to use:

- Data has outliers (one extreme value will squash all other values)

from sklearn.preprocessing import MinMaxScaler

scaler = MinMaxScaler(feature_range=(0, 1))  # Default
X_scaled = scaler.fit_transform(X)

# For range -1 to 1 (good for neural networks)
scaler = MinMaxScaler(feature_range=(-1, 1))
X_scaled = scaler.fit_transform(X)

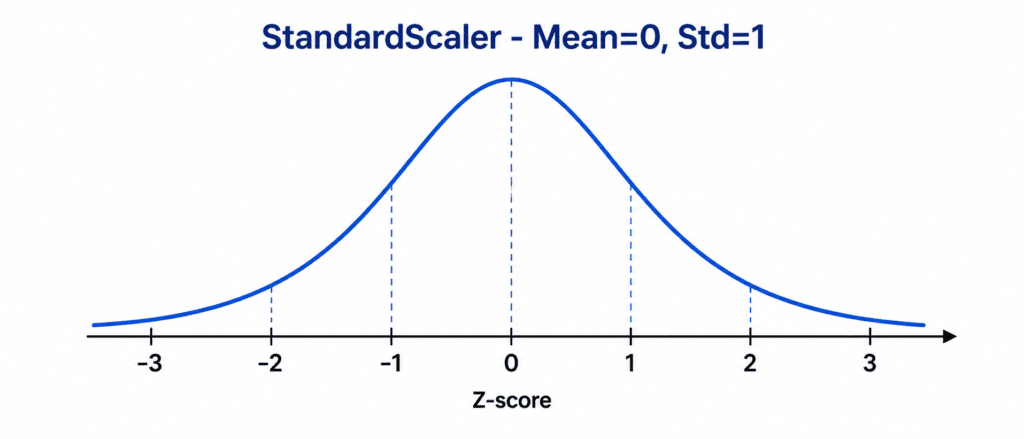

2. StandardScaler (Z-Score Normalization) #

What it does: Centers data at mean=0, scales to standard deviation=1.

The Formula:

X_scaled = (X - μ) / σ

Where: μ = mean (average), σ = standard deviation

Example:

| Original | (X - mean) | Divided by std | Scaled |
|---|---|---|---|
| 10 | -30 | / 20 | -1.5 |
| 30 | -10 | / 20 | -0.5 |
| 40 (mean) | 0 | / 20 | 0 |
| 50 | 10 | / 20 | 0.5 |
| 70 | 30 | / 20 | 1.5 |

Result: mean = 0, standard deviation = 1
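The worked example can be verified with NumPy. One detail worth checking is which standard deviation gives 20: it is the population standard deviation (`ddof=0`), which is also what `StandardScaler` uses. The sample standard deviation (`ddof=1`) would give a slightly different result.

```python
import numpy as np

values = np.array([10.0, 30.0, 40.0, 50.0, 70.0])

mean = values.mean()  # 40.0
std = values.std()    # population std (ddof=0): 20.0
z_scores = (values - mean) / std

print(z_scores)  # [-1.5 -0.5  0.   0.5  1.5]

# For comparison: the sample std divides by n - 1 instead of n
sample_std = values.std(ddof=1)  # about 22.36
print(round(sample_std, 2))
```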

When to use:

- Most ML models (default choice)

- Data follows (or roughly follows) a normal distribution

- You do not know the expected range of values

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()
X_scaled = scaler.fit_transform(X)

# Access the learned parameters
print(f"Mean: {scaler.mean_}")
print(f"Standard Deviation: {scaler.scale_}")

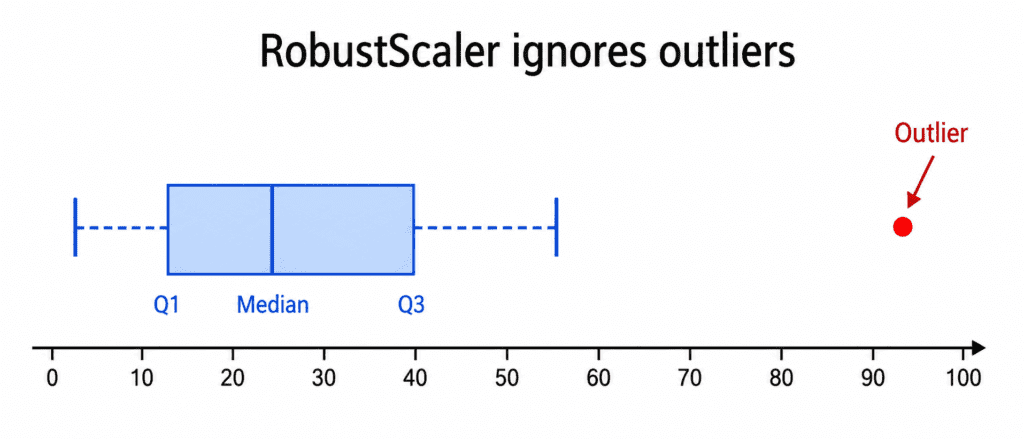

3. RobustScaler (Outlier Resistant) #

What it does: Uses the median and the Interquartile Range (IQR). Outliers have very little effect on either statistic, so they barely change the scaling.

The Formula:

X_scaled = (X - median) / IQR

Where: IQR = Q3 - Q1 (75th percentile - 25th percentile)

Why it works:

| Statistic | Affected by outliers? |
|---|---|
| Mean | ✅ Yes (one extreme value pulls mean) |
| Standard Deviation | ✅ Yes (extreme values increase it) |
| Median | ❌ No (middle value, unaffected) |
| IQR | ❌ No (based on percentiles) |

Example with Outlier:

Dataset: [10, 12, 14, 16, 18, 1000]

| Scaler | Result | Problem? |
|---|---|---|
| MinMaxScaler | Everything between 0 and 1, but normal values crushed near 0 | ✅ Yes |
| StandardScaler | Mean pulled up by 1000, so normal values all land in a narrow negative band | ✅ Yes |
| RobustScaler | Median and IQR barely move, so normal values are scaled properly (the outlier stays large) | ❌ No |
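The three rows can be checked by applying each formula to the dataset by hand. This is a NumPy sketch of the formulas, not the scikit-learn classes. It assumes NumPy's default linear-interpolation percentiles for Q1 and Q3.

```python
import numpy as np

x = np.array([10.0, 12.0, 14.0, 16.0, 18.0, 1000.0])

# Min-max: the outlier defines the max, so the rest land near 0
minmax = (x - x.min()) / (x.max() - x.min())

# Standard: the outlier inflates both mean and std
standard = (x - x.mean()) / x.std()

# Robust: median = 15, Q1 = 12.5, Q3 = 17.5, so IQR = 5
q1, median, q3 = np.percentile(x, [25, 50, 75])
robust = (x - median) / (q3 - q1)

print("MinMax:  ", minmax.round(4))
print("Standard:", standard.round(3))
print("Robust:  ", robust.round(2))  # [ -1.   -0.6  -0.2   0.2   0.6 197. ]
```

With min-max scaling the five normal values all fall below 0.01, and with standardization they sit within a few hundredths of each other around -0.45. Robust scaling spreads them evenly from -1 to 0.6. The outlier is still extreme afterwards (197). It just no longer dictates how everything else is scaled.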

When to use:

- Data has outliers you cannot remove

- You want to be robust to extreme values

from sklearn.preprocessing import RobustScaler

scaler = RobustScaler()
X_scaled = scaler.fit_transform(X)

Comparison Table #

| Feature | MinMaxScaler | StandardScaler | RobustScaler |
|---|---|---|---|
| Range | Fixed (0 to 1 or -1 to 1) | Unbounded (roughly -3 to +3 for near-normal data) | Unbounded |
| Uses mean | No | Yes | No |
| Uses median | No | No | Yes |
| Affected by outliers | Yes (very sensitive) | Yes | Barely (robust) |
| Maps 0 to 0 | Only if min = 0 | Only if mean = 0 | Only if median = 0 |
| Default choice | For images, bounded data | ✅ For most ML models | For data with outliers |

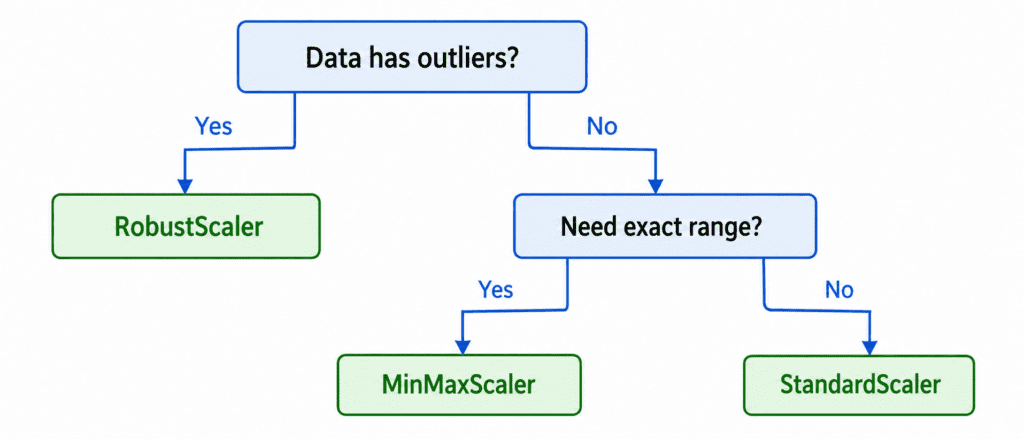

Which One Should You Choose? (Decision Guide) #

Simple Rule of Thumb:

| Scenario | Choice |
|---|---|
| Not sure what to use | StandardScaler ✅ |
| Data has obvious outliers | RobustScaler ✅ |
| Working with images (pixels 0-255) | MinMaxScaler (0 to 1) ✅ |
| Neural network with tanh activation | MinMaxScaler (-1 to 1) ✅ |
| Decision trees or random forests | No scaling needed ✅ |

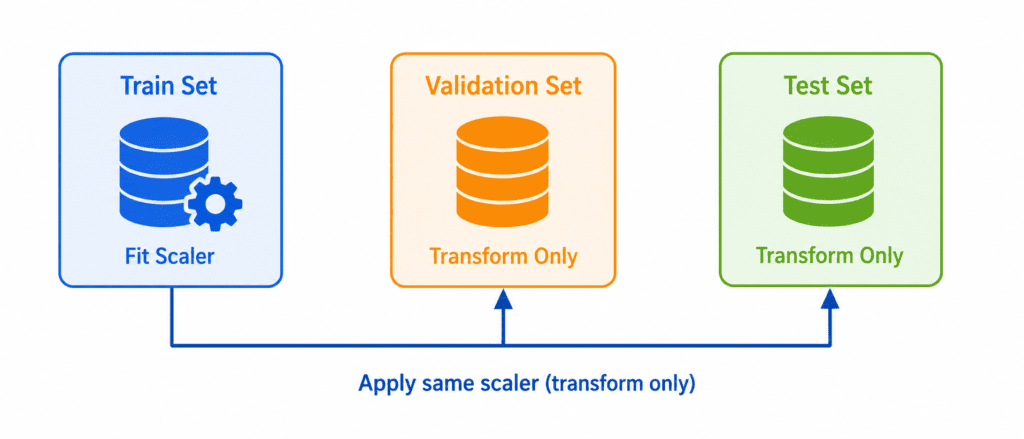

Important Rules (Must Know) #

| # | Rule |
|---|---|
| 1 | Fit on training data only. Never fit on the validation or test set. |
| 2 | Transform test data using the training scaler. Do not refit. |
| 3 | Split first, then scale (fit on train, transform both). |
| 4 | Scale the target variable only for regression, where it is sometimes helpful. Never scale class labels. |
| 5 | Do not scale categorical features. Only numerical features. |
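For rule 5, one possible approach is scikit-learn's `ColumnTransformer`, which applies a scaler to selected columns and passes the rest through untouched. The sketch below assumes a made-up array whose third column is a hypothetical integer-coded department. In practice a categorical column would usually be one-hot encoded rather than passed through.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import StandardScaler

# Hypothetical columns: age, salary, department code (categorical)
X = np.array([
    [25, 50_000, 0],
    [30, 60_000, 1],
    [35, 70_000, 2],
    [40, 80_000, 1],
    [45, 90_000, 0],
], dtype=float)

# Scale only the numerical columns (indices 0 and 1); leave the rest as-is
preprocess = ColumnTransformer(
    transformers=[("num", StandardScaler(), [0, 1])],
    remainder="passthrough",
)
X_out = preprocess.fit_transform(X)

print(X_out.round(2))
```

The transformed columns come first in the output, followed by the passed-through columns in their original order.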

Correct Way:

# CORRECT
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # Fit on train only
X_test_scaled = scaler.transform(X_test)        # Transform test using same scaler
X_val_scaled = scaler.transform(X_val)          # Transform validation using same scaler

Wrong Way:

# WRONG - Do not do this
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.fit_transform(X_test)  # WRONG! Different scaling!
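One way to make the fit-on-train-only rule hard to break is to put the scaler inside a scikit-learn pipeline. Calling `fit` on the pipeline then fits the scaler on the training data only, and `score` or `predict` reuses those learned parameters on new data. The sketch below is one possible setup, not the only one. The data and the labeling rule are synthetic assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (illustration only): age in years, salary in dollars
rng = np.random.default_rng(42)
age = rng.uniform(18, 90, size=300)
salary = rng.uniform(30_000, 200_000, size=300)
X = np.column_stack([age, salary])
y = (age > 50).astype(int)  # made-up label that depends on age only

# Split first, then let the pipeline handle scaling
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
model.fit(X_train, y_train)          # scaler is fit on X_train only
test_accuracy = model.score(X_test, y_test)  # X_test is transformed, not refit

# The scaler inside the pipeline learned its mean from the training rows
fitted_scaler = model.named_steps["standardscaler"]
print("Scaler mean:", fitted_scaler.mean_)
print("Test accuracy:", test_accuracy)
```

The same pipeline object can be passed to cross-validation utilities, which refit the scaler inside each training fold.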

Complete Code

import numpy as np
from sklearn.preprocessing import StandardScaler, MinMaxScaler, RobustScaler

# Sample data (age, salary, years of experience)
data = np.array([
    [25, 50000, 2],
    [30, 60000, 5],
    [35, 1000000, 8],  # Outlier in salary
    [40, 80000, 12],
    [45, 90000, 15]
])

print("Original Data:")
print(data)

# StandardScaler
std_scaler = StandardScaler()
data_std = std_scaler.fit_transform(data)
print("\nStandardScaler:")
print(data_std.round(2))

# MinMaxScaler
minmax_scaler = MinMaxScaler()
data_minmax = minmax_scaler.fit_transform(data)
print("\nMinMaxScaler (0 to 1):")
print(data_minmax.round(2))

# RobustScaler (notice salary outlier handled)
robust_scaler = RobustScaler()
data_robust = robust_scaler.fit_transform(data)
print("\nRobustScaler (outlier resistant):")
print(data_robust.round(2))

Quick Quiz #

Q1: You are building a KNN classifier. Your features are age (18-90), salary (30k-200k), and number of children (0-5). Which scaler should you use?

A1: StandardScaler. KNN is distance-based. Different scales will bias the model. StandardScaler is the safe default.

Q2: Your salary column has one person earning $10 million. Everyone else earns $40k-$80k. Which scaler should you use?

A2: RobustScaler. The outlier will ruin StandardScaler (mean pulled up) and MinMaxScaler (all normal values crushed near 0).

Q3: You are training a neural network with tanh activation (output range -1 to 1). Your pixel values are 0 to 255. Which scaler?

A3: MinMaxScaler with feature_range=(-1, 1). This centers the inputs around zero and puts them on the same scale as tanh's output range.
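Because the pixel bounds are known in advance, the same mapping can also be written directly from the formula, as in this minimal NumPy sketch with made-up pixel values. Note that it uses the fixed bounds 0 and 255 rather than the minimum and maximum observed in the data. It matches `MinMaxScaler(feature_range=(-1, 1))` only when the data actually contains both extremes.

```python
import numpy as np

# A few example pixel intensities (illustration only)
pixels = np.array([0, 64, 128, 255], dtype=np.uint8)

# (x - 0) / (255 - 0) maps to [0, 1]; multiplying by 2 and subtracting 1
# maps to [-1, 1]. Combined: x / 127.5 - 1
scaled = pixels.astype(np.float32) / 127.5 - 1.0

print(scaled)
```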

Q4: You accidentally fit StandardScaler on the entire dataset before train-test split. Is this wrong?

A4: Yes. Information from the test data leaked into training, so your test score may be too optimistic. Split first, then fit on train only.