Overfitting vs Underfitting #

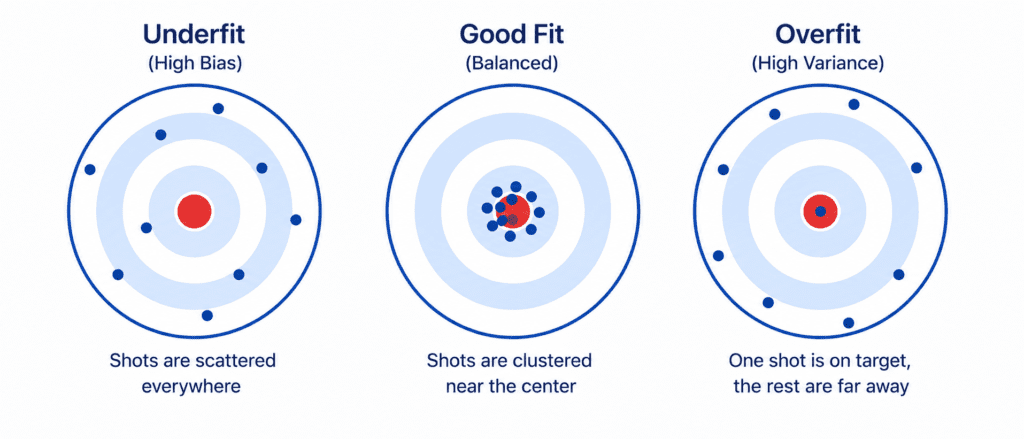

Overfitting and underfitting are two of the most common hurdles every machine learning developer faces. Think of your model as a student: it can either memorize the answers (overfitting) or not study enough (underfitting). Neither is good. You want the sweet spot in between.

The Simple Explanation #

| Problem | What Happens | Analogy |
|---|---|---|
| Underfitting | Model is too weak. Cannot learn the pattern. | Student who studies 5 minutes for a big exam. Fails. |
| Overfitting | Model is too complex. Learns noise, not signal. | Student who memorizes answers but does not understand. Fails on new questions. |
| Good Fit | Model learns the real pattern. Ignores noise. | Student who understands concepts. Scores well anywhere. |

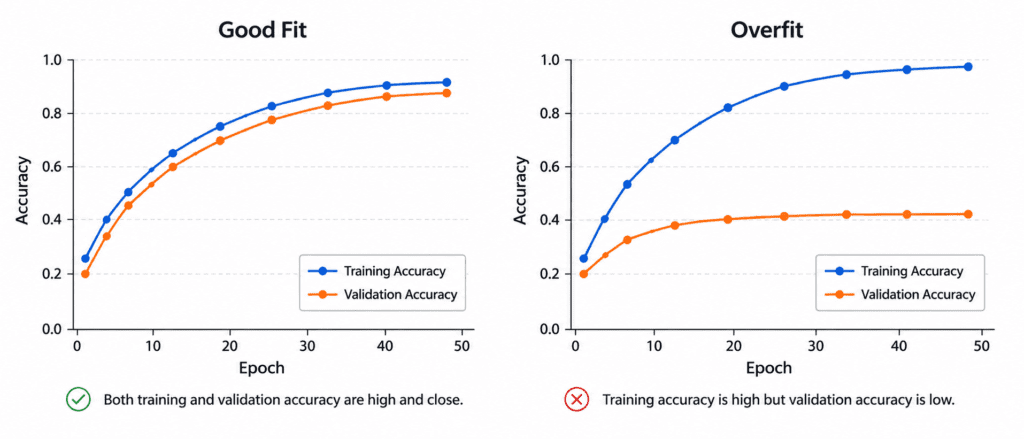

How to Diagnose (Look at the Gap) #

| Training Score | Validation Score | Gap | Diagnosis |
|---|---|---|---|
| Low | Low | Small | ❌ Underfitting |
| Very High | Low | Large | ❌ Overfitting |
| High | High | Small | ✅ Good Fit |

Simple Rule:

- Both bad = Underfitting

- Training great, validation bad = Overfitting

- Both good, small gap = Good fit
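The rule above can be sketched as a tiny function. This is a minimal illustration, not a standard recipe: the name `diagnose` and the cutoffs `good_score=0.80` and `max_gap=0.10` are assumptions made up for this example, and sensible values depend on your problem and metric.

```python
def diagnose(train_score, val_score, good_score=0.80, max_gap=0.10):
    """Classify a fit from training and validation scores in [0, 1].

    good_score and max_gap are hypothetical cutoffs; tune them per problem.
    """
    gap = train_score - val_score
    if gap > max_gap:
        # Training is much better than validation: the model memorized.
        return "overfitting"
    if train_score < good_score:
        # Small gap, but the model is weak even on data it has seen.
        return "underfitting"
    return "good fit"


print(diagnose(0.99, 0.55))  # overfitting
print(diagnose(0.60, 0.58))  # underfitting
print(diagnose(0.92, 0.90))  # good fit
```

The gap is checked first, so a model with a large gap is flagged as overfitting even if its training score is also mediocre.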

The Cures #

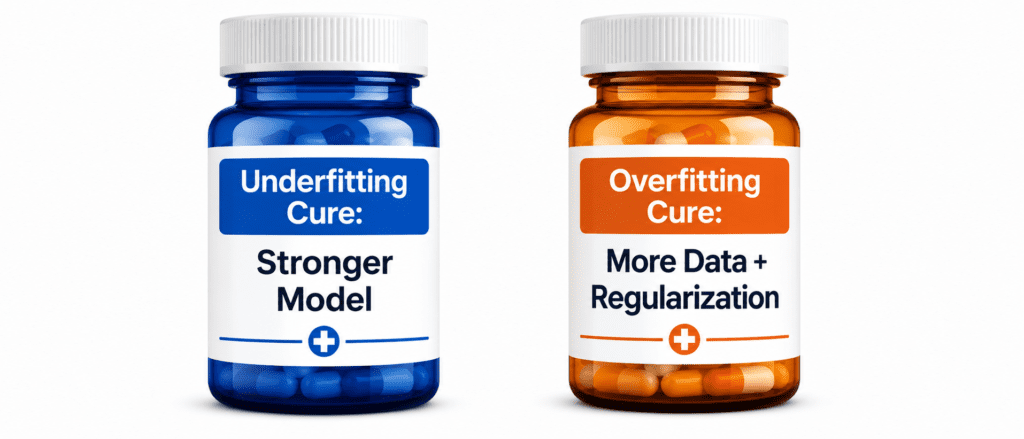

For Underfitting (Model is too weak):

| Cure | How |
|---|---|
| Use stronger model | Linear → Random Forest → Neural Network |
| Add more features | Polynomial features, more columns |
| Reduce regularization | Lower alpha, or raise C (in scikit-learn, C is the inverse of regularization strength) |
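To see why reducing regularization helps an underfit model, here is a minimal sketch using one-feature ridge regression without an intercept, where the slope has the closed form w = Σxy / (Σx² + alpha). The toy data and the helper names `ridge_slope` and `mse` are assumptions for illustration only.

```python
def ridge_slope(xs, ys, alpha):
    """Closed-form slope for y ~ w * x with an L2 penalty alpha * w**2 (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + alpha)


def mse(xs, ys, w):
    """Mean squared error of the line y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0 * x for x in xs]  # toy data: the true slope is 3

# Going from heavy regularization to none lets the slope move toward 3.
for alpha in (100.0, 10.0, 0.0):
    w = ridge_slope(xs, ys, alpha)
    print(f"alpha={alpha:>5}: slope={w:.3f}, training MSE={mse(xs, ys, w):.3f}")
```

With a large alpha the slope is squeezed toward zero and the model cannot follow even this simple pattern. As alpha drops, the training error falls.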

For Overfitting (Model is too complex):

| Cure | How |
|---|---|
| Get more data | Collect more samples |
| Simplify model | Reduce layers, depth, neurons |
| Add regularization | L1 (Lasso), L2 (Ridge), Dropout |
| Early stopping | Stop training when validation stops improving |
| Reduce features | Remove useless columns |
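Early stopping is the easiest of these cures to show in code. The sketch below assumes you already have one validation loss per epoch. The function name `early_stopping_epoch` and the `patience` parameter are illustrative choices, not a specific library's API, and the loss values are made up.

```python
def early_stopping_epoch(val_losses, patience=3):
    """Return (best_epoch, best_loss), stopping once the validation loss
    has failed to improve for `patience` consecutive epochs."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # validation stopped improving: stop training here
    return best_epoch, best_loss


# Made-up validation losses: improving until epoch 2, then getting worse.
losses = [0.90, 0.70, 0.60, 0.65, 0.66, 0.70, 0.50]
print(early_stopping_epoch(losses, patience=3))  # (2, 0.6)
```

Note that the last value (0.50) is never reached: training stops at epoch 5 after three epochs without improvement. In a real training loop you would also save the model weights from the best epoch.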

One Simple Example #

Problem: Predict house prices.

| Model | Training Error | Validation Error | Issue |
|---|---|---|---|
| Linear Regression | $80,000 | $82,000 | Underfitting (too simple) |
| Deep Neural Network | $5,000 | $75,000 | Overfitting (memorized) |
| Random Forest (tuned) | $35,000 | $38,000 | ✅ Good fit |
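In practice you would pick the model by its validation error, not its training error. Here is a small sketch using the numbers from the house price table. The helper name `pick_best` is an assumption for this example.

```python
# (training error, validation error) in dollars, copied from the house price table
results = {
    "Linear Regression": (80_000, 82_000),
    "Deep Neural Network": (5_000, 75_000),
    "Random Forest (tuned)": (35_000, 38_000),
}


def pick_best(results):
    """Return the name of the model with the lowest validation error."""
    return min(results, key=lambda name: results[name][1])


for name, (train_err, val_err) in results.items():
    print(f"{name}: gap = ${val_err - train_err:,}")

best = pick_best(results)
print("Best on validation:", best)
```

The neural network has by far the lowest training error, but its $70,000 gap shows that most of that is memorization. The tuned Random Forest wins where it counts.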

The Goldilocks Rule #

- Too simple model = Underfit ❌

- Too complex model = Overfit ❌

- Just right model = Good Fit ✅

You want the “just right” model.
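You can watch the Goldilocks effect happen with a toy experiment. The sketch below uses k-nearest-neighbors regression on synthetic noisy sine data, chosen only because one knob (`k`) controls model complexity. Neither the model nor the data comes from the example above, and all names here are illustrative. Small `k` means a very flexible model and large `k` means a very rigid one.

```python
import math
import random


def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def mse(train, data, k):
    """Mean squared error of the k-NN model (fit on `train`) over `data`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)


def make_data(n, rng):
    """Noisy samples of sin(x) on [0, 2*pi]; synthetic data for illustration."""
    data = []
    for _ in range(n):
        x = rng.uniform(0.0, 2.0 * math.pi)
        data.append((x, math.sin(x) + rng.gauss(0.0, 0.3)))
    return data


rng = random.Random(0)  # fixed seed so the run is repeatable
train = make_data(100, rng)
val = make_data(100, rng)

scores = {}
for k in (1, 10, 100):
    scores[k] = (mse(train, train, k), mse(train, val, k))
    print(f"k={k:>3}: training MSE={scores[k][0]:.3f}, validation MSE={scores[k][1]:.3f}")
```

With `k=1` every training point is its own nearest neighbor, so the training error is zero: pure memorization. With `k=100` the model predicts the same average everywhere and does poorly on both sets. A middle value such as `k=10` typically gives the lowest validation error.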

Quick Quiz #

Q1: Training accuracy = 99%, Validation accuracy = 55%. Problem?

A1: Overfitting.

Q2: Training accuracy = 60%, Validation accuracy = 58%. Problem?

A2: Underfitting.

Q3: How to fix overfitting?

A3: More data, simplify model, add regularization, early stopping.

Key Takeaways (3 Lines) #

- Underfitting = Model too weak. Cure: Make it stronger.

- Overfitting = Model too complex. Cure: Simplify or add more data.

- Compare training and validation scores. Big gap = overfitting. Both low = underfitting.