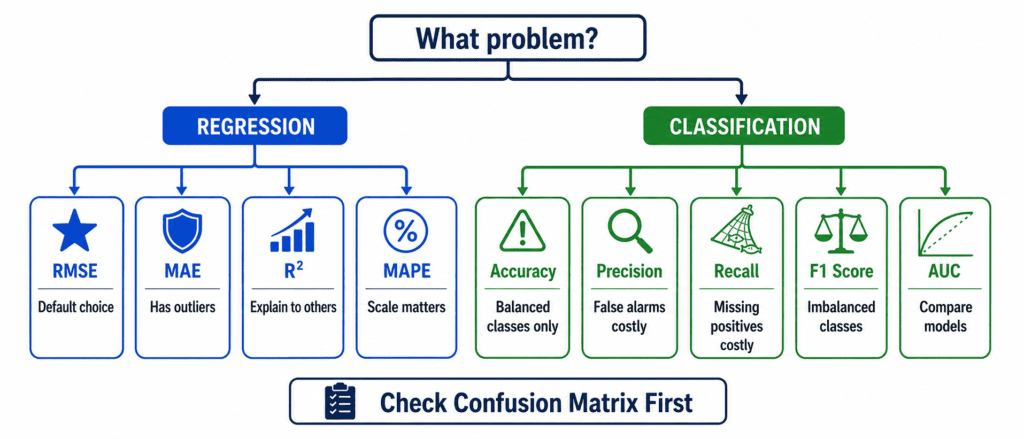

No single metric works for every problem. Your choice depends on three things: your data, your goal, and the cost of being wrong.

Regression Problems (Predicting Numbers)

Use RMSE as your default. It is the most commonly reported regression metric. It is in the same units as your target. It squares each error before averaging, so large errors are penalized more than small ones.

Use MAE when you have many outliers. RMSE gets distorted by extreme values. MAE stays stable. If your data has crazy outliers you cannot remove, pick MAE.
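To see how differently the two metrics react to one extreme value, here is a minimal sketch in plain Python. The data is made up for illustration, and the helper names `rmse` and `mae` are hypothetical, not taken from any library.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error: square each error, average, take the root."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error: average of the absolute errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Illustrative data: the true value is always 100.
y_true = [100, 100, 100, 100]
clean = [110, 90, 110, 90]      # every prediction is off by 10
with_outlier = [110, 90, 110, 10]  # the last prediction is off by 90

print(rmse(y_true, clean), mae(y_true, clean))                # 10.0 10.0
print(rmse(y_true, with_outlier), mae(y_true, with_outlier))  # about 45.83 and 30.0
```

One bad prediction triples MAE but multiplies RMSE by more than four, because the squared error of 90 dominates the average.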

Use R² when explaining to non-technical people. “My model explains 85% of the variation” sounds better than “My average error is $50,000.” Both are true. R² sounds impressive.
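The "explains X% of the variation" reading comes straight from how R² is computed: one minus the ratio of the model's squared error to the squared error of always predicting the mean. A small sketch, with made-up numbers and a hypothetical helper name `r_squared`:

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - (residual sum of squares / total sum of squares)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # model's squared error
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)          # error of predicting the mean
    return 1 - ss_res / ss_tot

# Illustrative data only.
y_true = [200, 300, 400, 500]
y_pred = [220, 280, 410, 490]

print(r_squared(y_true, y_pred))            # close to 0.98
print(r_squared(y_true, [350, 350, 350, 350]))  # 0.0: no better than the mean
```

A model that only ever predicts the mean scores 0, and a model worse than that can go negative.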

Use MAPE when errors should scale with value. Predicting a $10 item off by $5 is a 50% error. Predicting a $1,000 item off by $5 is a 0.5% error. MAPE captures this difference.
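The same $5 miss can be checked with a few lines of Python. This is a sketch with a hypothetical helper `mape`. Note one caveat it makes visible: the formula divides by the true value, so it is undefined when any true value is zero.

```python
def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent. Undefined if any true value is 0."""
    return 100 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)

cheap_item = mape([10], [15])        # $10 item, prediction off by $5
pricey_item = mape([1000], [1005])   # $1,000 item, prediction off by $5

print(cheap_item)   # about 50 (percent)
print(pricey_item)  # about 0.5 (percent)
```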

Classification Problems (Predicting Categories)

Use Accuracy only when classes are roughly balanced. If you have 50% spam and 50% not spam, accuracy works. The more imbalanced the classes, the less it tells you.
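A quick way to see why accuracy fails on imbalanced data is to score a "model" that never predicts the positive class. The sketch below uses made-up labels (1 = spam, 0 = not spam) and a hypothetical helper `accuracy`.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Illustrative data: 1 spam email among 100.
y_true = [1] * 1 + [0] * 99
always_not_spam = [0] * 100  # a useless model that never flags anything

score = accuracy(y_true, always_not_spam)
print(score)  # 0.99, even though the model catches zero spam
```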

Use Precision when false alarms are costly. Spam filter marks a good email as spam → you miss something important. Recommendation system shows bad suggestions → user leaves. In these cases, be sure before you say YES.

Use Recall when missing a positive is costly. Cancer test misses a sick patient → they die. Fraud detection misses fraud → bank loses money. Security system misses a threat → disaster. Catch everything. Worry about false alarms later.

Use F1 Score when you need balance. Most real problems care about both precision and recall. F1 gives you one number that respects both. Also use F1 when classes are imbalanced. It handles 99% to 1% ratios much better than accuracy.
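Precision, recall, and F1 all come from the same three counts: true positives, false positives, and false negatives. A minimal sketch, assuming binary labels where 1 is the positive class. The function name and the data are illustrative.

```python
def precision_recall_f1(y_true, y_pred):
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Guard against division by zero when there are no predicted or actual positives.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Illustrative data: 4 actual positives, 3 predicted positives, 2 of them correct.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)  # about 0.667, 0.5, about 0.571
```

F1 is the harmonic mean of the two, so it sits closer to the lower value and drops sharply if either precision or recall is poor.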

Use AUC when comparing two models. You do not know the right threshold yet. AUC summarizes how well a model ranks positives above negatives across all possible thresholds, so it does not depend on picking one. Higher AUC generally wins.
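One way to read AUC without any threshold: it is the probability that a randomly chosen positive gets a higher score than a randomly chosen negative. The sketch below computes it by comparing every positive/negative pair, which is fine for small examples but slow on large data. The helper `roc_auc`, the scores, and the model names are all illustrative.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Illustrative data: two hypothetical models scoring the same four examples.
y_true = [0, 0, 1, 1]
model_a = [0.1, 0.4, 0.35, 0.8]  # ranks one negative above one positive
model_b = [0.1, 0.2, 0.7, 0.9]   # ranks every positive above every negative

auc_a = roc_auc(y_true, model_a)
auc_b = roc_auc(y_true, model_b)
print(auc_a, auc_b)  # 0.75 1.0
```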

The Golden Rule

For classification, always look at the confusion matrix before trusting any single metric. One number hides the truth. The four counts (true positives, false positives, false negatives, true negatives) tell the full story.
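For a binary problem, the four cells of the confusion matrix can be tallied in a few lines. This is a sketch with made-up labels and a hypothetical helper `confusion_counts`. It shows a model whose accuracy looks fine while most positives are missed.

```python
from collections import Counter

def confusion_counts(y_true, y_pred):
    """Count the four confusion-matrix cells for binary labels (1 = positive)."""
    pairs = Counter(zip(y_true, y_pred))  # keys are (true label, predicted label)
    return {
        "tp": pairs[(1, 1)],
        "fp": pairs[(0, 1)],
        "fn": pairs[(1, 0)],
        "tn": pairs[(0, 0)],
    }

# Illustrative data: 10 positives and 90 negatives.
y_true = [1] * 10 + [0] * 90
y_pred = [1] * 4 + [0] * 6 + [1] * 5 + [0] * 85

counts = confusion_counts(y_true, y_pred)
print(counts)  # {'tp': 4, 'fp': 5, 'fn': 6, 'tn': 85}

accuracy = (counts["tp"] + counts["tn"]) / len(y_true)
recall = counts["tp"] / (counts["tp"] + counts["fn"])
print(accuracy, recall)  # 0.89 0.4
```

Accuracy of 89% looks acceptable on its own, yet the matrix shows that 6 of the 10 positives were missed.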

Quick Summary

| Your Situation | Use This |
|---|---|
| Default regression | RMSE |
| Data has outliers | MAE |
| Explain to boss | R² or MAPE |
| Balanced classes | Accuracy |
| False alarms hurt | Precision |
| Missing positives kills | Recall |
| Imbalanced classes | F1 Score |
| Comparing models | AUC |

One Final Truth

No metric is perfect. Pick one that matches your business goal. If catching fraud saves $1,000 per catch but false alarms cost $10 each, optimize recall. If false alarms cost $1,000 and missed fraud costs $10, optimize precision.
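The cost argument can be made concrete by pricing each kind of mistake and totaling the bill. The sketch below compares two hypothetical models under both cost scenarios. The error counts are invented for illustration, and `total_cost` is not a library function.

```python
def total_cost(fp, fn, cost_fp, cost_fn):
    """Total cost of errors: false alarms plus missed positives, each at its own price."""
    return fp * cost_fp + fn * cost_fn

# Hypothetical error counts for two models on the same test set.
high_recall_model = {"fp": 50, "fn": 2}      # flags a lot, misses little
high_precision_model = {"fp": 5, "fn": 20}   # flags rarely, misses more

# Scenario 1: a missed fraud costs $1,000, a false alarm costs $10.
s1_recall = total_cost(**high_recall_model, cost_fp=10, cost_fn=1000)
s1_precision = total_cost(**high_precision_model, cost_fp=10, cost_fn=1000)
print(s1_recall, s1_precision)  # 2500 20050: the high-recall model is cheaper

# Scenario 2: a false alarm costs $1,000, a missed fraud costs $10.
s2_recall = total_cost(**high_recall_model, cost_fp=1000, cost_fn=10)
s2_precision = total_cost(**high_precision_model, cost_fp=1000, cost_fn=10)
print(s2_recall, s2_precision)  # 50020 5200: the high-precision model is cheaper
```

The same two models swap places when the prices swap.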

Let the cost decide the metric.